Laurent Giraid is a technologist working at the intersection of artificial intelligence and physical robotics. Specializing in machine learning and the ethical frameworks of autonomous systems, he has spent years investigating how natural language processing can bridge the gap between human intent and machine action. His work centers on making advanced AI accessible, moving beyond the closed-door development cycles of industrial giants toward a more open, scientific approach to robotics. In this conversation, we explore the shift from manual human training to massive-scale synthetic simulation and what it means for the future of generalist robotic agents.

Traditional robotic training often requires tens of thousands of human-led demonstrations over many months. How does moving toward procedurally generated trajectories in virtual environments change the economic barriers for smaller labs, and what specific steps are taken to ensure these synthetic paths translate effectively to physical hardware?

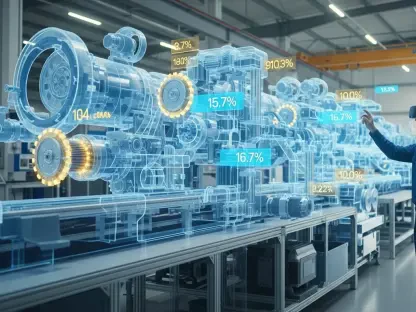

The shift to procedural generation sharply lowers the cost of entry to the field. When you look at projects like Google DeepMind’s RT-1, which required 130,000 episodes collected over 17 months by human operators, the barrier to entry is astronomical for any lab without massive capital. By using systems like MolmoSpaces, we can bypass the grueling 350 hours of human effort seen in datasets like DROID and instead generate 1.8 million expert trajectories using physics engines. To ensure this translates to the real world, we use aggressive domain randomization, where we constantly vary the objects, viewpoints, and lighting within the simulation. This forces the model to learn the underlying physics of manipulation rather than just memorizing a specific visual scene, creating a robust foundation that survives the transition to physical hardware.
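To make the idea of domain randomization concrete, here is a minimal Python sketch of drawing a fresh scene configuration for every simulated episode. It is not the MolmoSpaces pipeline: the object list, the parameter names (`camera_yaw_deg`, `light_intensity`, `friction`), and all numeric ranges are assumptions chosen only for illustration.

```python
import random
from dataclasses import dataclass

# Illustrative assumptions only: these objects and ranges are not MolmoSpaces settings.
OBJECTS = ["mug", "bowl", "can", "block"]


@dataclass(frozen=True)
class SceneConfig:
    object_name: str        # which object is placed in the scene
    camera_yaw_deg: float   # viewpoint around the workspace
    camera_height_m: float  # camera height above the table
    light_intensity: float  # relative brightness multiplier
    friction: float         # sliding friction coefficient (a dynamics parameter)


def sample_scene(rng: random.Random) -> SceneConfig:
    """Draw one randomized scene; each episode gets an independent draw."""
    return SceneConfig(
        object_name=rng.choice(OBJECTS),
        camera_yaw_deg=rng.uniform(-45.0, 45.0),
        camera_height_m=rng.uniform(0.4, 1.2),
        light_intensity=rng.uniform(0.3, 1.5),
        friction=rng.uniform(0.5, 1.2),
    )


def sample_scenes(n: int, seed: int = 0) -> list[SceneConfig]:
    """Generate n scene configurations reproducibly from a seed."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for cfg in sample_scenes(3):
        print(cfg)
```

Seeding the generator keeps a batch of scenes reproducible, which helps when a failure in training needs to be traced back to the exact configuration that produced it.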

Systems trained entirely on synthetic data have recently outperformed those trained on real-world demonstrations in pick-and-place tasks. What technical factors allow a model to achieve nearly 80% success with unseen objects, and how do you handle the transition to mobile manipulation tasks like opening doors?

The success we are seeing—specifically a 79.2% success rate in tabletop tasks—stems from the sheer volume and diversity of the data. When a model is exposed to nearly two million trajectories, it encounters a much wider variety of edge cases than a human operator could ever provide manually. This allows the primary model, built on the Molmo2 vision-language backbone, to generalize its understanding of geometry and friction to objects it has never seen before. For more complex mobile tasks like door opening, the policy processes multiple timesteps of RGB observations and language instructions simultaneously. This temporal awareness allows the robot to approach, grasp, and pull a door through its full range of motion with a fluidity that was previously thought to require extensive real-world fine-tuning.
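The phrase "multiple timesteps of RGB observations" can be pictured as a sliding window of recent frames paired with the instruction. The sketch below is one simple way to maintain such a window in Python; the class name `ObservationWindow`, the default horizon of 4 frames, and the first-frame padding rule are assumptions for illustration, not details of the MolmoBot policy.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Any


@dataclass
class ObservationWindow:
    """Keeps the most recent `horizon` frames for one episode."""

    horizon: int = 4  # assumed window length, not a figure from the interview
    frames: deque = field(init=False)

    def __post_init__(self) -> None:
        # A bounded deque drops the oldest frame automatically when full.
        self.frames = deque(maxlen=self.horizon)

    def push(self, frame: Any) -> None:
        """Add the newest frame, padding with copies of it on the first step."""
        if not self.frames:
            self.frames.extend([frame] * self.horizon)
        else:
            self.frames.append(frame)

    def policy_input(self, instruction: str) -> dict:
        """Bundle the frame history (oldest first) with the language instruction."""
        return {"frames": list(self.frames), "instruction": instruction}


if __name__ == "__main__":
    window = ObservationWindow(horizon=3)
    for step in range(5):
        window.push(f"rgb_frame_{step}")  # stand-in for an image array
    print(window.policy_input("open the door"))
```

Padding the window on the first step means the policy always receives a fixed number of frames, so the same model input shape applies from the start of an episode to its end.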

Reducing the “sim-to-real gap” is often attempted by adding more physical data, but diversifying lighting, viewpoints, and physics in simulation is a different path. How do you balance aggressive domain randomization with the need for physical accuracy, and what metrics determine if a virtual world is “good enough”?

We took a calculated bet that the sim-to-real gap shrinks more effectively when you expand the diversity of the virtual environment rather than just adding a trickle of real-world data. We use the MuJoCo physics engine as our ground truth for accuracy, but then we “stress test” the model by varying every visual and dynamic parameter. A virtual world is deemed “good enough” when the model demonstrates zero-shot transfer, meaning it can perform in a physical kitchen or lab without a single second of additional training. In our evaluations, this synthetic-heavy approach allowed our models to double the success rate of models like π0.5, which relied on extensive real-world demonstrations. It proves that a “noisy” but vast simulation is often more instructive for a generalist agent than a “perfect” but limited real-world dataset.

Implementing generalist agents across different platforms like mobile manipulators or tabletop arms presents unique challenges. What are the trade-offs when choosing between a heavy vision-language backbone and lightweight transformer policies for edge computing, and how do you ensure cross-platform compatibility without any additional fine-tuning?

The choice really comes down to the available compute and the complexity of the environment. Our primary MolmoBot model uses a heavy vision-language backbone for maximum reasoning capability, but for edge environments with limited power, we developed MolmoBot-SPOC, a lightweight transformer policy with significantly fewer parameters. To ensure these policies work across different hardware, like the Rainbow Robotics RB-Y1 or the Franka FR3, we focus on a universal action space that treats the robot’s physical configuration as a set of variables rather than a fixed constraint. This flexibility is vital because it allows an organization to deploy physical AI without being locked into one specific hardware vendor or having to rebuild its software stack from scratch.
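The interview does not spell out how the universal action space is implemented. One common reading is that the policy emits normalized actions and a per-robot description converts them into hardware commands. The Python sketch below illustrates only that reading: the `Embodiment` class, the normalized range of [-1, 1], and the joint limits shown are hypothetical placeholders, not specifications of the RB-Y1, the FR3, or MolmoBot.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Embodiment:
    """Per-robot configuration treated as data rather than hard-coded logic."""

    name: str
    lower: tuple[float, ...]  # lower joint limits (placeholder values)
    upper: tuple[float, ...]  # upper joint limits (placeholder values)

    @property
    def dof(self) -> int:
        return len(self.lower)


def to_joint_targets(action: Sequence[float], robot: Embodiment) -> list[float]:
    """Map a normalized action in [-1, 1] onto this robot's joint ranges.

    The policy is assumed to emit a fixed-length vector; a robot with fewer
    degrees of freedom uses only the first `robot.dof` entries.
    """
    if len(action) < robot.dof:
        raise ValueError(f"action has {len(action)} entries, {robot.name} needs {robot.dof}")
    targets = []
    for a, lo, hi in zip(action, robot.lower, robot.upper):
        a = max(-1.0, min(1.0, a))  # clamp out-of-range policy outputs
        targets.append(lo + (a + 1.0) * 0.5 * (hi - lo))
    return targets


if __name__ == "__main__":
    # Hypothetical two-joint robots with made-up limits.
    small_arm = Embodiment("small_arm", lower=(-1.0, 0.0), upper=(1.0, 2.0))
    wide_arm = Embodiment("wide_arm", lower=(-3.0, -1.5), upper=(3.0, 1.5))
    shared_action = [0.0, 0.5, 0.0]  # same policy output for both robots
    print(to_joint_targets(shared_action, small_arm))
    print(to_joint_targets(shared_action, wide_arm))
```

The design point is that the same policy output drives both robots; only the `Embodiment` record changes, which is what keeps the software stack independent of a particular vendor.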

Generating over 1,000 episodes per GPU-hour allows for massive data throughput compared to human-led collection. How does this acceleration impact the traditional development cycle for physical AI, and what are the primary hurdles in managing a dataset of nearly two million trajectories during the training phase?

This level of throughput—roughly 1,024 episodes per GPU-hour—effectively means we are getting 130 hours of robot experience for every single hour of real time. This compresses a development cycle that used to take years into a matter of weeks, dramatically increasing the return on investment for robotics research. The primary hurdle isn’t the collection itself anymore; it’s the data engineering required to pipe 1.8 million trajectories into a training cluster of 100 Nvidia A100 GPUs. We have to ensure that the quality of these “expert” trajectories remains high and that the diversity is distributed evenly so the model doesn’t develop biases toward certain types of movements or objects.
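The scale of that speedup is easier to see with the figures quoted in this conversation worked through as arithmetic. The Python sketch below uses only numbers from the interview (1,024 episodes per GPU-hour, 1.8 million trajectories, RT-1's 130,000 episodes). Two things are assumptions: that 100 GPUs could be devoted to generation, since the interview mentions that cluster size only for training, and that every generated episode is kept, with no filtering of failed trajectories.

```python
EPISODES_PER_GPU_HOUR = 1_024    # throughput quoted in the interview
TARGET_TRAJECTORIES = 1_800_000  # dataset size quoted in the interview
RT1_EPISODES = 130_000           # RT-1 episodes, collected over 17 months

# Assumption: the interview cites 100 A100s for training; reusing that
# count for data generation is only an illustration.
ASSUMED_GENERATION_GPUS = 100


def gpu_hours(episodes: int, rate: float = EPISODES_PER_GPU_HOUR) -> float:
    """GPU-hours needed to generate `episodes`, assuming none are discarded."""
    return episodes / rate


def wall_clock_hours(episodes: int, gpus: int, rate: float = EPISODES_PER_GPU_HOUR) -> float:
    """Wall-clock hours if generation parallelizes perfectly across `gpus`."""
    return gpu_hours(episodes, rate) / gpus


if __name__ == "__main__":
    print(f"RT-1-sized dataset: {gpu_hours(RT1_EPISODES):.1f} GPU-hours")
    print(f"1.8M trajectories:  {gpu_hours(TARGET_TRAJECTORIES):.1f} GPU-hours")
    hours = wall_clock_hours(TARGET_TRAJECTORIES, ASSUMED_GENERATION_GPUS)
    print(f"On {ASSUMED_GENERATION_GPUS} GPUs: {hours:.1f} wall-clock hours")
```

Under these assumptions, an RT-1-sized dataset corresponds to roughly 127 GPU-hours, and the full 1.8 million trajectories to roughly 1,758 GPU-hours, or under a day of wall-clock time on 100 GPUs. Real pipelines would take longer once failed or low-quality episodes are filtered out.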

Open-source stacks for training and simulation are becoming more common in the robotics field. How does providing the global research community with access to full generation pipelines and model architectures prevent vendor lock-in, and what are the practical implications for internal auditing and system adaptation?

Open-sourcing the entire stack, from the MolmoBot-Data dataset to the generation pipelines, is essential because it shifts the power back to the researchers and away from a few wealthy industrial labs. When the architecture is transparent, an organization can perform its own internal auditing to ensure the AI behaves safely and predictably in its specific environment. It also allows for rapid adaptation: if a researcher needs the robot to perform a niche task in a specialized lab, they can modify the simulation parameters themselves rather than waiting for a proprietary update. We believe that for AI to truly advance science, it must be built on shared infrastructure that anyone can test, improve, and deploy.

What is your forecast for physical AI?

I believe we are entering an era where the constraint in robotics will no longer be how many robots we can manually train, but how creatively we can design virtual worlds. Within the next few years, we will see a surge in generalist agents that can move between homes, hospitals, and factories with zero-shot adaptability because they have already “lived” thousands of years of experience in simulation. This will lead to a massive reduction in the cost of deploying physical AI, making it a standard scientific instrument accessible to researchers globally rather than a luxury for the few. The wall between the digital and physical worlds is becoming increasingly porous, and synthetic data is the bridge that will finally allow AI to step out of the screen and into our daily lives.