The arrival of the peak shopping season serves as the ultimate truth serum for warehouse automation, stripping away the polished success of controlled trials to reveal how systems actually perform under duress. While many robotic solutions thrive in quiet, optimized pilot programs, the extreme volume and unrelenting pace of the holidays expose hidden flaws in reliability, integration, and logic. For logistics providers, this period is no longer just about meeting consumer demand; it is a critical evaluation of whether their multimillion-dollar investments are production-ready or merely expensive experiments. In the high-pressure environment of a fulfillment center during a promotion cycle, a single systemic bottleneck can quickly escalate into a costly backlog. This reality forces a shift in perspective from technical potential to operational durability, where the metric that matters most is the ability to maintain throughput when the facility is pushed to its limits.

The High Stakes of Modern Industrial Automation

Transitioning from Trials: Real-World Demand

Industrial automation is shifting from experimental curiosity to a cornerstone of the American supply chain. According to recent industry reports, the United States is poised to see a record 45,000 new industrial robot installations this year alone. This surge is not merely a quantitative increase; it represents a qualitative shift in how automation is being adopted, driven by reshoring initiatives and a growing democratization of technology that allows smaller operators to compete. Large-scale adopters are simultaneously pushing the boundaries of what is possible, as seen with industry leaders like Amazon, which reached a milestone in 2025 by deploying its one-millionth robot across its global network. The transition from small-scale testing to full-scale deployment represents the next maturation phase for the logistics industry.

However, the move toward “brownfield” facilities—existing warehouses with legacy constraints—adds a layer of complexity, as these older environments are rarely optimized for the precision that digital systems require. Most automation was originally designed for “greenfield” sites where every floor joint and Wi-Fi access point is mapped to the millimeter. In a brownfield environment, robots must navigate uneven flooring, erratic lighting, and varying aisle widths that were established decades ago. This creates a high-stakes environment where the delta between “demo-ready” and “production-ready” becomes a matter of survival for the enterprise. When a company attempts to drop advanced autonomous mobile robots into a facility designed for manual pallet jacks, the friction between old-world architecture and new-world technology becomes a primary point of failure. Success in these environments requires more than just high-quality hardware; it demands a sophisticated understanding of how to adapt cutting-edge logic to the physical limitations of existing infrastructure.

Scaling for the Peak: Operational Resilience

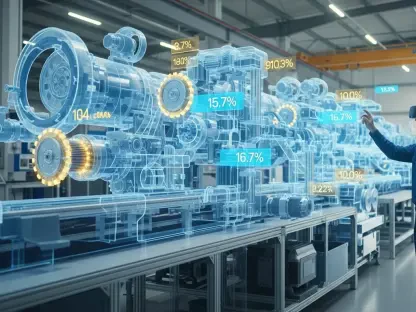

As the industry matures, the focus has shifted from whether a robot can perform a specific task to whether it can sustain that performance during the most demanding weeks of the year. During peak season, traffic density within a warehouse rises sharply, creating a chaotic environment that challenges the navigation algorithms of even the most advanced autonomous systems. A robot that operates flawlessly when it has the aisle to itself may struggle when forced to navigate around dozens of human pickers and other robotic units. This “density tax” often degrades performance precisely when the facility needs it most. Engineers must now design systems that account for this congestion, ensuring that the path-planning logic remains efficient even when the environment is constantly changing. The true test of a robotic fleet is its ability to maintain its cycle times while sharing a crowded floor with a seasonal workforce that may not be fully trained on how to interact with automated machinery.

Furthermore, the financial implications of robotic downtime are magnified during the holiday rush, turning minor technical glitches into significant business risks. In a standard operational month, a service interruption might be an inconvenience, but during peak, every minute of lost production represents thousands of dollars in unfulfilled orders and potential late-delivery penalties. This pressure has led to a renewed emphasis on “preventative maintenance 2.0,” where predictive analytics are used to identify potential hardware failures before they occur. Logistics providers are increasingly looking for robotic partners who offer not just the machines, but a comprehensive support ecosystem that includes rapid response times and on-site spare parts management. The ability to scale support alongside the increased physical volume is what separates a successful automation strategy from a failed one. In this environment, the robustness of the service contract is often just as important as the specifications of the robot’s sensors or motors.

Defining Reliability Beyond the Laboratory

Managing Uptime: Hostile Warehouse Environments

True robotic reliability is often misunderstood because, in many trial runs, the robots operate in a “curated” vacuum with perfect lighting, clean floors, and uniform packaging. In a live warehouse, however, the environment is frequently hostile to sensitive electronics and precise sensors, plagued by dust, fluctuating network quality, and irregular material handling. Dust accumulation on LIDAR sensors can lead to “phantom obstacles,” causing robots to stop abruptly for no apparent reason, while dead zones in a warehouse’s wireless network can leave a fleet stranded mid-mission. When a system is only tested under ideal conditions, it lacks the resilience needed to survive the messy, unpredictable reality of a high-volume logistics hub. Companies are finding that they must invest heavily in environmental hardening, such as improved sensor shielding and industrial-grade mesh networks, to ensure that their digital workforce remains operational regardless of the physical conditions within the building.

Reliability during the peak season is redefined by how a system handles a “compounding failure” rather than the total absence of errors. In normal months, a minor one-second delay in a robot’s communication might go unnoticed, but during peak demand, that same delay repeated thousands of times creates a massive bottleneck. These micro-stoppages aggregate into a systemic slowdown that can paralyze a fulfillment center. A production-ready robotic system must be able to isolate these small technical “blips” and maintain a steady flow, ensuring that a single component’s struggle doesn’t lead to a total systemic collapse. This requires a shift toward decentralized control architectures where individual robots can make local decisions if they lose connection to the central server. By building redundancy into the software and hardware layers, operators can create a “graceful degradation” model where the system stays productive even if it is not operating at one hundred percent efficiency, preventing the dreaded total facility blackout.
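The arithmetic behind a compounding failure is easy to sketch. The Python snippet below is only an illustration: the fleet sizes, stop counts, and shift lengths are hypothetical figures chosen to show how the same one-second delay aggregates, not measurements from any facility.

```python
def lost_robot_hours(delay_s, stops_per_robot_hour, robots, hours):
    """Robot-hours lost when every robot repeats the same small delay."""
    total_delay_s = delay_s * stops_per_robot_hour * robots * hours
    return total_delay_s / 3600.0


# Hypothetical scenarios: identical one-second delay, different scale.
quiet_month = lost_robot_hours(delay_s=1.0, stops_per_robot_hour=20,
                               robots=50, hours=8)
peak_week = lost_robot_hours(delay_s=1.0, stops_per_robot_hour=120,
                             robots=200, hours=20)

print(f"quiet: {quiet_month:.1f} robot-hours lost per day")  # quiet: 2.2
print(f"peak:  {peak_week:.1f} robot-hours lost per day")    # peak:  133.3
```

Under these assumed numbers, a delay that costs a couple of robot-hours on a quiet day costs more than a hundred at peak, even though nothing about the delay itself has changed.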

The Impact of Variability: Material and Human Factors

The unpredictability of the items being handled represents another significant hurdle for robotic reliability in a live production environment. While a pilot program might use a dozen standard-sized boxes, a real-world peak season involves a dizzying array of packaging types, from polybags and bubble mailers to oversized, non-conveyable items. Sensors that were calibrated for matte cardboard may struggle with the glare of plastic shrink-wrap or the lack of structural integrity in a partially filled envelope. If a robotic arm or sorter cannot reliably detect and grasp these varied formats, the resulting drop in “first-pass yield” creates a massive amount of manual rework for the human staff. Successful automation must therefore incorporate advanced computer vision and machine learning models that have been trained on millions of real-world edge cases. The goal is to create a system that is as adaptable as a human hand and eye, capable of recognizing a product regardless of its orientation or the condition of its packaging.

In addition to material variability, the human element remains one of the most volatile factors in the reliability equation. During peak season, warehouses are flooded with temporary laborers who may not have the same level of familiarity with robotic safety protocols as the permanent staff. This leads to more frequent emergency stop activations and blocked pathways as humans and robots learn to coexist in a high-speed environment. Reliability is compromised when robots are programmed with overly conservative safety buffers that cause them to freeze whenever a human enters their peripheral vision. Modern systems are now utilizing more sophisticated “intent prediction” algorithms to differentiate between a person walking past a robot and a person stepping into its path. By refining these interactions, warehouses can maintain higher speeds without compromising safety, ensuring that the presence of a seasonal workforce does not inadvertently throttle the productivity of the automated systems.

Navigating the Chaos of Exceptions

Standardizing Exceptions: Human Roles

In any warehouse, perfection is a myth, as robots are constantly forced to deal with damaged cartons, obscured barcodes, and mismatched inventory. During peak season, the sheer volume of these “exceptions” can overwhelm a system if it hasn’t been designed to handle the “long tail” of operational anomalies. If every small error requires a highly trained engineer to step in and fix it, the automation ceases to be an efficiency tool and instead becomes a significant labor burden. This phenomenon, often called the “automation paradox,” occurs when a system designed to reduce headcount actually requires more specialized staff to keep it running. To avoid this trap, facilities are developing “designed workflows” for exception handling, where the robot can automatically divert a problematic item to a dedicated human station without stopping the rest of its tasks. This ensures that the primary flow remains uninterrupted while anomalies are resolved in parallel by the existing workforce.
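A diversion workflow of this kind can be sketched in a few lines of Python. The class names, exception reasons, and item fields below are hypothetical, invented for illustration; the point is only that a problem item is tagged with a reason and queued for a human station while clean items keep flowing.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Item:
    item_id: str
    barcode: Optional[str]  # None models an unreadable label
    damaged: bool = False


@dataclass
class Sorter:
    main_lane: list = field(default_factory=list)
    exception_queue: deque = field(default_factory=deque)

    def process(self, item: Item) -> str:
        """Route one item; never block the main lane on an exception."""
        reason = self._exception_reason(item)
        if reason is None:
            self.main_lane.append(item.item_id)
            return "main"
        # Divert with a reason code so the human station knows what to fix.
        self.exception_queue.append((item.item_id, reason))
        return "diverted"

    @staticmethod
    def _exception_reason(item: Item) -> Optional[str]:
        if item.barcode is None:
            return "unreadable_label"
        if item.damaged:
            return "damaged_carton"
        return None


sorter = Sorter()
items = [
    Item("A1", "0123"),
    Item("A2", None),
    Item("A3", "0456", damaged=True),
    Item("A4", "0789"),
]
outcomes = [sorter.process(item) for item in items]
print(outcomes)  # ['main', 'diverted', 'diverted', 'main']
```

The reason code travels with the item, so the associate at the exception station starts from a category rather than from a diagnosis.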

Successful robotic deployment depends on creating a designed workflow that keeps human workers in the loop as efficient “exception handlers.” The goal is to move away from constant manual triage and toward a system that remains calm as variability increases. By categorizing common failure modes—such as an unreadable label or a crushed corner—and building repeatable resolution processes, companies can ensure that their robotic fleet supports the workforce. Instead of employees “rescuing” stalled machines, they become high-level supervisors who manage the flow of information and physical goods. This transition requires a shift in training and organizational culture, where warehouse associates are taught to interact with the software interfaces of the robotic system. When a human can clear an error code or redirect a bin with a few taps on a tablet, the entire operation gains the flexibility needed to weather the unpredictable storms of the peak shopping season.

Designing for Failure: Recovery and Rework

A robust automation strategy must prioritize the speed of recovery over the avoidance of failure, acknowledging that some level of error is inevitable in complex systems. During peak volume, the most critical metric for an exception handling process is the “Mean Time to Recovery” (MTTR). If a robotic sorter jams, the system should be able to automatically reroute traffic to other modules while providing clear, visual instructions to a technician on how to clear the obstruction. Systems that require a full power-cycle or a complex software reboot after a minor physical jam are simply not viable for high-throughput environments. Instead, leading-edge platforms are incorporating “self-healing” logic that can recalibrate sensors or reset motor controllers on the fly. By minimizing the duration of each interruption, the facility can maintain its overall momentum, preventing a single exception from snowballing into a facility-wide delay that impacts thousands of customers.
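MTTR itself is simple to compute once failure and recovery timestamps are logged. The sketch below assumes incidents are recorded as (failed_at, recovered_at) pairs; the timestamps are made up for the example.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents):
    """Average of (recovered - failed) across incidents, as a timedelta."""
    if not incidents:
        raise ValueError("no incidents recorded")
    for failed, recovered in incidents:
        if recovered < failed:
            raise ValueError("recovery cannot precede failure")
    total = sum((recovered - failed for failed, recovered in incidents),
                timedelta())
    return total / len(incidents)


# Hypothetical jam log for one morning: outages of 4, 10, and 1 minutes.
start = datetime(2025, 11, 28, 9, 0)
incidents = [
    (start, start + timedelta(minutes=4)),
    (start + timedelta(hours=1), start + timedelta(hours=1, minutes=10)),
    (start + timedelta(hours=2), start + timedelta(hours=2, minutes=1)),
]
mttr = mean_time_to_recovery(incidents)
print(mttr)  # 0:05:00
```

Tracking this figure per module, rather than only facility-wide, makes it easier to see which pieces of equipment recover slowly after a minor jam.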

Furthermore, the design of the physical rework area is just as important as the software that manages the exceptions. If the area where humans fix robotic errors is poorly organized or understaffed, it will quickly become a bottleneck that stalls the entire automated loop. Forward-thinking logistics managers are treating the exception-handling station as a core part of the robotic system, applying the same lean principles to manual rework that they apply to the automation itself. This involves using “pick-to-light” systems or augmented reality overlays to help human workers quickly identify what is wrong with an item and how to fix it. When the handoff between the robot and the human is seamless, the “exception” ceases to be a crisis and becomes just another standard part of the operational flow. This level of preparation ensures that when volumes double or triple during the holidays, the team is ready to handle the increased “noise” without losing sight of the overall production goals.

Bridging the Gap with Legacy Infrastructure

Solving Data Friction: System Incompatibility

The integration of modern robotics often hits a wall when robots are forced to communicate with legacy Warehouse Management Systems (WMS), common in “brownfield” sites, that rely on outdated batch-processing logic. While robots operate on real-time execution, these legacy systems were built for a different era, leading to friction in how orders are released and tracked. Many older WMS platforms were designed to drop thousands of orders at once for a human to pick over several hours, which conflicts with a robot’s need for a constant, balanced stream of tasks. When these two philosophies collide during peak season, the manual workarounds used to bridge the gap are usually the first things to break under the pressure. To solve this, developers are building “middleware” layers that act as translators, converting the slow, batch-oriented data of the legacy WMS into the high-frequency, real-time commands required by the robotic fleet.
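One simple way to picture such a middleware layer is as a function that accepts a large batch and hands it out in small waves. The Python sketch below is an assumption-laden illustration: the function name, order IDs, and fixed wave size are hypothetical, and a production layer would more likely pace releases on live feedback from the fleet than on a constant.

```python
from collections import deque
from typing import Iterable, Iterator, List


def release_in_waves(batch: Iterable[str], wave_size: int) -> Iterator[List[str]]:
    """Yield a batch of order IDs as small waves, preserving order."""
    if wave_size < 1:
        raise ValueError("wave_size must be at least 1")
    pending = deque(batch)
    while pending:
        count = min(wave_size, len(pending))
        yield [pending.popleft() for _ in range(count)]


# Hypothetical batch drop of seven orders from a legacy WMS.
batch_drop = [f"ORD-{n}" for n in range(7)]
waves = list(release_in_waves(batch_drop, wave_size=3))
print([len(wave) for wave in waves])  # [3, 3, 1]
```

Because the function is a generator, the caller decides when to ask for the next wave, which is where a check on fleet capacity would naturally sit.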

A major source of this friction is the “Item Master Data,” which acts as the digital blueprint for every product in the warehouse. While a human worker can look at a box and intuitively understand its size and weight despite a missing label, a robot is entirely dependent on the accuracy of its data. Inaccurate records regarding dimensions or weight can cause a robot to fail instantly, proving that data integrity is the actual foundation upon which all successful automation is built. During the peak season, when new “seasonal-only” SKUs are added to the inventory at a rapid pace, the Item Master often becomes riddled with errors. If a robot expects a three-pound item but receives a thirty-pound one, the resulting torque fault or safety stop can halt an entire line. Solving this requires automated “cubing” stations that scan and weigh every new product as it enters the building, feeding accurate dimensions directly into the robotic control system and eliminating the reliance on flawed historical data.
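The check a cubing station feeds can be as simple as comparing a measured weight against the Item Master record. In the sketch below, the record type, the SKU, and the 10 percent tolerance are all assumptions made for illustration, not values from any particular system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ItemRecord:
    sku: str
    weight_lb: float


def weight_mismatch(master: ItemRecord, measured_weight_lb: float,
                    tolerance: float = 0.10) -> bool:
    """True when the measured weight deviates from the master record
    by more than the given fraction (10% by default, an assumed value)."""
    if master.weight_lb <= 0:
        return True  # a non-positive master weight is itself a data error
    deviation = abs(measured_weight_lb - master.weight_lb) / master.weight_lb
    return deviation > tolerance


# Hypothetical seasonal SKU recorded at three pounds.
record = ItemRecord(sku="SEASONAL-001", weight_lb=3.0)
flag_heavy = weight_mismatch(record, 30.0)  # the thirty-pound surprise
flag_ok = weight_mismatch(record, 3.1)      # within tolerance
print(flag_heavy, flag_ok)  # True False
```

Flagging the record at receiving, before the item reaches a robot, turns a potential torque fault into a routine data correction.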

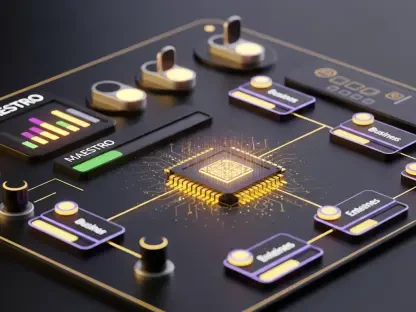

Integrating Real-Time Logic: The Future of Execution

As warehouses move toward more sophisticated automation, the role of the Warehouse Execution System (WES) has become paramount in bridging the gap between high-level planning and physical movement. A modern WES acts as the “brain” of the facility, continuously making small decisions to balance the workload across both human and robotic resources. During peak season, this system must be capable of dynamically rerouting orders based on real-time congestion and equipment availability. If a specific picking zone is becoming overcrowded, the WES should automatically divert work to a less congested area, preventing the gridlock that often plagues manual operations. This level of coordination is only possible when there is a low-latency connection between the physical robots and the central logic. Integrating these systems requires careful attention to API architecture and data streaming, ensuring that the “digital twin” of the warehouse remains an accurate, near-real-time reflection of the physical reality on the floor.
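The diversion rule described here can be reduced to a small decision function. This Python sketch is a deliberately simplified assumption: the zone names, loads, capacities, and the 80 percent threshold are hypothetical, and a real WES would weigh travel distance, equipment availability, and order priority as well.

```python
def choose_zone(zone_load: dict, zone_capacity: dict, preferred: str,
                threshold: float = 0.8) -> str:
    """Keep work in the preferred zone unless it is congested; otherwise
    divert to the least-utilized zone. Capacities are assumed positive."""
    def utilization(zone: str) -> float:
        return zone_load[zone] / zone_capacity[zone]

    if utilization(preferred) < threshold:
        return preferred
    return min(zone_load, key=utilization)


# Hypothetical snapshot of active tasks per picking zone.
load = {"A": 18, "B": 6, "C": 12}
capacity = {"A": 20, "B": 20, "C": 20}

diverted = choose_zone(load, capacity, preferred="A")  # A is at 90%
kept = choose_zone(load, capacity, preferred="C")      # C is at 60%
print(diverted, kept)  # B C
```

The quality of the decision depends entirely on how fresh the load snapshot is, which is why the low-latency link between the floor and the central logic matters so much.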

Ultimately, the goal of integration is to create a “frictionless” environment where data flows as freely as the physical goods. This means moving away from rigid, hard-coded integrations and toward more flexible, service-oriented architectures that can adapt to changing business needs. For instance, if a company decides to add a new type of robotic sorter three weeks before the peak season begins, the existing infrastructure should be able to absorb that new hardware with minimal reconfiguration. This “plug-and-play” capability is the holy grail of warehouse automation, but it requires a disciplined approach to data standards and communication protocols. Companies that invest in a robust digital foundation find that they can scale their operations much more effectively, using the peak season as an opportunity to test new configurations rather than just surviving the volume. In this context, the integration layer becomes a competitive advantage, allowing for a level of agility that was previously impossible in traditional logistics.

The industry is moving away from evaluating robotics based on average-case flow and toward evaluating them based on their performance under extreme stress. The benchmarks of success (reliability, exception handling, and system integration) are the primary tools for turning robotic experiments into dependable industrial capabilities. Organizations that prioritize data integrity and environmental hardening are better positioned to keep their systems stable even as order volumes double or triple. These companies can transition their automation from a novelty into a hardened, industrial-grade capability that withstands the most demanding times of the year. The lessons of peak season provide a clear roadmap for future deployments, emphasizing that the physical and digital infrastructure of a building is just as important as the robot itself. By mastering the integration of hardware, software, and human intervention, the logistics sector can show that robotics not only survives but thrives under the intense pressure of the global supply chain.