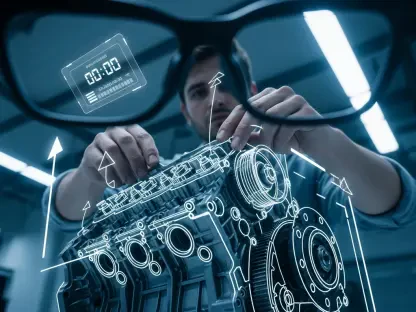

The traditional barrier between human imagination and digital execution is rapidly dissolving as software transitions from a passive set of tools into an active, conversational collaborator. Adobe is currently spearheading a major transformation in digital design by shifting from tool-centric software to intent-driven systems. This evolution centers on the integration of AI coworkers powered by the Firefly generative engine, which allows users to interact with complex software like Photoshop and Express through natural language. Instead of navigating dense menus or mastering intricate layers, creators can now provide instructions to the software as if they were speaking to a colleague. This transition aims to simplify the creative process, allowing the software to handle technical background tasks while the human user focuses on the core vision. By removing the need for specialized technical knowledge of every panel and shortcut, the system allows for a more fluid exchange of ideas where the software understands the ultimate goal.

Conversationalization of Professional Design Workflows

The cornerstone of this new approach is the conversationalization of creative workflows, where chat-based editing replaces manual adjustments. By utilizing these intelligent agents, users can bypass the friction of traditional design work, asking the AI to adjust lighting, modify layouts, or select objects automatically. This ecosystem is supported by a unified platform hosting over thirty specialized AI models, enabling a seamless transition between tasks such as photorealistic image generation and vector illustration. As these agents manage the underlying mechanics of a project, the user’s role evolves into that of a creative director who provides feedback and refines the output through ongoing dialogue. This move represents a departure from the historical requirement of pixel-perfect manual control, favoring a model where the software interprets intent and executes the heavy lifting. The result is a workflow that prioritizes conceptual thinking over the mechanical repetition of clicks and drags.

Beyond the surface-level convenience, this intent-driven model fundamentally changes how professionals interact with their digital canvas. When a designer requests a specific mood or a complex compositional change, the AI coworker does not merely apply a filter but reorganizes the internal architecture of the document. This capability ensures that the final product remains editable and structurally sound, preserving the non-destructive editing standards that professionals require for high-level production. Furthermore, the integration of multimodal AI means that a single natural language prompt can influence images, videos, and layouts simultaneously. This level of cross-functional intelligence allows for a more cohesive creative ecosystem where the boundaries between different media types become increasingly porous. By automating the technical execution of these adjustments, the system empowers creators to iterate faster, testing dozens of variations in the time it previously took to finalize a single design draft for review.

Enhancing Contextual Awareness with Project Moonlight

To further refine the collaboration between humans and machines, Adobe has introduced Project Moonlight, a private beta initiative designed to bring deeper contextual awareness to AI interactions. This project moves beyond generic content generation by learning a user’s specific aesthetic style and leveraging their existing asset libraries. By recognizing established patterns and personal preferences, the AI coworker ensures that every output remains consistent with the user’s brand or personal portfolio. This anticipatory approach helps the system transition from a reactive tool to a proactive collaborator that supports a project from initial concept to final delivery. The system analyzes the historical data of a user’s work to ensure that the generated elements do not feel like foreign additions but rather natural extensions of their established visual language. This specific focus on personalization addresses a critical gap in standard generative tools, which often produce results that lack a distinct or coherent artistic voice.

Beyond individual style recognition, this initiative focuses on cross-application synergy, allowing the AI to function cohesively across the entire Adobe ecosystem. This integration eliminates the need for users to jump between disparate tools or restart their creative process when moving from one medium to another. By maintaining brand integrity and visual consistency through user-specific data, Adobe addresses one of the primary hurdles of generative technology: the tendency to produce randomized or disconnected results. This strategic shift ensures that the AI’s contributions are not just fast, but also relevant and tailored to professional standards. The AI coworker can pull assets from a layout in InDesign to inform a video sequence in Premiere Pro, ensuring that the brand identity remains uncompromised across all channels. Such a unified intelligence layer acts as a bridge, synthesizing information from various creative disciplines into a single, coherent narrative that respects the unique constraints of each output format.

Orchestrating the Future of Creative Production

The move toward autonomous interfaces reflects a broader industry trend of platform unification, where the boundaries between graphic design, video editing, and document management begin to blur. Adobe positions its AI coworkers as assistants rather than replacements, focusing on the automation of repetitive, low-value tasks such as file preparation and routine technical edits. This strategy is intended to reduce mechanical friction, significantly shortening the iteration cycle and allowing designers to explore a wider range of creative possibilities in a fraction of the time. While these advancements democratize high-end tools for non-experts by lowering the barrier to entry, they also offer significant efficiency gains for seasoned professionals. The challenge lies in balancing this high-level automation with the granular control that professional environments demand. With multimodal AI that can influence several media types at once, the human-computer interface is being reimagined as something more intuitive.

The implementation of these intelligent systems suggests that the creative professional’s role is shifting from manual technician to high-level orchestrator of adaptive systems. Organizations that successfully integrate these AI coworkers into their internal workflows stand to realize significant reductions in production lead times. To capitalize on this shift, creators will need to focus on mastering prompt engineering and strategic direction rather than technical proficiency alone. Those who treat the AI as a junior partner can maintain a higher volume of output without sacrificing the unique brand characteristics that define their work. Looking forward, the emphasis moves toward building proprietary asset libraries that the AI can reference to ensure stylistic alignment. This strategic pivot keeps the human element as the primary driver of innovation, while the machine handles the labor-intensive mechanics of the process. Professionals who embrace this hybrid model will be well positioned in the new digital economy.