The promise of a revolutionary shift in personal computing began in earnest during 2024 with the arrival of dedicated Neural Processing Units, yet many users today find these specialized chips sitting dormant while traditional processors continue to do the heavy lifting of daily tasks. When industry leaders like Microsoft and Intel first introduced the “AI PC” concept, the narrative centered on a fundamental change in how silicon interacts with software, moving away from general-purpose computing toward a more specialized, intelligent architecture. These machines were marketed as a necessity for the modern professional, with the promise that a Neural Processing Unit, or NPU, would unlock capabilities previously reserved for massive cloud server farms. However, as the initial excitement has given way to long-term usage patterns, the discrepancy between marketing claims and the mundane reality of desktop performance has become difficult to ignore for technical analysts and casual consumers alike. The AI PC was supposed to mark the end of the traditional laptop era, but the transition to truly intelligent local hardware is proving to be much slower and more complicated than the glossy brochures suggested.

The Strategic Shift Toward Localized Intelligence

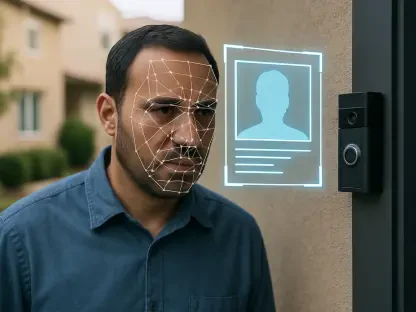

The core of the initial marketing campaign for AI-ready hardware was built upon the idea that local, on-device intelligence would liberate users from the latency and privacy concerns associated with cloud-based computing models. For several years, the most advanced artificial intelligence applications required data to be transmitted to remote servers, processed, and then sent back, a cycle that introduced significant delays and raised serious questions regarding data sovereignty. To address this, hardware manufacturers proposed integrating NPUs capable of more than 40 trillion operations per second (TOPS), a benchmark intended to turn every laptop into a private, autonomous processing powerhouse. The vision rested on three pillars: enhanced security by keeping sensitive data on the device, improved battery life by offloading tensor operations to more efficient silicon, and real-time features like instant translation that would be impractical over a standard internet connection. This paradigm shift was presented not as an incremental upgrade, but as a total reimagining of the personal computer’s role in a professional environment.

Building on this technological foundation, Microsoft introduced the Copilot+ suite as the definitive software ecosystem designed to justify the existence of these high-performance NPUs. This suite included flagship features intended to transform the user experience, such as a photographic memory function that indexed every interaction on the screen and advanced video conferencing tools that adjusted the user’s gaze in real time. The marketing emphasized that the NPU would serve as a dedicated brain, managing these persistent background tasks with surgical precision while leaving the Central Processing Unit and Graphics Processing Unit entirely free to handle intensive creative applications or gaming. This specialization was supposed to create a seamless synergy where the computer anticipated the user’s needs without ever sacrificing system responsiveness or draining the battery. By framing the NPU as a critical component for these next-generation experiences, manufacturers successfully created a new category of premium hardware, though the actual utility of these features remained largely dependent on a software ecosystem that was still in its earliest stages of development.

Analyzing the Persistent Hardware and Software Disconnect

Despite the impressive physical specifications of the silicon currently residing in millions of laptops, the practical experience of owning an AI PC has been defined by a striking lack of software that actually utilizes the specialized hardware. For the vast majority of users, the NPU remains almost entirely idle during a typical workday, frequently showing zero percent utilization in system monitoring tools even while the user interacts with various AI-driven tools. This stagnation is the result of a significant gap between hardware availability and software optimization, as developers have struggled to keep pace with the rapid release cycles of new chip architectures. Most popular consumer-grade AI applications, including local large language model runners and image generation tools, still default to using the GPU or CPU because those processors offer broader compatibility and established development frameworks. The specialized nature of the NPU means that code must be written specifically for its unique instruction set, a task that requires significant resources that many software houses have been hesitant to commit.
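
The fallback behavior described above can be illustrated with a minimal Python sketch of how an application might decide where to run a model. The backend labels, the `pick_backend` function, and the preference lists are all hypothetical and are not taken from any specific runtime; the point is only that an application picks from the code paths its developers actually wrote.

```python
# Hypothetical backend labels; real runtimes use their own identifiers.
def pick_backend(available, supported):
    """Return the first backend the app supports that the machine also has.

    `supported` is ordered by the developer's preference.
    """
    for backend in supported:
        if backend in available:
            return backend
    raise RuntimeError("no supported backend is available on this machine")


# A machine that ships with all three processors.
machine = {"cpu", "gpu", "npu"}

# An app whose developers only wrote and tested GPU and CPU code paths.
typical_app = ["gpu", "cpu"]
# An app that invested in an NPU-specific code path.
npu_aware_app = ["npu", "gpu", "cpu"]

print(pick_backend(machine, typical_app))    # gpu
print(pick_backend(machine, npu_aware_app))  # npu
```

In this sketch the NPU is present in both cases, but the first application never selects it because no NPU path exists in its preference list, which is one plausible reason a monitoring tool would report zero percent utilization.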

Furthermore, the economic reality for software developers presents a classic chicken-and-egg dilemma that has slowed the adoption of NPU-specific optimizations across the industry. While millions of NPU-equipped chips have been shipped since the movement began in 2024, the installed base of machines capable of meeting the high performance thresholds required for the most advanced features is still relatively small compared to the total global PC market. For a developer, the return on investment for optimizing an application for a specific NPU architecture is often insufficient when the same application can run “well enough” on a standard GPU that is present in nearly every modern high-end machine. This has led to a situation where the hardware is technically capable of revolutionary performance, but it lacks the necessary software bridges to translate that potential into tangible user benefits. As a result, many consumers find themselves paying a premium for specialized silicon that effectively functions as “dark silicon,” occupying die area inside the processor without contributing to the overall speed or capability of the system in any meaningful way.

Evaluating Technical Merit Against Market Claims

The narrative regarding the absolute necessity of the NPU was further complicated by the controversial rollout of the flagship “Recall” feature, which served as a case study in the tension between marketing requirements and technical reality. Originally marketed as a feature that strictly required the latest NPU hardware to function, Recall was intended to demonstrate the power of local indexing and semantic search. However, shortly after its announcement, the tech community discovered that the feature could be modified to run on older hardware that lacked an NPU entirely, albeit with higher power consumption. This revelation suggested that the strict hardware requirements touted by manufacturers were perhaps more about creating an artificial barrier to drive new laptop sales than they were about meeting a hard technical limitation. When combined with significant privacy vulnerabilities that allowed unauthorized access to the indexed data, the feature that was supposed to be the ultimate justification for the AI PC instead became a source of skepticism and a major public relations hurdle for the entire movement.

In addition to the software controversies, objective performance benchmarks have cast doubt on the efficiency gains promised during the initial NPU marketing blitz. One of the most common claims was that offloading background tasks, such as blurring a webcam background during a video call, would deliver a dramatic improvement in battery life compared to using a traditional GPU. However, empirical testing in controlled environments has shown that the actual power savings are often much smaller than the marketing would suggest. In many scenarios, offloading a persistent task to the NPU reduced power draw by only about 1.3 watts compared to using an integrated GPU. While any efficiency gain is welcome, such a marginal improvement is hardly noticeable to the end user and does not justify a significant price premium for most consumers. For a professional who already owns a capable machine with a modern processor, the data suggests that the NPU currently offers marginal returns that fall well short of the expectations set by industry hype.
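
To put a saving of about 1.3 watts in context, it helps to convert it into runtime. The short Python sketch below does that arithmetic. The 60 Wh battery and the 15 W and 25 W baseline draws are assumed values chosen for illustration, not measurements, and the function names are hypothetical; readers can substitute figures from their own machine.

```python
def runtime_hours(battery_wh, draw_watts):
    """Runtime in hours for a battery of `battery_wh` watt-hours at a constant draw."""
    if draw_watts <= 0:
        raise ValueError("draw_watts must be positive")
    return battery_wh / draw_watts


def extra_minutes(battery_wh, baseline_watts, saving_watts=1.3):
    """Extra runtime in minutes if total system draw drops by `saving_watts`."""
    before = runtime_hours(battery_wh, baseline_watts)
    after = runtime_hours(battery_wh, baseline_watts - saving_watts)
    return (after - before) * 60


# Assumed values, not measurements: a 60 Wh battery and two whole-system
# power draws that a laptop might plausibly show during a video call.
for baseline in (15.0, 25.0):
    gain = extra_minutes(60.0, baseline)
    print(f"{baseline:.0f} W baseline: about {gain:.0f} extra minutes")
```

Because the display, memory, and radios draw the same power either way, the same fixed saving buys less additional runtime the higher the total system draw is.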

Current Functional Realities and Long-Term Prospects

While it is clear that the NPU has not yet transformed the computing landscape as quickly as promised, it would be reductive to claim the hardware is entirely without merit in the current ecosystem. In its current state, the NPU is highly effective at managing a specific, narrow set of passive tasks that happen in the background of a standard Windows 11 environment. Features like Windows Studio Effects, which include automatic framing and eye-contact correction, run efficiently on the NPU without taxing the main system resources. Similarly, the hardware provides reliable support for real-time captions and semantic searching within the native photo and file management applications. These functions, while useful, are largely incremental improvements to existing workflows rather than the transformative, life-changing experiences that were promised. They represent a “nice-to-have” addition to a modern laptop, but they rarely constitute a primary reason for a user to upgrade from a machine that is only a few years old and still performs well in traditional tasks.

Looking forward, there is a legitimate argument for the NPU as a “future-proofing” component that may eventually find its true purpose as AI models become more compact and developer tools become more standardized. As software techniques like quantization allow complex models to run on less memory and lower-power hardware, the NPU may eventually become the primary engine for a new generation of local digital assistants and creative tools. We are seeing the very beginning of this transition as more developers experiment with cross-platform frameworks that aim to make NPU optimization easier and more accessible. However, the current consensus remains that we are still in the early stages of this technological evolution, and the “AI PC” label currently functions more as a placeholder for a future that has not yet fully arrived. For the time being, the NPU is a piece of silicon waiting for its “killer app,” a dormant asset that has potential but lacks the immediate utility required to live up to the intense marketing pressure of the last two years.
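
For readers unfamiliar with quantization, the following Python sketch shows the basic idea under simplifying assumptions: a symmetric, per-tensor mapping of floating-point weights to 8-bit integers, plus a rough estimate of how weight storage shrinks at lower bit widths. The function names and the 7-billion-parameter model size are illustrative assumptions, and the size estimate ignores overhead such as stored scale factors and activation memory.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


def model_size_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (weights only)."""
    return num_params * bits_per_weight / 8 / 1e9


weights = [0.5, -1.0, 0.25, 0.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
# Each restored value differs from the original by at most half a step.
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"worst round-trip error: {worst_error:.5f} (step size {scale:.5f})")

# Assumed example: a model with 7 billion parameters.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {model_size_gb(7e9, bits):.1f} GB")
```

The trade-off is a small loss of precision in exchange for a footprint that is a fraction of the original, which is what makes such models candidates for memory-constrained, low-power hardware like an NPU.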

Strategic Recommendations for Future Hardware Procurement

The AI PC movement has shown that the gap between marketing rhetoric and technical reality is wider than many consumers anticipated. During the initial wave of adoption, the emphasis on Neural Processing Units successfully created a new market segment, yet the actual performance gains for most users have remained confined to niche background tasks. The software ecosystem has lagged significantly behind hardware development, leaving powerful silicon underutilized in the majority of professional and creative workflows. Security concerns and the discovery that “exclusive” features could run on older hardware have further eroded the argument that these machines are a mandatory upgrade for the average user. The lesson of this period is that hardware specialization alone cannot drive a paradigm shift without a simultaneous and robust commitment from the broader software development community to optimize for these new architectures.

Moving forward, stakeholders and individual buyers should prioritize actual software compatibility and performance benchmarks over high-level marketing metrics like TOPS ratings when selecting new equipment. For most people, a high-quality GPU and a modern CPU still provide more immediate value and versatility than a dedicated NPU with limited software support. Procurement strategies should favor machines that offer a balanced approach to processing power, rather than those that carry a heavy price premium for AI-specific branding. Developers, for their part, can help close the utility gap by adopting cross-vendor frameworks that simplify NPU utilization. For the end user, the best course of action is to treat the inclusion of an NPU as a secondary benefit rather than a primary purchasing driver until the software market matures. By focusing on tangible productivity gains rather than speculative future features, consumers can avoid the pitfalls of the initial marketing hype and make more informed investments in their technological infrastructure.