The rapid evolution of artificial intelligence has moved beyond the traditional confines of desktop integrated development environments to redefine how engineers interact with code on the move. While the initial wave of AI-assisted development was primarily tethered to high-performance workstations and robust text editors like VS Code, the demand for mobile-first productivity tools has accelerated the release of specialized software development kits. This transition marks a critical turning point where the sophisticated agentic capabilities previously reserved for cloud-based IDEs are now accessible to third-party mobile applications. Developers are no longer restricted to using pre-built interfaces; instead, they can embed advanced reasoning, planning, and tool orchestration directly into custom React Native or native mobile environments. This shift allows for the creation of niche applications, such as specialized issue triaging tools or real-time repository auditors, that maintain the same level of intelligence as their desktop counterparts while offering the flexibility of a handheld device.

Establishing a Robust Backend Foundation

The Necessity of a Backend Proxy Architecture

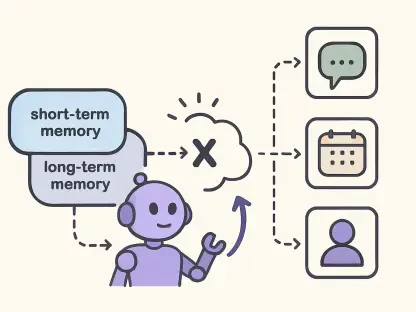

A fundamental challenge in bringing enterprise-grade AI to mobile platforms lies in the underlying technical requirements of the SDK, which typically demands a Node.js runtime and specialized CLI binaries. Because mobile operating systems like iOS and Android do not natively support the execution of these server-side components, engineers must adopt a backend-first architectural strategy to bridge the gap. By implementing a dedicated intermediary server, the mobile application can communicate via standard API calls while the server handles the JSON-RPC communication required by the SDK. This approach keeps the resource-intensive work of the AI agent runtime off the mobile client, preserving battery life and maintaining a responsive user interface. Furthermore, this proxy model simplifies integration by letting developers use familiar web technologies to manage the communication between the mobile front end and the AI backend.

The decision to utilize a server-side architecture is driven by more than just technical necessity; it serves as a critical layer for maintaining security and operational efficiency. Hosting the SDK on a centralized server allows developers to safeguard sensitive API credentials and enterprise tokens, ensuring that they are never exposed on the client side where they could be intercepted. Additionally, a centralized backend provides a single point for logging, monitoring, and debugging, which is essential when managing the non-deterministic nature of AI interactions. This centralized management also allows for the implementation of shared session states, where a developer could theoretically start a task on a mobile device and seamlessly transition to a desktop environment. By abstracting the SDK complexity away from the mobile device, organizations can deliver a consistent and secure experience across various hardware platforms without compromising the integrity of the underlying AI models or the sensitive codebase.
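The proxy boundary described above might be sketched as follows. This is a minimal illustration, not the SDK's actual interface: `AgentGateway`, the request and response shapes, and the `serverToken` parameter are all hypothetical names introduced here. The point is only that the credential is supplied on the server and never appears in what the mobile client receives.

```typescript
// Hypothetical request/response shapes for the mobile-facing API.
interface MobileRequest {
  userId: string;
  repo: string; // e.g. "owner/name"
  prompt: string;
}

interface MobileResponse {
  status: number;
  body: { summary?: string; error?: string };
}

// Hypothetical wrapper around the server-side SDK process.
interface AgentGateway {
  ask(token: string, repo: string, prompt: string): Promise<string>;
}

// The token lives on the server only; it is never copied into a MobileResponse.
async function handleMobileRequest(
  req: MobileRequest,
  gateway: AgentGateway,
  serverToken: string,
): Promise<MobileResponse> {
  if (!req.repo.includes("/") || req.prompt.trim() === "") {
    return { status: 400, body: { error: "repo and prompt are required" } };
  }
  try {
    const summary = await gateway.ask(serverToken, req.repo, req.prompt);
    return { status: 200, body: { summary } };
  } catch {
    // Details would be logged server-side; the client gets a generic message.
    return { status: 502, body: { error: "agent unavailable" } };
  }
}
```

In a real deployment this function would sit behind whatever HTTP framework the backend already uses, and the React Native app would call it with an ordinary `fetch` request.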

Streamlining the JSON-RPC Communication Pipeline

Effective integration requires a deep understanding of the communication protocol that governs the interaction between the host application and the AI agent. The SDK operates through a structured JSON-RPC interface, where commands are sent to a persistent binary process that manages the lifecycle of the AI session. In a mobile context, the backend server acts as the primary orchestrator, receiving requests from the React Native application and translating them into the specific format required by the CLI binary. This bidirectional pipeline must be highly optimized to minimize latency, as any significant delay in the “send and wait” cycle can lead to a disjointed user experience. Developers often implement long-polling or WebSocket connections to maintain a persistent link, ensuring that the mobile client is immediately notified when the AI agent has completed a complex planning or execution task. This level of synchronization is vital for maintaining the flow of developer productivity.
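To make the request and response matching concrete, here is a small sketch of a JSON-RPC 2.0 client. The message fields (`jsonrpc`, `id`, `method`, `params`, `result`, `error`) come from the JSON-RPC 2.0 specification. Everything else is an assumption for illustration: the one-message-per-line framing, the `write` callback standing in for the binary's input stream, and any method names passed to `call`.

```typescript
// JSON-RPC 2.0 message shapes (field names follow the JSON-RPC 2.0 spec).
interface RpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: unknown;
}

interface RpcResponse {
  jsonrpc: "2.0";
  id: number;
  result?: unknown;
  error?: { code: number; message: string };
}

type Pending = { resolve: (value: unknown) => void; reject: (err: Error) => void };

class RpcClient {
  private nextId = 1;
  private pending = new Map<number, Pending>();

  // `write` delivers one serialized message to the agent process (assumed framing).
  constructor(private write: (line: string) => void) {}

  call(method: string, params?: unknown): Promise<unknown> {
    const id = this.nextId++;
    const request: RpcRequest = { jsonrpc: "2.0", id, method, params };
    return new Promise((resolve, reject) => {
      this.pending.set(id, { resolve, reject });
      this.write(JSON.stringify(request));
    });
  }

  // Feed each message received from the agent process into this method.
  handleLine(line: string): void {
    const message = JSON.parse(line) as RpcResponse;
    const entry = this.pending.get(message.id);
    if (!entry) return; // unknown or already-settled id
    this.pending.delete(message.id);
    if (message.error) {
      entry.reject(new Error(`${message.error.code}: ${message.error.message}`));
    } else {
      entry.resolve(message.result);
    }
  }
}
```

Because responses are matched by `id` rather than by arrival order, the backend can keep several mobile requests in flight over one process and forward each result over a WebSocket as soon as it resolves.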

Beyond the basic transmission of data, managing the communication pipeline involves handling asynchronous task execution and error recovery. When a mobile user initiates a command, such as requesting a summary of a complex pull request, the backend must track the state of that request through each stage of completion. This includes handling timeouts, network interruptions, and rate-limiting responses from the underlying language models. By implementing robust queueing mechanisms and retry logic on the server, developers can ensure that the mobile application remains resilient even when the AI service encounters external friction. With communication stability handled on the server, the mobile interface can focus on presenting information clearly while the backend takes care of initializing services, creating sessions, and tearing them down. The result is a seamless experience that feels native to the mobile platform despite the heavy infrastructure supporting it behind the scenes.
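One common way to implement the retry logic mentioned above is exponential backoff with a per-attempt timeout. The sketch below is generic TypeScript and does not depend on the SDK; the option names and the delay values a caller picks are assumptions to be tuned per service.

```typescript
// Reject if `task` does not settle within `ms` milliseconds.
function withTimeout<T>(task: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`timed out after ${ms} ms`)), ms);
    task.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (err) => { clearTimeout(timer); reject(err); },
    );
  });
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

interface RetryOptions {
  attempts: number;    // total tries, including the first
  baseDelayMs: number; // delay before the second try; doubles after each failure
  timeoutMs: number;   // time limit for each individual attempt
}

async function retryWithBackoff<T>(
  operation: () => Promise<T>,
  { attempts, baseDelayMs, timeoutMs }: RetryOptions,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < attempts; attempt++) {
    try {
      return await withTimeout(operation(), timeoutMs);
    } catch (err) {
      lastError = err;
      if (attempt < attempts - 1) {
        await sleep(baseDelayMs * 2 ** attempt);
      }
    }
  }
  throw lastError;
}
```

A production version would usually retry only on errors known to be transient, such as rate limiting or network failures, and add random jitter to the delay so that many clients do not retry in lockstep.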

Maximizing Performance and Contextual Depth

Lifecycle Management and Resource Optimization

Strict adherence to session lifecycle management is the cornerstone of a stable mobile AI integration, particularly when a single backend with limited resources serves many mobile clients. Every interaction with the SDK follows a rigorous sequence that begins with service initialization and concludes with a definitive teardown process. Neglecting to explicitly call the disconnect and stop commands once a task is completed can lead to severe memory leaks and the accumulation of orphaned processes on the server. These leaks not only degrade the performance of the backend but also increase operational costs as unused resources continue to consume compute cycles. Therefore, developers must implement automated cleanup routines that trigger whenever a user closes the application or a session times out. This disciplined approach to resource management ensures that the system remains lean and capable of handling high volumes of concurrent requests from multiple mobile clients.
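The initialize, work, teardown sequence can be enforced structurally with nested `try`/`finally` blocks. In the sketch below, `AgentService` and `AgentSession` are hypothetical interfaces invented for illustration; the real SDK's method names and signatures may differ, although the text above does refer to disconnect and stop commands.

```typescript
// Hypothetical lifecycle surface; the real SDK's names and signatures may differ.
interface AgentSession {
  send(prompt: string): Promise<string>;
  disconnect(): Promise<void>;
}

interface AgentService {
  start(): Promise<void>;
  createSession(): Promise<AgentSession>;
  stop(): Promise<void>;
}

// Runs `work` inside a session and always tears everything down afterwards,
// whether `work` succeeds or throws.
async function withAgentSession<T>(
  service: AgentService,
  work: (session: AgentSession) => Promise<T>,
): Promise<T> {
  await service.start();
  try {
    const session = await service.createSession();
    try {
      return await work(session);
    } finally {
      await session.disconnect();
    }
  } finally {
    await service.stop();
  }
}
```

Routing every request through a helper like this means a handler cannot forget the teardown step. A session-timeout sweep would still be needed for the case where the mobile client disappears mid-request.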

Optimizing the performance of the AI integration also involves strategic caching and intelligent data handling to avoid redundant processing. In a mobile environment where data usage and speed are paramount, the application should avoid requesting a full AI analysis for every single interaction. By implementing a caching layer on the backend, developers can store previously generated summaries or task plans, serving them instantly if the underlying repository data has not changed. This not only improves the perceived speed of the application but also significantly reduces the number of API calls made to the AI service, leading to substantial cost savings. Furthermore, cost management can be refined by implementing “on-demand” intelligence, where the SDK is only engaged for complex reasoning tasks while simpler data retrieval is handled by standard REST APIs. This hybrid approach balances the power of the AI with the efficiency of traditional mobile development practices.
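A caching layer of this kind needs a cheap way to tell whether the repository has changed. One option, assumed here rather than taken from the SDK, is to key each cached summary on a revision identifier such as the head commit SHA. `SummaryCache` and its `generate` callback are hypothetical names.

```typescript
interface CacheEntry {
  revision: string; // e.g. the head commit SHA when the summary was generated
  summary: string;
}

class SummaryCache {
  private entries = new Map<string, CacheEntry>();

  // `generate` is the expensive call into the AI service.
  constructor(private generate: (repo: string) => Promise<string>) {}

  // Returns the cached summary when the repository revision is unchanged,
  // and regenerates it otherwise.
  async get(repo: string, currentRevision: string): Promise<string> {
    const cached = this.entries.get(repo);
    if (cached && cached.revision === currentRevision) {
      return cached.summary;
    }
    const summary = await this.generate(repo);
    this.entries.set(repo, { revision: currentRevision, summary });
    return summary;
  }
}
```

The revision lookup itself can go through a standard REST call, which fits the hybrid approach described above: a cheap request decides whether the expensive one is needed at all. An in-memory `Map` is only suitable for a single server process; a shared store would be needed once the backend scales out.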

Refining Contextual Accuracy Through Engineering

The quality of the insights provided by the integrated SDK depends heavily on the quality of the context supplied in the prompt. Rather than sending raw, unfiltered text to the AI agent, developers should curate the input using structured metadata and specific repository attributes. For instance, when a mobile app is triaging issues, providing the model with repository labels, contributor history, and recent commit activity allows the AI to generate much more relevant and actionable summaries. This structured approach reduces the risk of hallucinated or generic advice, turning the mobile tool into a genuine assistant that understands the specific nuances of the project. Effective prompt engineering essentially serves as a filter that focuses the AI’s reasoning capabilities on the specific problem at hand, making the resulting output far more valuable to the end user.
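As an illustration of curating input for the issue-triage case, the sketch below assembles a prompt from structured fields instead of raw text. The `IssueContext` shape, the five-commit cap, the truncation limit, and the instruction wording are all assumptions chosen for the example.

```typescript
interface IssueContext {
  title: string;
  body: string;
  labels: string[];
  recentCommits: string[];   // short commit subjects, newest first
  topContributors: string[];
}

// Builds a triage prompt from structured metadata, truncating long bodies
// so the request stays small on mobile-originated traffic.
function buildTriagePrompt(ctx: IssueContext, maxBodyChars = 2000): string {
  const body = ctx.body.length > maxBodyChars
    ? ctx.body.slice(0, maxBodyChars) + " [truncated]"
    : ctx.body;
  const list = (items: string[]) => (items.length ? items.join(", ") : "none");
  return [
    "You are triaging an issue for this repository.",
    `Title: ${ctx.title}`,
    `Labels: ${list(ctx.labels)}`,
    `Recent commits: ${list(ctx.recentCommits.slice(0, 5))}`,
    `Frequent contributors: ${list(ctx.topContributors)}`,
    "Issue body:",
    body,
    "Respond with a two-sentence summary and one suggested label.",
  ].join("\n");
}
```

Keeping the builder a pure function makes it easy to unit test and to adjust the template without touching the session code.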

Reliability in the intelligence layer also requires a strategy for graceful degradation in the event of service unavailability or authorization errors. In scenarios where the AI service returns an HTTP 403 response or fails to respond within a set window, the mobile application should revert to a manual, metadata-based summary. This ensures that the user is never left with a blank screen or a cryptic error message, maintaining the utility of the tool even in suboptimal conditions. By designing the application to provide a “baseline” level of information using standard data, and then layering the AI insights on top as they become available, developers create a robust system that prioritizes user experience. This resilience is essential for professional-grade tools, where developers rely on the application to perform critical tasks regardless of the underlying service status, and it keeps the mobile application a dependable part of the developer’s daily workflow.
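The fallback behavior can be sketched as a wrapper that tries the AI path within a time window and otherwise returns a summary built from plain metadata. `IssueMeta`, `baselineSummary`, and the `askAgent` callback are hypothetical names; how a 403 actually surfaces from the SDK is not specified in the text, so this sketch simply treats any rejection as a reason to fall back.

```typescript
interface IssueMeta {
  title: string;
  labels: string[];
  commentCount: number;
}

// Summary built from standard data only; no AI call involved.
function baselineSummary(meta: IssueMeta): string {
  const labels = meta.labels.length ? meta.labels.join(", ") : "no labels";
  return `${meta.title} (${labels}; ${meta.commentCount} comments)`;
}

async function summarizeWithFallback(
  meta: IssueMeta,
  askAgent: (meta: IssueMeta) => Promise<string>,
  timeoutMs: number,
): Promise<{ source: "ai" | "baseline"; text: string }> {
  try {
    const text = await new Promise<string>((resolve, reject) => {
      const timer = setTimeout(() => reject(new Error("timeout")), timeoutMs);
      askAgent(meta).then(
        (value) => { clearTimeout(timer); resolve(value); },
        (err) => { clearTimeout(timer); reject(err); },
      );
    });
    return { source: "ai", text };
  } catch {
    // Covers authorization failures (such as a 403), timeouts, and other errors.
    return { source: "baseline", text: baselineSummary(meta) };
  }
}
```

Returning the `source` alongside the text lets the mobile UI label a baseline summary as such and swap in the AI version later if a retry succeeds.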

Implementing Strategic Enhancements

A successful integration of the SDK into a mobile environment depends on a clear separation of concerns between the mobile client and the backend infrastructure. A server-side proxy handles the Node.js requirements, secures API credentials, and provides a stable environment for the CLI binary to operate. This architectural choice supports a smooth JSON-RPC communication flow and makes disciplined lifecycle management possible, which prevents memory leaks. Applying structured metadata in the prompt engineering process improves the contextual relevance of the AI’s output, and graceful degradation keeps the system functional during service interruptions, ensuring a consistent user experience. Moving forward, teams should consider extending these patterns to other specialized domains such as automated documentation or security auditing. The focus should remain on optimizing the cache layers to further reduce latency and operational costs while exploring new ways to use agentic reasoning in mobile-specific workflows.