The physical limitations of silicon are currently clashing with the boundless ambitions of software engineers who seek to create autonomous agents capable of digesting entire libraries in a single breath. As Large Language Models transition from simple text generators into sophisticated, autonomous agents, they are encountering a formidable physical barrier known as the Key-Value cache bottleneck. Every word an artificial intelligence “remembers” during a complex, multi-stage conversation consumes a specific slice of expensive high-speed memory. This phenomenon creates a performance tax that grows heavier with every sentence processed, forcing a difficult choice between model intelligence and operational cost.

Google Research has recently introduced TurboQuant to address this crisis, marking a pivotal shift in how the industry handles massive datasets. This software-only suite promises to break the cycle of hardware dependency by shrinking the memory footprint of AI context by a factor of six without sacrificing accuracy. By reimagining the fundamental mathematics of data storage, this breakthrough offers a path toward more sustainable and scalable intelligence. It represents a move away from the “brute force” scaling of hardware toward a more elegant, algorithmic solution that maximizes existing infrastructure.

The Exponential Memory Tax: Why Modern Artificial Intelligence Is Hitting a Wall

The rapid evolution of generative models has led to a surge in demand for High Bandwidth Memory, creating a scarcity that threatens to stifle innovation. Modern models require vast amounts of Video RAM to keep track of long-form reasoning and the intricate details of extended conversations. As these systems move toward becoming true digital assistants, the “memory tax” associated with maintaining context has become a significant financial and technical burden for developers. The industry has reached a point where the cost of hardware often outweighs the value of the insights generated by the AI itself.

This hardware crisis is particularly evident in the enterprise sector, where the need for privacy and speed necessitates local or dedicated cloud deployments. Every additional token stored in the context window increases the strain on the GPU, eventually leading to a hard limit where the system can no longer process new information without discarding old data. This limitation prevents models from reaching their full potential as research tools or data analysts. Without a fundamental change in how data is represented in memory, the dream of truly expansive, long-context AI will remain an expensive luxury for only the largest technology firms.

The emergence of TurboQuant signals a departure from the traditional reliance on increasing physical memory capacity. Instead of waiting for the next generation of more expensive chips, the focus has shifted toward mathematical compression that allows current hardware to perform tasks previously thought impossible. This approach not only lowers the entry barrier for smaller organizations but also reduces the carbon footprint associated with massive AI data centers. By optimizing the “digital cheat sheet” that models use to stay focused, the industry can finally move past the memory wall that has hindered progress for several development cycles.

Understanding the Key-Value Cache Bottleneck: The High Cost of Context

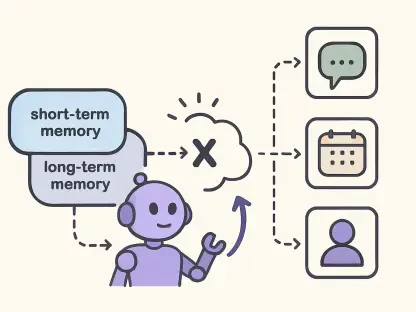

To maintain the thread of a long-form interaction, Large Language Models utilize a mechanism called the Key-Value cache, which essentially functions as a high-speed workspace within the VRAM. This cache stores the key and value vectors computed for every token encountered during a session, allowing the model to “pay attention” to relevant information from earlier in the text without recomputing it. However, as the context window expands to accommodate legal briefs, medical records, or multi-day chat histories, the cache grows linearly with every token added, in every layer of the model. This growth quickly exhausts the available memory on even the most advanced hardware, leading to slower response times and increased operational overhead.
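A back-of-the-envelope estimate makes the cost of context concrete. The sketch below uses the standard accounting for a transformer cache (one key tensor and one value tensor per layer); the model shape in `config` is a hypothetical example chosen for illustration, not a figure from the TurboQuant work.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes needed to cache keys and values for `seq_len` tokens of one sequence."""
    # Factor of 2: each layer stores one key vector and one value vector per token.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model shape (an assumption for illustration only), 16-bit values.
config = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for tokens in (8_000, 32_000, 128_000):
    gib = kv_cache_bytes(seq_len=tokens, **config) / 2**30
    print(f"{tokens:>7} tokens -> {gib:.2f} GiB")
```

Under these assumptions every token costs the same fixed number of bytes, so the cache size is proportional to the context length, and it is multiplied again by the number of sequences served at once.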

The shift toward “Agentic AI”—systems that act independently to solve complex problems—has further exposed the vulnerabilities of current GPU architectures. These agents often need to browse through hundreds of pages of documentation or analyze thousands of lines of code simultaneously. For enterprises, this creates a situation where cloud computing bills become astronomical due to the constant need for specialized memory clusters. The inability to store this information efficiently means that many advanced features are either too slow for practical use or too expensive to deploy at scale for a broad user base.

Furthermore, the bottleneck impacts the democratization of artificial intelligence by keeping the most capable models locked behind expensive cloud subscriptions. Locally hosted AI agents, which offer superior privacy and customization, are often crippled by the memory requirements of long-form context. Small businesses and individual researchers find themselves unable to run state-of-the-art models on consumer-grade hardware like a high-end workstation or a compact server. TurboQuant addresses this specific pain point by providing a way to compress the cache without losing the logical threads that define intelligent behavior.

The Mathematical Architecture: How TurboQuant Reinvents Vector Compression

The secret to the efficiency of TurboQuant lies in a sophisticated software-only architecture that fundamentally changes how data is compressed. Unlike traditional quantization methods, which often lead to “leaky” logic or increased model hallucinations, this system employs a two-stage approach designed to keep accuracy intact. The first pillar of this architecture is PolarQuant, a method that moves away from traditional Cartesian coordinates to map data vectors into polar coordinates, focusing on radius and angles. By applying a random rotation to the data, the system makes the distribution of these angles highly predictable and uniform.
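The idea of rotating first and then working with radii and angles can be sketched in a few lines. This is a simplified illustration, not the published PolarQuant algorithm: it converts consecutive coordinate pairs to polar form, keeps the radius at full precision, and snaps each angle to a fixed uniform grid. All function names and the example vector are hypothetical.

```python
import math
import random


def random_rotation(dim, rng):
    """Random orthogonal matrix built by Gram-Schmidt on Gaussian vectors."""
    rows = []
    while len(rows) < dim:
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for u in rows:  # remove the components along earlier rows
            dot = sum(a * b for a, b in zip(v, u))
            v = [a - dot * b for a, b in zip(v, u)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-9:
            rows.append([a / norm for a in v])
    return rows


def rotate(matrix, vec):
    return [sum(m * x for m, x in zip(row, vec)) for row in matrix]


def to_polar_pairs(vec):
    """Map consecutive coordinate pairs (x, y) to (radius, angle)."""
    return [(math.hypot(x, y), math.atan2(y, x)) for x, y in zip(vec[0::2], vec[1::2])]


def quantize_angle(theta, bits):
    """Snap an angle in [-pi, pi] to the centre of one of 2**bits equal bins."""
    levels = 2 ** bits
    step = 2 * math.pi / levels
    index = min(int((theta + math.pi) / step), levels - 1)
    return index, -math.pi + (index + 0.5) * step


rng = random.Random(0)
dim = 8
R = random_rotation(dim, rng)
key = [3.0, 0.1, -0.2, 0.05, 0.0, 0.3, -0.1, 0.02]  # hypothetical, skewed key vector
rotated = rotate(R, key)          # rotation spreads the energy across coordinates
polar = to_polar_pairs(rotated)   # (radius, angle) per coordinate pair
codes = [(r, *quantize_angle(theta, bits=4)) for r, theta in polar]
```

Because the grid is fixed in advance, the only thing stored per angle is its bin index; the rotation matrix is shared by every vector and can be regenerated from the seed.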

This geometric shift allows the model to compress data into a fixed grid, which significantly reduces the need for “normalization constants.” In typical compression schemes, these constants act as metadata overhead, telling the model how to decompress each block of data, but they also consume valuable memory themselves. By making the data distribution predictable, TurboQuant eliminates this metadata tax, allowing for 1-bit or 2-bit compression that is far more efficient than previous attempts. This ensures that the compressed information occupies the smallest possible space while remaining perfectly accessible to the model’s processing core.
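The size of the “metadata tax” is easy to work out. The sketch below compares a hypothetical block-wise scheme, in which every block of values carries its own scale and zero point, with a fixed-grid scheme that stores no per-block constants. The block size and constant widths are illustrative assumptions, not measurements of any particular system.

```python
def effective_bits_per_value(payload_bits, block_size, metadata_bits_per_block):
    """Average storage per value once per-block constants are included."""
    return payload_bits + metadata_bits_per_block / block_size


# Hypothetical block-wise scheme: 2-bit codes, blocks of 32 values,
# each block storing a 16-bit scale and a 16-bit zero point.
blockwise = effective_bits_per_value(2, 32, 16 + 16)

# Fixed-grid scheme with no per-block constants.
fixed_grid = effective_bits_per_value(2, 32, 0)

overhead = blockwise / fixed_grid - 1
print(f"block-wise: {blockwise} bits/value")   # block-wise: 3.0 bits/value
print(f"fixed grid: {fixed_grid} bits/value")  # fixed grid: 2.0 bits/value
print(f"metadata overhead: {overhead:.0%}")    # metadata overhead: 50%
```

The lower the bit width of the payload, the larger the share taken by the constants, which is why removing them matters most at 1-bit and 2-bit settings.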

To catch any potential remaining errors and maintain the highest levels of accuracy, the system employs a 1-bit Quantized Johnson-Lindenstrauss transform. This second stage acts as a mathematical safeguard, reducing residual values to a simple sign bit that preserves the core “meaning” of the data. This ensures that the attention scores—the mechanism the AI uses to focus on relevant information—remain statistically identical to the uncompressed, high-precision versions. This dual-layered approach creates a robust shield against the logic degradation that typically plagues low-bit systems, ensuring that the AI remains as sharp as ever despite the smaller footprint.
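A sign-bit sketch of this kind can be illustrated as follows. The code projects a key vector with a random Gaussian matrix, keeps only the sign of each projection plus the key's norm, and then estimates the inner product with a full-precision query. It is a minimal sketch of the general 1-bit Johnson-Lindenstrauss idea applied to an arbitrary vector; in the system described above the transform is applied to the residual left by the first stage. Function names, dimensions, and the number of projections are assumptions.

```python
import math
import random


def gaussian_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def project(matrix, vec):
    return [sum(m * x for m, x in zip(row, vec)) for row in matrix]


def sign_sketch(matrix, key):
    """Keep one sign bit per random projection, plus the key's norm."""
    bits = [1 if p >= 0 else -1 for p in project(matrix, key)]
    norm = math.sqrt(sum(x * x for x in key))
    return bits, norm


def estimate_dot(matrix, query, bits, key_norm):
    """Estimate <query, key> using only the key's sign bits and norm."""
    proj_q = project(matrix, query)  # the query stays in full precision
    m = len(bits)
    scale = math.sqrt(math.pi / 2) * key_norm / m
    return scale * sum(b * p for b, p in zip(bits, proj_q))


def unit(v):
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


rng = random.Random(0)
dim, m = 16, 4096  # hypothetical sizes; more projections give a tighter estimate
key = unit([rng.gauss(0.0, 1.0) for _ in range(dim)])
query = unit([rng.gauss(0.0, 1.0) for _ in range(dim)])
S = gaussian_matrix(m, dim, rng)

bits, key_norm = sign_sketch(S, key)
exact = sum(q * k for q, k in zip(query, key))
estimate = estimate_dot(S, query, bits, key_norm)
print(f"exact={exact:.3f} estimate={estimate:.3f}")
```

The `sqrt(pi / 2)` factor corrects for the information lost by keeping only signs, so the estimate is correct on average and its spread shrinks as the number of projections grows.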

Validating Performance: Accuracy and Speed at Enterprise Scale

The true test of any compression algorithm is whether the AI maintains its ability to understand and retrieve information from the data it has compressed. TurboQuant has undergone rigorous benchmarking using the “Needle-in-a-Haystack” test, which requires a model to find a specific fact buried deep within a 100,000-word context. In evaluations involving popular open-source models like Llama-3.1 and Mistral, the system achieved a perfect 100% recall score. This level of precision indicates that the compression process does not come at the cost of the model’s fundamental cognitive abilities or its capacity for deep retrieval.

Beyond just saving memory, the implementation of this technology acts as a massive performance accelerator for modern hardware. On high-end NVIDIA H100 GPUs, the 4-bit implementation of TurboQuant delivered an eightfold speed boost in computing the attention logits required for long-context tasks. This makes the system an ideal solution for real-time applications such as live data retrieval and semantic search, where speed is just as critical as storage capacity. The ability to process more information faster without needing more hardware represents a significant leap forward in operational efficiency for data-heavy industries.

The practical validation of these results has also been seen in high-dimensional semantic search tasks. When compared to existing state-of-the-art methods like Product Quantization, TurboQuant provided higher recall ratios and required virtually zero indexing time. This suggests that the algorithm is not merely a niche tool for researchers but a versatile engine capable of powering the next generation of enterprise search and retrieval systems. The combination of memory savings and raw speed makes it a compelling choice for organizations looking to scale their AI capabilities without incurring prohibitive costs or latency issues.
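For readers unfamiliar with the metric, recall in a search benchmark is usually measured as the share of the true nearest neighbours that the compressed index also returns. The helper below is a generic sketch of that calculation; the function name and the example IDs are hypothetical and do not come from the reported evaluation.

```python
def recall_at_k(retrieved_ids, true_ids, k):
    """Fraction of the true top-k neighbours found in the retrieved top-k."""
    truth = set(true_ids[:k])
    if not truth:
        raise ValueError("true_ids must contain at least one item")
    found = set(retrieved_ids[:k]) & truth
    return len(found) / len(truth)


# Hypothetical result lists: IDs returned by a compressed index versus
# IDs returned by an exact, uncompressed search.
approximate = [4, 9, 1, 7, 3]
exact = [4, 1, 2, 7, 8]
print(recall_at_k(approximate, exact, k=5))  # 0.6
```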

Strategic Framework: Implementing Efficiency in the Corporate Ecosystem

The release of TurboQuant provides a clear blueprint for organizations to maximize their current hardware investments while preparing for more advanced applications. By integrating this technology into production inference pipelines, companies can significantly reduce the number of GPUs required to serve complex, long-context tasks. This shift allows for a potential reduction in cloud compute costs by over 50%, enabling a more sustainable and profitable scaling of AI-driven services. For companies managing large fleets of servers, these marginal gains in memory efficiency translate into millions of dollars in annual savings.

One of the most transformative aspects of this framework is the democratization of local and edge deployment. The efficiency gains make it feasible to operate sophisticated models on consumer-grade hardware, effectively narrowing the gap between centralized cloud power and private, locally hosted intelligence. This is particularly valuable for sectors like healthcare, law, and finance, where data privacy is paramount and the ability to process long documents locally provides a distinct competitive advantage. It allows for the creation of private digital assistants that can handle sensitive information without ever sending data to an external server.

Furthermore, the “data-oblivious” nature of the algorithm means that it can be applied to existing, fine-tuned models without the need for expensive and time-consuming retraining. Enterprises can take their specialized models, which have been trained on proprietary data, and apply TurboQuant immediately to gain the benefits of extreme memory efficiency. This plug-and-play capability ensures that custom knowledge is retained while the model becomes significantly leaner and faster. It provides a strategic path forward for organizations that have already invested heavily in model training and want to optimize their deployment phase for the current market demands.

The introduction of TurboQuant shifts the focus of artificial intelligence development from a quest for raw power to structural efficiency. It demonstrates that sophisticated mathematical transforms can ease the physical limitations of high-bandwidth memory, allowing for much longer context windows on existing silicon. By providing a clear methodology for 1-bit and 4-bit compression, the research team offers a way to run high-performance models on hardware that was previously considered insufficient. This advancement encourages developers to move toward a future where intelligence is defined by the quality of memory management rather than the quantity of available VRAM.

Strategic decision-makers can now factor these compression techniques into their long-term infrastructure plans to mitigate the rising costs of high-end hardware. The ability to handle massive datasets with minimal memory overhead is likely to be a primary driver of adoption for the next generation of autonomous agents. Successful implementation of these tools could foster a more sustainable ecosystem in which both small developers and large enterprises compete on a more level playing field. The result would be an industry focused on building smarter, more resilient systems that make the most of every byte of available memory, keeping the benefits of advanced AI accessible to a global audience.