The rapid expansion of distributed computing systems has pushed traditional data processing methods to their limits, forcing a shift toward more structured and repeatable architectural frameworks. As engineering teams grapple with the volume and velocity of modern data, comprehensive practitioner manuals like Sundararajan’s work on design patterns offer a roadmap for navigating this complexity. Instead of reinventing the wheel with every new project, professionals are turning to established blueprints that cover the complete data lifecycle, from initial ingestion through transformation to final storage. This movement toward standardization is not merely a convenience but a requirement for maintaining the integrity of the pipelines that feed decision-making. By adopting a pattern-driven curriculum, organizations can bridge the gap between abstract concepts and the demands of production-grade implementation, so that every layer of the tech stack contributes to a cohesive and resilient whole.

Systematic Approaches to Pipeline Architecture

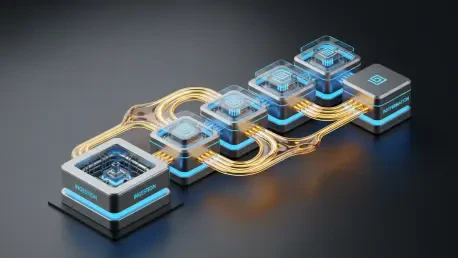

Building on the foundation of structured workflows, the emphasis in modern data engineering has pivoted from simple code execution to the deployment of repeatable architectural designs. This transition is characterized by a move away from fragile, custom-built scripts toward modular systems that can handle both batch and stream processing within one consistent design. In practice, this means engineers must master specific design patterns that dictate how data flows through the stages of a pipeline without introducing bottlenecks or single points of failure. Robust frameworks provide a high degree of consistency, which is essential when multiple teams work on a shared data platform. By focusing on the underlying patterns of data movement and storage, architects can create systems that are easier to maintain and more adaptable to changing business requirements. This structured approach reduces the technical debt that accumulates when individual developers apply disparate techniques to similar problems across different projects.

The implementation of these patterns often relies on a sophisticated technology stack, in which tools like Apache Spark play a central role in managing large-scale processing across distributed environments. Spark, for instance, makes it practical to run complex transformations that would be prohibitively slow or resource-intensive on a single machine. By leveraging the parallelization capabilities of such platforms, engineering teams can keep their pipelines scalable as data volumes grow. However, the value of these tools is only realized when they are integrated into a broader architectural strategy that prioritizes reliability and performance. This involves not just selecting the right software but applying specific patterns for error handling, data partitioning, and resource allocation. As the industry moves toward these standardized methodologies, the distinction between writing code and practicing data architecture becomes increasingly clear, with the architect focusing on the long-term stability and efficiency of the entire ecosystem rather than just the immediate task at hand.
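
To make the partitioning and error-handling patterns mentioned here more concrete, the following is a minimal sketch in plain Python. It does not use Spark's API, and the names `partition_by_key` and `run_with_retry` are hypothetical helpers introduced only for illustration. It assumes records are dictionaries and that failures are transient enough to be worth retrying.

```python
import zlib
from collections import defaultdict


def partition_by_key(records, key, num_partitions):
    """Group records into partitions using a stable hash of one field."""
    partitions = defaultdict(list)
    for record in records:
        # crc32 is stable across processes, unlike the built-in hash() for str.
        index = zlib.crc32(str(record[key]).encode("utf-8")) % num_partitions
        partitions[index].append(record)
    return dict(partitions)


def run_with_retry(task, partition, max_attempts=3):
    """Run task on one partition, retrying on any exception."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return task(partition)
        except Exception as exc:  # illustrative; real code would catch narrower errors
            last_error = exc
    raise RuntimeError(f"partition failed after {max_attempts} attempts") from last_error
```

Because the hash is stable, every record with the same key lands in the same partition, so per-key work can proceed independently on each partition. Retrying a single partition, rather than the whole job, is one common way to contain a failure.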

Integrating Real-Time Intelligence and Operational Excellence

Integrating real-time intelligence into modern data platforms requires a sophisticated orchestration layer and a commitment to low-latency communication through technologies like Kafka. This shift toward streaming data patterns allows businesses to respond to events as they occur, rather than waiting for nightly batch cycles to complete. However, managing such high-velocity environments introduces distinct challenges in state management and event ordering, which are best addressed through proven design patterns. To maintain order amid this complexity, orchestration tools like Airflow serve as the connective tissue, scheduling and monitoring workflows so that every dependency is met in the correct sequence. The combination of Kafka for ingestion and Airflow for orchestration creates a powerful framework for building responsive and reliable data products. This integration helps keep data consistent and accessible, whether it is processed in real time or through more traditional batch methods. Ultimately, the successful deployment of these technologies hinges on the engineer’s ability to apply structured patterns that harmonize disparate components into a unified system.
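
The idea of meeting every dependency in the correct sequence can be sketched with a small dependency graph. The example below uses Python's standard `graphlib` module rather than Airflow's API, and the task names are hypothetical. It illustrates the ordering principle an orchestrator relies on and is not a depiction of how any particular tool implements it.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "ingest_events": set(),
    "validate": {"ingest_events"},
    "transform": {"validate"},
    "load_warehouse": {"transform"},
    "refresh_dashboard": {"load_warehouse"},
}


def execution_order(deps):
    """Return tasks in an order where every dependency runs first."""
    try:
        return list(TopologicalSorter(deps).static_order())
    except CycleError as exc:
        # The second element of args holds the detected cycle.
        raise ValueError(f"circular dependency: {exc.args[1]}") from exc


print(execution_order(dependencies))
```

Rejecting circular dependencies up front matters as much as the ordering itself: a workflow whose tasks wait on each other can never be scheduled.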

Beyond the technical mechanics of data movement, a significant focus has emerged around DataOps and observability, particularly in high-stakes industries such as finance and healthcare. In these sectors, the cost of data inaccuracy or pipeline failure is exceptionally high, making telemetry and automated governance a non-negotiable part of system design. Modern engineering patterns incorporate these elements directly into the pipeline architecture, providing real-time insight into the health and performance of the data flow. This visibility is essential for maintaining regulatory compliance and preserving data integrity throughout the lifecycle. By embedding observability into the core of their designs, teams can identify and resolve issues before they affect downstream consumers or lead to costly errors. This shift toward operational excellence reflects a broader trend in the industry, where the focus is no longer just on moving data from point A to point B, but on doing so with a level of transparency and reliability that was difficult to achieve in legacy environments.
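
One way to picture telemetry embedded in the pipeline itself is a thin wrapper that records row counts and duration for every stage it runs. The sketch below is an assumption-laden illustration: `Telemetry`, `StageMetrics`, and `drop_missing_amounts` are hypothetical names, and a production system would ship these metrics to a monitoring backend rather than keep them in a list.

```python
import time
from dataclasses import dataclass, field


@dataclass
class StageMetrics:
    stage: str
    rows_in: int
    rows_out: int
    seconds: float


@dataclass
class Telemetry:
    """Collects one StageMetrics record per observed stage run."""
    history: list = field(default_factory=list)

    def observe(self, stage, func, rows):
        """Run func on rows and record input size, output size, and duration."""
        start = time.perf_counter()
        result = func(rows)
        elapsed = time.perf_counter() - start
        self.history.append(StageMetrics(stage, len(rows), len(result), elapsed))
        return result

    def stages_dropping_rows(self):
        """Names of stages whose output was smaller than their input."""
        return [m.stage for m in self.history if m.rows_out < m.rows_in]


def drop_missing_amounts(rows):
    """Hypothetical cleaning stage: discard rows without an amount."""
    return [r for r in rows if r.get("amount") is not None]
```

A check such as `stages_dropping_rows()` gives an early signal that a stage is silently discarding data, which is the kind of issue that is otherwise discovered only by a downstream consumer.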

Practical Strategies for Future Data Resilience

Translating architectural theory into deployable artifacts is perhaps the most critical skill for a modern data professional seeking to reduce common failure modes in long-running workflows. This process involves a close look at the ETL and ELT layers, where the choice between transforming data before it is loaded into the warehouse (ETL) or after (ELT) can have significant implications for system performance. By using actionable recipes and established patterns, teams can combine these layers into a coherent design that manages the inherent complexity of modern data platforms. The focus remains on production-grade stability, with every component of the pipeline designed for fault tolerance and scalability. This practical approach moves the conversation away from theoretical research and toward the implementation of systems that can survive real-world usage. For practitioners, the goal is a robust foundation that supports not only current analytics needs but also the growing demands of machine learning and large-scale data science initiatives across the organization.
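
The difference between the two layers can be shown on a toy scale. The sketch below uses an in-memory SQLite database as a stand-in for a warehouse, which is an assumption made purely for illustration; the table names and sample rows are hypothetical. Both functions produce the same totals, but they do the transformation in different places.

```python
import sqlite3

# Hypothetical raw input: amounts arrive as text, as they might from a file.
raw_rows = [("alice", "12.50"), ("bob", "7.25"), ("alice", "3.00")]


def etl(conn, rows):
    """ETL: transform in application code, then load the finished result."""
    totals = {}
    for customer, amount in rows:
        totals[customer] = totals.get(customer, 0.0) + float(amount)
    conn.execute("CREATE TABLE totals_etl (customer TEXT, total REAL)")
    conn.executemany("INSERT INTO totals_etl VALUES (?, ?)", sorted(totals.items()))


def elt(conn, rows):
    """ELT: load raw rows untouched, then transform with SQL inside the store."""
    conn.execute("CREATE TABLE raw_orders (customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?)", rows)
    conn.execute(
        "CREATE TABLE totals_elt AS "
        "SELECT customer, SUM(CAST(amount AS REAL)) AS total "
        "FROM raw_orders GROUP BY customer"
    )


conn = sqlite3.connect(":memory:")
etl(conn, raw_rows)
elt(conn, raw_rows)
```

In the ELT variant the raw rows remain available in `raw_orders`, so a new transformation can be written later without re-ingesting the source. In the ETL variant only the aggregated result is stored, which keeps the store smaller but fixes the transformation at load time.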

The adoption of standardized design patterns represents a pivotal step for those seeking to master modern data engineering. Professionals who prioritize these structured frameworks are better positioned to reduce pipeline degradation and improve the overall reliability of their systems. Integrating observability and DataOps practices directly into the architecture helps teams mitigate the risks associated with high-stakes data environments. Moving forward, the focus shifts toward refining these patterns to accommodate hybrid-cloud environments and decentralized data meshes. Organizations that invest in a pattern-driven culture can bridge the gap between rapid development and long-term operational stability. The emphasis remains on building modular, reusable components that can be adapted as new technologies emerge, rather than relying on static solutions. By documenting and implementing these repeatable designs, architects can keep their data platforms resilient, scalable, and capable of delivering consistent value in an increasingly data-dependent global economy.