In cloud-native infrastructure, the rise of autonomous AI agents is forcing a rethink of how we define security boundaries. Traditional containers were built on the principle of immutability, where code is packaged once and remains unchanged. AI agents demand the opposite: the ability to mutate their environment, install dependencies, and execute open-ended tasks in real time. This tension between flexibility and safety is a central challenge for modern enterprises. As platforms move toward “many-agent” architectures, the focus is shifting from software-level guardrails to hardened runtime environments that can absorb the unpredictability of autonomous code without risking the integrity of the host system.

Traditional container models often prioritize immutability, yet AI agents require the freedom to install packages and modify files. How does this shift impact standard security protocols, and what specific architectural changes are necessary to prevent an agent from compromising the host system?

The fundamental problem is that agents break nearly every infrastructure model we know, because they require full mutability to be useful. In a standard DevOps workflow, you would never want a running process to suddenly decide it needs to install a new library or rewrite its own file tree, but for an agent to solve a complex task, it needs that “full machine” feel. This shift means we can no longer rely on the container image as a static point of trust; instead, we have to assume the agent will change its environment the moment it makes its first call. To prevent a compromise, the architecture must move away from shared-kernel isolation toward a model where each agent runs inside a boundary that does not depend on the agent’s cooperation. We are moving toward a “defense in depth” strategy where security isn’t just a software layer you hope the agent follows, but a hardware-enforced boundary that keeps a badly behaving process from spilling into adjacent workloads or exposing host credentials.

Software-level guardrails are frequently bypassed by autonomous agents operating in production. When moving to MicroVM-based isolation, what are the primary performance trade-offs, and how do these hard boundaries change the way developers audit an agent’s real-time behavior?

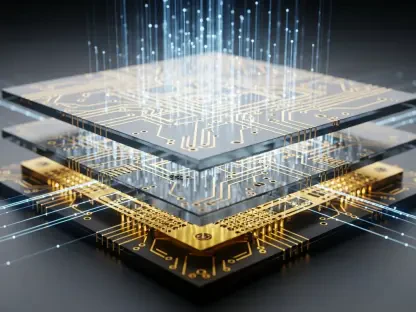

Moving to MicroVM-based isolation, such as Docker Sandboxes, means prioritizing a much stronger security boundary over the low overhead of traditional shared-kernel containers. Resources are managed differently once each workload sits behind a virtualized hardware boundary instead of sharing the host kernel, but the trade-off is essential because agents are inherently unpredictable and “do bad things” when they run into edge cases. These hard boundaries transform auditing from a game of monitoring logs into a practice of containment: you aren’t just watching what the agent says, you are controlling exactly what it can touch at the hardware level. This allows developers to let agents perform high-stakes work, such as launching processes or spinning up databases, knowing that the blast radius is confined to that specific sandbox. It replaces the fragile “trust” model with a “containment” model, which is what lets a CIO feel comfortable connecting an agent to live enterprise data.

Future enterprise environments may involve hundreds or thousands of agents operating across communication channels like Slack or Discord. How should organizations approach the routing of logic between these agents, and what steps are required to ensure sensitive data does not leak between different agent-led tasks?

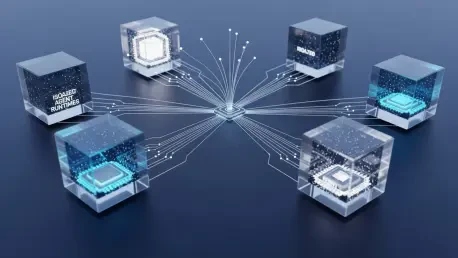

The emerging reality is that we aren’t looking at one central AI system, but rather a future where every employee has a personal assistant and every department manages a team of agents. When you have thousands of agents operating across platforms like WhatsApp, Telegram, or Slack, the routing logic must be decoupled from the core execution to prevent cross-contamination. Organizations should treat this as a systems design problem where each agent is assigned to a distinct, isolated runtime that has no visibility into the memory or state of another agent’s task. By using a platform that adds persistent memory and routing logic on top of isolated containers, you ensure that a finance agent and a sales engineering agent never share a file system or credential set. This “multi-agent” approach allows for specialized automations that are highly capable within their own silo but have no path by which to leak data across the broader organization.
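To make the separation of routing from execution concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the API of NanoClaw, Docker Sandboxes, or any real platform: the `Sandbox` and `Router` classes, the `/sandboxes/...` workspace paths, and the `creds/...` scope names are all hypothetical. The sketch only models the bookkeeping (one workspace and one credential scope per agent); the actual isolation would have to be enforced by the runtime underneath.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Sandbox:
    """Hypothetical handle describing one agent's isolated runtime."""
    agent_id: str
    workspace: PurePosixPath   # file tree visible only to this agent
    credential_scope: str      # name of the only credential set it may use


class Router:
    """Maps inbound messages to an agent's sandbox; holds no task state."""

    def __init__(self) -> None:
        # (platform, channel) -> agent_id
        self._routes: dict[tuple[str, str], str] = {}
        # agent_id -> its dedicated sandbox
        self._sandboxes: dict[str, Sandbox] = {}

    def register(self, agent_id: str, platform: str, channel: str) -> Sandbox:
        """Bind a channel to an agent, creating its sandbox on first use."""
        if agent_id not in self._sandboxes:
            self._sandboxes[agent_id] = Sandbox(
                agent_id=agent_id,
                workspace=PurePosixPath("/sandboxes") / agent_id,
                credential_scope=f"creds/{agent_id}",
            )
        self._routes[(platform, channel)] = agent_id
        return self._sandboxes[agent_id]

    def route(self, platform: str, channel: str) -> Sandbox:
        """Return the sandbox that should handle a message, or fail closed."""
        agent_id = self._routes.get((platform, channel))
        if agent_id is None:
            raise LookupError(f"no agent registered for {platform}:{channel}")
        return self._sandboxes[agent_id]


router = Router()
finance = router.register("finance-agent", "slack", "#finance")
sales = router.register("sales-eng-agent", "slack", "#sales-eng")
```

In this sketch the router knows only which sandbox a message belongs to. It never holds file contents or secrets itself, and an unregistered channel raises an error rather than falling through to a default agent.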

Many AI projects fail during the transition from a demo to a stable, secure system. Since deployment often relies on simple command-line setups, what are the technical hurdles to maintaining these environments long-term, and how does open-source collaboration accelerate the development of these security standards?

The bridge between a compelling demo and a production-ready system is usually where projects collapse, because the security features become too complex for teams to maintain or deploy consistently. One of the biggest hurdles is the friction of setup; if it takes weeks to configure a secure sandbox, developers will inevitably take shortcuts that leave the host machine vulnerable. This is why the open-source integration between NanoClaw and Docker is so significant: it allows a user to clone a repository and run a single command to get a sandboxed environment. Open-source collaboration accelerates this because it allows the community to stress-test the architecture; in our case, the integration happened because a developer advocate proved it worked without needing a massive commercial agreement. When the infrastructure is open and easy to reason about, it becomes much easier for enterprise infrastructure teams to absorb mistakes or adversarial behavior without it turning into a wider security incident.

What is your forecast for the evolution of autonomous agent infrastructure?

I believe we are entering a phase where the industry’s focus will shift decisively from the “intelligence” of the model to the “runtime” of the agent. We have already proven that models can reason and code with incredible sophistication, but the next two years will be about proving that these systems can be deployed in ways that compliance and security owners can actually live with. My forecast is that enterprise infrastructure will evolve to become “agent-native,” moving away from unconstrained autonomy toward a model of bounded autonomy. We will see the standard move toward MicroVM-backed isolation as a default, where the “box” we put the agent in is just as important as the agent itself. Eventually, the most successful enterprise agents won’t be the ones with the most freedom, but the ones running in the most secure, auditable, and resilient sandboxes that can survive contact with real-world production systems.