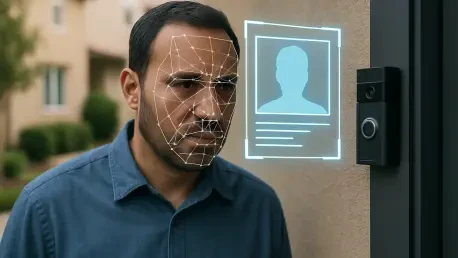

Modern residential security has transitioned from simple physical barriers to sophisticated digital ecosystems capable of identifying the specific individuals standing on a doorstep. The introduction of the “Familiar Faces” feature by Ring marks a pivotal moment in this technological evolution, where standard motion detection is replaced by advanced artificial intelligence capable of learning and cataloging human biometrics. This system allows homeowners to assign names to frequent visitors, such as family members or delivery personnel, effectively training the camera to recognize these individuals over time. By doing so, the software can significantly reduce notification fatigue by filtering out alerts for known residents while providing highly detailed descriptions of activity on the property. This shift toward intelligent recognition reflects a growing demand for convenience and hyper-specificity in smart home technology, yet it simultaneously pushes the boundaries of how much personal data a private device should be allowed to process in a residential setting.

The Evolution of Artificial Intelligence in Residential Security

The integration of facial recognition into household cameras represents a move toward proactive surveillance that goes far beyond the capabilities of legacy recording systems. Instead of merely capturing video when motion is detected, the new AI-driven framework analyzes facial features to distinguish between a stranger and a resident, creating a metadata layer over every recorded interaction. This capability is powered by complex algorithms that process biometric patterns, allowing the device to build a library of “familiar” profiles based on repeated exposure. For the consumer, this translates into a more seamless experience where the phone only chimes for unexpected visitors, but for the industry, it signifies a massive leap in data collection complexity. The sheer volume of biometric information processed daily by these devices has transformed the humble doorbell into a sophisticated data node that is constantly interpreting the physical world through a digital lens of identification.

Building on this technological foundation, the system provides a level of environmental awareness that was previously reserved for high-security commercial facilities. When a person approaches a home equipped with this feature, the AI cross-references the live feed with its stored database of recognized faces to determine the appropriate response. If a match is found, the system can be configured to ignore the event or send a low-priority notification, whereas an unrecognized face might trigger a high-priority alert or even a pre-recorded audio response. This level of customization is marketed as a way to reclaim one’s digital attention, ensuring that a parent is not interrupted by a notification every time a child returns from school. However, this functionality relies entirely on the continuous scanning and profiling of every person within the camera’s field of view, creating a persistent digital record of human movement that is inherently more intrusive than traditional video recording.
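The match-then-route logic described above can be illustrated with a minimal sketch. It is not Ring's implementation: the `Profile` type, the use of cosine similarity over face embeddings, the 0.8 threshold, and the priority labels are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from math import sqrt
from typing import Optional


@dataclass
class Profile:
    """A hypothetical stored 'familiar' profile."""
    name: str
    embedding: list[float]  # assumed numeric face embedding


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(face: list[float], profiles: list[Profile],
               threshold: float = 0.8) -> Optional[Profile]:
    """Return the most similar profile at or above the threshold, else None."""
    best, best_score = None, threshold
    for profile in profiles:
        score = cosine_similarity(face, profile.embedding)
        if score >= best_score:
            best, best_score = profile, score
    return best


def route_notification(face: list[float], profiles: list[Profile],
                       mute_familiar: bool = False) -> str:
    """Map a detected face to a notification priority (assumed labels)."""
    if best_match(face, profiles) is None:
        return "high_priority"  # unrecognized face
    return "ignore" if mute_familiar else "low_priority"


profiles = [Profile("child", [1.0, 0.0])]
print(route_notification([0.9, 0.1], profiles))  # close to the stored profile
print(route_notification([0.0, 1.0], profiles))  # unlike any stored profile
```

Note that even in this toy version, every face in view must be turned into an embedding and compared before the system can decide to ignore it, which is the source of the privacy concern raised above.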

Balancing Consumer Convenience with Public Privacy Concerns

While the utility of filtered notifications is undeniable for many users, the deployment of this technology has ignited a fierce debate regarding the erosion of public anonymity. Organizations like the Electronic Frontier Foundation have voiced significant concerns about the potential for large-scale surveillance networks to emerge from these private devices. The primary fear is that Amazon, through its Ring subsidiary, could eventually aggregate this biometric data into a searchable database of human movement across neighborhoods. Unlike a smartphone that only tracks its owner, a doorbell camera records everyone who passes by, including pedestrians on public sidewalks, neighbors walking their dogs, and delivery drivers performing their duties. These individuals have not opted into being scanned or profiled, yet their biometric data is nonetheless captured and analyzed by an AI system owned by a third-party corporation, raising fundamental questions about the right to privacy in public spaces.

This tension between the homeowner’s right to secure their property and the public’s right to go about their day without being biometrically tracked is becoming a central legal challenge. Neighbors and passersby often remain unaware that their faces are being added to a digital “familiar” or “unfamiliar” list stored in the cloud. Even if a homeowner uses the tool responsibly, the existence of the data itself creates a permanent digital footprint for anyone entering the camera’s range. Critics argue that this creates a “Big Brother” environment where the cumulative effect of thousands of private cameras results in a de facto surveillance state managed by a private entity. The ethical implications are particularly sharp when considering that the subjects of this surveillance have no way to opt out of the recording, as they are simply existing in a space that happens to be within the digital reach of a neighbor’s smart home security system.

Legislative Responses and Corporate Accountability Measures

In response to the rapid proliferation of biometric surveillance, several U.S. states have begun implementing rigorous privacy laws to protect residents from unauthorized data collection. As of 2026, at least 16 states have enacted legislation that requires explicit opt-in consent before sensitive biometric information can be harvested or processed. For example, the Massachusetts Data Privacy Act is currently under scrutiny for its potential to grant citizens the right to know exactly what data is being collected and the power to opt out of its use for commercial purposes or advertising. These legal frameworks are designed to put the brakes on the unregulated expansion of facial recognition, forcing companies to be more transparent about how they handle the sensitive digital identities of the public. This legislative trend suggests that the era of “permissionless” biometric tracking is coming to an end, as lawmakers catch up to the technological realities of modern surveillance.

To navigate this tightening regulatory landscape, Ring has introduced several internal safeguards intended to reassure both users and privacy advocates. The “Familiar Faces” feature is strictly an opt-in service, meaning it is not activated by default, and biometric data is programmed for automatic deletion within a window of 30 to 180 days. Furthermore, users can manually delete specific tags or recognized profiles at any time, providing a semblance of data sovereignty. The company has also explicitly stated that law enforcement agencies are not permitted to use this specific facial recognition tool to track individuals, aiming to distance itself from the controversies that surrounded its “Neighbors” portal in previous years. Despite these concessions, many experts argue that as long as the data is stored in the cloud, the risk of data breaches or future policy shifts remains a significant threat to individual privacy.
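As a rough illustration of how a time-boxed retention policy of this kind might work, the sketch below drops records older than a configured window. It assumes the 30-to-180-day range is a configurable retention period, which the company's description does not spell out; `FaceRecord`, `clamp_retention_days`, and `purge_expired` are hypothetical names and do not reflect Ring's actual software.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FaceRecord:
    """A hypothetical stored biometric record."""
    label: str
    captured_at: datetime


def clamp_retention_days(days: int, minimum: int = 30, maximum: int = 180) -> int:
    """Force a requested retention period into the assumed 30-180 day range."""
    return max(minimum, min(maximum, days))


def purge_expired(records: list[FaceRecord], now: datetime,
                  retention_days: int) -> list[FaceRecord]:
    """Return only the records captured within the retention window."""
    cutoff = now - timedelta(days=clamp_retention_days(retention_days))
    return [r for r in records if r.captured_at >= cutoff]


now = datetime(2026, 1, 1)
records = [
    FaceRecord("courier", now - timedelta(days=10)),
    FaceRecord("neighbor", now - timedelta(days=45)),
    FaceRecord("visitor", now - timedelta(days=200)),
]
kept = purge_expired(records, now, retention_days=30)
print([r.label for r in kept])
```

A policy like this limits how long data persists, but it does nothing about what is collected in the first place, which is why critics focus on cloud storage rather than on retention settings alone.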

Actionable Steps for Privacy-Conscious Homeowners

The decision to implement facial recognition technology in a residential setting is a complex choice that requires a careful weighing of security benefits against the potential for overreach. For those who prioritize maximum privacy while still desiring high-quality video surveillance, moving away from cloud-dependent systems toward local storage solutions is a logical next step. Security cameras that store footage on internal hard drives or local servers keep sensitive biometric data inside the home, preventing service providers from accessing or analyzing the video feed. This decentralized approach allows for robust property monitoring without contributing to a centralized database of human activity. Homeowners should regularly audit their device settings to ensure that features like facial recognition are active only when truly necessary and that data retention periods are set to the minimum required for their specific security needs.

Looking ahead, the responsibility for maintaining a balance between safety and privacy falls upon both the manufacturers and the end-users who deploy these tools. It is essential for consumers to stay informed about local privacy laws and to engage in open dialogue with neighbors regarding the placement and capabilities of their cameras. Transparency within communities helps mitigate the feeling of being watched and fosters an environment of mutual respect rather than suspicion. As AI becomes more integrated into daily life, the most effective path forward involves a combination of strict legislative oversight, corporate transparency, and informed consumer choices. By prioritizing local data processing and demanding clear opt-in protocols, the smart home industry can continue to innovate while respecting the fundamental human right to privacy in both private and public spheres.