The rapid spread of advanced algorithmic processing has reshaped the work of the modern intelligence professional, creating a tension between the speed of new technology and the fixed rigor of national security protocols. While mainstream discussions often lean toward sensationalist predictions of a fully automated workforce, the reality within the defense sector is grounded in deliberate, incremental integration. The shift is from manual vetting and data processing to a hybrid model in which artificial intelligence acts as a tireless digital assistant rather than a replacement for human intellect. The central challenge is ensuring that these systems can operate within the strict confines of classified networks without compromising the integrity of sensitive information. Consequently, today’s clearance holder stands at a crossroads, where mastering these new tools is as critical as the traditional commitment to secrecy and ethical conduct.

The Strategic Integration of Artificial Intelligence

Managed Adoption: Balancing Security with Innovation

The defense and intelligence communities have historically rejected the “move fast and break things” philosophy of the commercial technology sector, prioritizing instead strict risk mitigation and procedural stability. This caution is especially evident in how artificial intelligence is introduced into secure workspaces, where a software failure or data leak can threaten national security rather than merely cause financial loss. By 2026, adoption of these technologies has become a highly regulated process in which every algorithmic tool is scrutinized for vulnerabilities before it is deployed on a government network. This deliberate structure lets agencies capture the benefits of automation while ensuring that unvetted software never compromises the core tenets of the security clearance system: trust, reliability, and accountability. The goal is for technology to support the mission without introducing new vectors for espionage or accidental disclosure of classified data.

This measured pace also guards against the biases and hallucinations that can affect commercial language models and predictive algorithms. In a national security context, an incorrect output is not merely an inconvenience; it can lead to flawed intelligence assessments or compromised operations with real-world consequences. For this reason, agencies focus on narrow, task-specific models trained on curated, verified datasets rather than on the broad and often unreliable content of the public internet. This gives the professionals using these tools a firmer basis for trusting the results, because the underlying data has been vetted for accuracy and security. By controlling the full technological lifecycle, the defense community ensures that innovation remains an asset to the mission rather than a liability that undermines the foundation of the security clearance system.

Technical Constraints: Air-Gapped Systems and Data Integrity

A significant hurdle in deploying artificial intelligence in the classified sphere is the physical and logical isolation of high-security networks, commonly called air-gapping. These systems are intentionally disconnected from the public internet to prevent external cyberattacks and unauthorized data exfiltration, which complicates both training and running modern AI models. Unlike commercial applications that draw on vast cloud-based resources, intelligence-focused AI must run entirely within these restricted networks, which requires purpose-built hardware and software. This ensures that training data, which is often sensitive or classified, never leaves the secure perimeter. Isolation also rules out popular internet-connected generative tools, whose providers may retain submitted prompts and, in some cases, use them to train future models.

The integrity of the data being processed is equally important, requiring rigorous vetting of both the inputs and the outputs of any automated system. In the secure workspace, AI cannot be treated as a black box: the lineage of information must be traceable and verifiable at every stage. This focus on data provenance lets intelligence analysts justify their conclusions and confirm that AI-provided information has not been subject to external manipulation or foreign influence. The supporting infrastructure is designed to be resilient and auditable, so security officers can monitor how the AI interacts with classified files and who has access to the generated insights. This oversight is a non-negotiable component of the modern clearance framework, because it reconciles the efficiency of high-speed computing with the need for a secure and trustworthy information environment.

Enhancing Efficiency and Professional Roles

AI as a Force Multiplier: Data Management in High-Stakes Environments

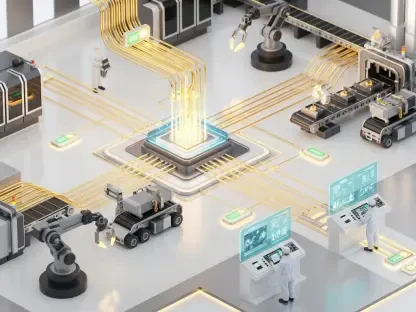

Today, artificial intelligence in the defense sector serves primarily as a force multiplier, helping cleared professionals manage the overwhelming volume of data generated by modern sensors. In fields such as geospatial analysis and signals intelligence, automated systems can perform initial data triage far faster than humans, identifying patterns and anomalies that might otherwise go unnoticed. This does not remove the human from the equation; it elevates the analyst’s role from data processor to validator who interprets these findings in a broader strategic context. By filtering out noise and highlighting the most relevant information, AI lets analysts spend their limited time and attention on the most critical aspects of the mission. This added capacity is essential in an era where the speed of information often determines the success or failure of national security operations.

AI-driven pattern recognition has also changed how intelligence agencies approach long-term monitoring of threats and regional instability. Automated tools can continuously scan massive datasets, such as satellite imagery or intercepted communications, and flag subtle changes that may indicate shifts in adversary behavior or emerging risks. This lets the workforce move from a reactive posture to an anticipatory one, giving decision-makers timely insights grounded in comprehensive data analysis. Combining the machine’s capacity for scale with the human ability to understand nuance produces a more robust intelligence product, better suited to the complexities of the modern geopolitical landscape. As these tools evolve, clearance holders are increasingly expected to be proficient with these analytical platforms so they can use every available technological advantage to protect national interests.

The Evolution of Administrative and Cyber Tasks: From Processing to Execution

Beyond intelligence gathering, artificial intelligence is reshaping the administrative and defensive structures that support the entire security clearance ecosystem. In cybersecurity, automated systems act as a constant first line of defense, identifying and neutralizing potential intrusions on classified networks before they cause significant damage. These tools analyze network traffic in real time, detecting the signatures of known attacks or behavior patterns associated with insider threats at a speed and scale that manual monitoring cannot match. Automating these defensive tasks raises the baseline of security and frees cyber professionals for proactive threat hunting and system hardening. This transition is vital for protecting the networks that house the nation’s most closely guarded secrets against increasingly sophisticated adversaries.

AI is also being used to streamline routine back-office functions that have traditionally burdened cleared personnel, from document classification to the management of personnel security files. Automated systems can now assist in the preliminary review of security clearance applications, flagging potential concerns for human adjudicators to investigate, which helps reduce the long-standing backlogs in the vetting process. Shifting the workforce away from clerical duties and toward mission-critical tasks can improve both morale and operational tempo. The professional in this era is defined less by the ability to manage paperwork than by the capacity to execute high-value missions with the support of intelligent tools. This reflects a broader effort across the national security sector to make the most of its human capital by offloading repetitive, low-value work to automated systems.

Accountability and Emerging Security Risks

The Critical Necessity of Human Judgment: Moral Reasoning and Accountability

Despite the impressive capabilities of modern artificial intelligence, human judgment remains the cornerstone of the national security enterprise and the security clearance process. AI systems, however advanced, cannot grasp the historical context, cultural nuances, or moral implications of high-stakes defense decisions. These decisions often carry life-or-death consequences, and a purely algorithmic output can fail to account for the complex ethical landscape of modern warfare or diplomacy. The “human in the loop” requirement is therefore a non-negotiable standard: every significant action an agency takes must be reviewed and authorized by a cleared professional who is legally and ethically accountable for the outcome. This accountability separates a reliable intelligence operation from a reckless technological experiment.

The clearance holder’s role has also evolved into that of an information validator, responsible for questioning and verifying the outputs of automated systems to catch bias or error. A professional must have the experience and critical thinking needed to recognize when an AI is producing a flawed assessment from incomplete or manipulated data. This requires a solid understanding of both the mission and the technical limitations of the tools in use, setting a new standard of competency for the workforce. Rigorous human oversight ensures that technology serves as a support structure rather than a replacement for professional expertise. The ultimate goal is a relationship in which AI provides the data-driven foundation and the human professional supplies the judgment and ethical framework needed to act on that data in line with national values.

Navigating the Dangers of Shadow AI: Compliance in the Era of Commercial Tools

The rise of commercially available artificial intelligence has introduced a significant new risk for the security clearance community: “shadow AI,” in which individuals use unapproved public tools to assist with sensitive work. The practice often stems from a well-intentioned desire for efficiency, but it poses a serious threat to national security by exposing government data to commercial third parties and, potentially, to foreign intelligence services. When a clearance holder enters sensitive or classified information into a public language model, that data may be stored on external servers and used to train future versions of the tool, placing it permanently outside government control. Such actions violate security protocols and can lead to a Statement of Reasons or the revocation of a security clearance. This danger underscores why professionals must adhere strictly to the approved, secure tools provided by their agencies.

Staying compliant in this fast-changing environment requires a proactive effort to understand the legal and security implications of every tool used in the workplace. The risk to a professional’s career is no longer limited to traditional espionage or criminal behavior; it also includes accidental or negligent misuse of emerging technologies that compromises data integrity. As agencies deploy secure, internal versions of these AI tools, each individual is responsible for operating within the authorized framework and resisting the temptation of faster, unvetted commercial alternatives. Success in the modern national security sector increasingly depends on balancing innovation with discipline: the most effective professionals use authorized AI to enhance their work while remaining steadfast in their commitment to information security. This sense of responsibility ensures that AI integration strengthens the nation’s defense rather than creating new vulnerabilities.

Strategic Adaptations for the Evolving Workforce

The successful integration of artificial intelligence into the national security sector depends on human-centric innovation and rigorous adherence to established safety protocols. Professionals who thrive in this environment recognize that their value has shifted from simple data processing to complex validation and strategic interpretation, which demands a continuing commitment to technical literacy. By embracing authorized tools and avoiding unauthorized commercial platforms, the workforce maintains the trust and accountability required to handle the nation’s most sensitive information. This evolution shows that technology, applied responsibly, enhances human capability rather than replacing the nuanced judgment of experienced personnel.

Moving forward, the priority is a workforce that is both technologically proficient and deeply committed to the ethical standards of the defense community. Practical strategies center on internal, secure AI ecosystems that prioritize data provenance and auditable outputs, so that every technological advance is matched by a corresponding increase in oversight and transparency. Recent progress suggests that the future of security clearances lies not in replacing humans with machines, but in a synergy between the two, in which each plays a vital role in protecting national interests.