In the rapidly evolving landscape of industrial automation, the integration of high-level artificial intelligence with physical machinery represents the next great frontier for global manufacturing. Laurent Giraid, a distinguished technologist specializing in machine learning and the ethical dimensions of automation, provides an expert perspective on Google’s recent strategic move to absorb Intrinsic into its core operations. This shift signals a transition from experimental research to a standardized industrial mandate, positioning advanced robotics as a scalable utility rather than a niche luxury. Giraid explores the implications of this “Android of robotics” philosophy, the technical synergy between vision models like Gemini and factory hardware, and how the democratization of complex coding will redefine the production economics for manufacturers worldwide.

Moving from an experimental moonshot division into a core corporate structure marks a significant strategic shift. What operational benefits does this closer alignment provide, and how does integrating specialized robotics software with existing cloud infrastructure change the way teams collaborate on large-scale AI projects?

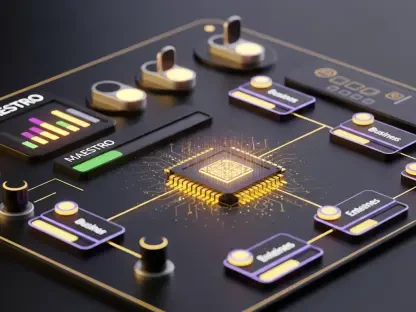

The transition from Alphabet’s X division to Google’s core structure turns a “moonshot” into a corporate mandate, providing the stability and resources needed for global scaling. Operationally, this allows Intrinsic to tap directly into the computational power of Google Cloud and the research breakthroughs of DeepMind, creating a unified tech stack where there was previously a fragmented one. In practice, the collaboration runs in three steps: first, teams use cloud-native environments to ingest large datasets from various sensors; second, they apply Gemini’s reasoning models to these datasets to simulate complex movements; and finally, they deploy the refined models back to the factory floor via Intrinsic’s software. This integration eliminates the friction between “the lab” and “the line,” allowing software engineers and hardware technicians to iterate on robotic behaviors in a shared, synchronized digital environment.

Industrial programming often requires hundreds of hours of manual coding by specialist engineers. How does a hardware-agnostic platform simplify this workflow for smaller manufacturers, and what specific steps are involved in deploying complex robotic applications without an army of engineers?

The burden of traditional programming is the primary reason many smaller manufacturers remain stuck in the manual era, as they simply cannot afford the thousands of lines of code required for even basic tasks. A hardware-agnostic platform like Flowstate changes this by acting as an operating layer—much like Android did for mobile phones—allowing a single application to run across different brands of robotic arms. To deploy an application, a user identifies the task through a web-based interface, selects the desired robotic hardware, and uses pre-built blocks of logic rather than writing raw code. This process can reduce the time spent on manual configuration from hundreds of hours down to a fraction of that, enabling a small team to manage a fleet of robots that would typically require a specialized engineering department.
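To make the "operating layer" idea concrete, here is a minimal sketch of a hardware-agnostic interface in Python. It is not Flowstate's API: `RobotArm`, `VendorAArm`, `VendorBArm`, `Pose`, `pick_and_place`, and the command strings are all hypothetical names invented for illustration. The point is only that the application is written once against a neutral interface, while each brand of arm gets its own thin adapter.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Pose:
    """Target position in metres, in a shared workcell frame (hypothetical)."""
    x: float
    y: float
    z: float


class RobotArm(Protocol):
    """Hypothetical vendor-neutral interface the application is written against."""

    def move_to(self, pose: Pose) -> str: ...

    def set_gripper(self, closed: bool) -> str: ...


class VendorAArm:
    """Adapter for an imaginary vendor whose controller expects millimetres."""

    def move_to(self, pose: Pose) -> str:
        return f"A:MOVE {pose.x * 1000:.0f} {pose.y * 1000:.0f} {pose.z * 1000:.0f}"

    def set_gripper(self, closed: bool) -> str:
        return "A:GRIP 1" if closed else "A:GRIP 0"


class VendorBArm:
    """Adapter for an imaginary vendor whose controller expects metres."""

    def move_to(self, pose: Pose) -> str:
        return f"B:goto({pose.x:.3f},{pose.y:.3f},{pose.z:.3f})"

    def set_gripper(self, closed: bool) -> str:
        return "B:close()" if closed else "B:open()"


def pick_and_place(arm: RobotArm, pick: Pose, place: Pose) -> list[str]:
    """One application, built from reusable steps, that runs on any adapter."""
    return [
        arm.move_to(pick),
        arm.set_gripper(closed=True),
        arm.move_to(place),
        arm.set_gripper(closed=False),
    ]
```

Calling `pick_and_place` with `VendorAArm()` or `VendorBArm()` yields vendor-specific commands from the same application logic, which is the sense in which a single application can run across different brands of arm.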

General-purpose robots are increasingly being paired with advanced reasoning and perception models like Gemini. When vision models are integrated into manufacturing hardware, how does this improve real-time decision-making on the factory floor?

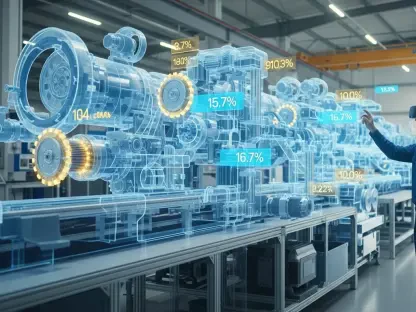

Integrating vision models like Gemini directly into manufacturing hardware gives robots “eyes” that can actually understand context rather than just following a rigid, pre-programmed path. In a dynamic factory environment, this synergy allows a robot to detect if a part is slightly misaligned or if a human worker has entered its workspace, adjusting its trajectory in milliseconds to maintain safety and precision. For example, in electronics assembly, a vision-equipped robot can identify and sort microscopic components of different shapes and sizes on the fly, a task that previously required fixed jigs and expensive custom sensors. This leap in perception means that robots can now handle “low-volume, high-mix” production cycles, where the items being manufactured change frequently throughout a single shift.
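The decision logic described above can be sketched as a single control-cycle function. This is an illustrative assumption, not how Gemini or Intrinsic's software actually exposes perception results: the `Observation` fields, the `next_action` function, and the 5 mm tolerance are all hypothetical, and a sketch like this is no substitute for a certified safety system.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Observation:
    """What a perception model might report for one camera frame (hypothetical)."""
    part_offset_mm: Tuple[float, float]  # measured (dx, dy) of the part from its nominal spot
    human_in_workspace: bool


def next_action(
    nominal_xy_mm: Tuple[float, float],
    obs: Observation,
    max_offset_mm: float = 5.0,  # assumed tolerance, chosen only for illustration
) -> Tuple[str, Optional[Tuple[float, float]]]:
    """Decide what the robot does this cycle: stop, reject the part, or move."""
    # A person in the workspace overrides everything else.
    if obs.human_in_workspace:
        return ("stop", None)

    dx, dy = obs.part_offset_mm
    # A part that is too far out of place is set aside rather than forced.
    if max(abs(dx), abs(dy)) > max_offset_mm:
        return ("reject", None)

    # Otherwise shift the pre-programmed target by the measured misalignment.
    x, y = nominal_xy_mm
    return ("move", (x + dx, y + dy))
```

The contrast with a rigid, pre-programmed path is in the last branch: the target is corrected from what the camera sees on each cycle instead of being fixed in advance by a jig.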

Strategic partnerships are now focusing on developing intelligent robots for electronics manufacturing and full factory automation. What are the primary technical hurdles when scaling these solutions for a global production giant, and what metrics should enterprise leaders track to evaluate the success of such high-stakes automation?

When scaling for a giant like Foxconn, the primary technical hurdle is ensuring “full factory automation” remains reliable across thousands of disparate machines in different geographical locations. You have to overcome the latency issues of cloud-to-edge communication and ensure that AI models remain performant in the messy, unpredictable reality of a high-speed production line. Enterprise leaders should move beyond simple ROI and track “Operational Transformation” metrics, specifically looking at the reduction in downtime caused by reprogramming and the speed at which a line can be repurposed for a new product. Success in these high-stakes environments is measured by the fluidity of the system—how quickly the AI learns to correct its own errors without human intervention.
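As a rough sketch of how the two metrics named above could be computed from changeover logs, consider the following Python. The `Changeover` record and its field names are assumptions made for illustration; a real plant would define downtime and "full rate" according to its own logging.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class Changeover:
    """One line changeover to a new product (hypothetical log record)."""
    reprogramming_downtime_h: float  # hours the line was stopped for reprogramming
    hours_to_full_rate: float        # hours from changeover start to target throughput


def downtime_reduction(before: Sequence[Changeover], after: Sequence[Changeover]) -> float:
    """Fractional reduction in mean reprogramming downtime per changeover."""
    base = mean(c.reprogramming_downtime_h for c in before)
    new = mean(c.reprogramming_downtime_h for c in after)
    return (base - new) / base


def mean_repurposing_time(changeovers: Sequence[Changeover]) -> float:
    """Mean hours needed to repurpose the line for a new product."""
    return mean(c.hours_to_full_rate for c in changeovers)
```

Tracking both numbers per site, before and after deployment, gives leaders a comparison that a single ROI figure would hide.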

The acquisition of commercial arms of open-source robotics foundations suggests a move toward a universal operating layer for machines. How does this shift affect the broader development community, and what are the practical implications for manufacturers currently relying on fragmented, proprietary hardware systems?

With the acquisition of Open Source Robotics Corporation, the commercial arm of the Open Source Robotics Foundation behind ROS, Google is essentially positioning itself as the steward of the industry’s most common language. For the broader development community, this provides a centralized, well-funded roadmap for tools that were previously maintained by a fragmented non-profit structure, though it does raise questions about future proprietary lock-in. For manufacturers, the practical implication is a much-needed escape from “vendor jail”: they are no longer forced to use a specific software suite just because they bought a specific brand of robotic arm. This shift allows for a “plug-and-play” ecosystem where a factory can mix and match the best hardware available, knowing the underlying AI operating layer will keep everything synchronized.

What is your forecast for the industrial robotics AI market?

The industrial robotics AI market is on the cusp of explosive growth, with projections suggesting a value of roughly $370 billion by 2040 as general-purpose robots become a standard commodity. We are moving away from robots that perform a single, repetitive task toward machines that possess “Physical AI”: the ability to learn and adapt to their surroundings through observation and reasoning. I expect that within the next decade, the “Android of robotics” model will lead to a marketplace of specialized AI skills that manufacturers can download and deploy instantly. This will trigger a massive shift in global production economics, making advanced, high-speed manufacturing accessible to mid-sized companies and potentially revitalizing local production in regions that previously relied on offshore manual labor.