The municipal healthcare landscape in New York City is at a crossroads as a fiscal proposal threatens to fundamentally alter the standard of patient care within public hospitals. Mitchell Katz, the CEO of NYC Health and Hospitals, recently introduced a controversial initiative to use vision-language artificial intelligence models for diagnostic duties traditionally reserved for board-certified radiologists. The administration contends that routine procedures, such as mammography and basic chest screenings, can be handled efficiently by algorithms that provide immediate results while drastically reducing operational costs. By positioning AI as the primary diagnostic tool and involving human specialists only when anomalies are flagged, the city hopes to close significant budgetary gaps. However, this transition from human expertise to automated analysis has triggered intense pushback from medical professionals who view the plan as a reckless gamble with public safety. The tension between administrative cost-efficiency and clinical safety sits at the center of this debate over medical automation.

Clinical Integrity: Risks of Removing Human Oversight

Radiologists like Mohammed Suhail have emerged as vocal critics of this automated transition, warning that hospital administrators are falling prey to the aggressive marketing of tech developers. The concern is rooted in the belief that removing the expert human layer from the diagnostic process will inevitably lead to missed diagnoses and catastrophic patient outcomes. Unlike human doctors, who integrate clinical history, subtle visual cues, and years of clinical experience, current AI systems function as pattern recognizers that lack true medical intuition. Critics argue that the proposal prioritizes financial metrics over the biological complexities of individual patients, potentially resulting in delayed treatments for life-threatening conditions. The push for efficiency appears to overlook the fact that a radiologist’s role involves more than examining pixels; it requires a holistic understanding of the human body that machines cannot yet replicate. If these systems fail to recognize rare but critical conditions, the burden of those errors will fall disproportionately on the vulnerable populations who rely on city hospitals.

Beyond the immediate concerns for patient safety, the professional community emphasizes the erosion of the physician-patient relationship and the loss of institutional knowledge. If the city proceeds with this replacement strategy, the pathway for training new radiologists could be permanently disrupted, as entry-level diagnostic tasks are offloaded to software. Furthermore, the legal and ethical implications of an AI-driven misdiagnosis remain largely unresolved, leaving patients with little recourse when an algorithm fails to detect a malignancy. Hospital administrators view the software as a panacea for economic optimization, but practitioners describe it as a disaster waiting to happen that could undermine the credibility of the entire public health infrastructure. The debate highlights a fundamental disconnect between those who manage hospital budgets and those who deliver bedside care, suggesting that the drive for technological integration may be outpacing the necessary ethical and safety frameworks. Decisions made today regarding automation will define the quality of public healthcare for the next generation.

Technical Limitations: The Reality of the AI Mirage

A significant technical hurdle identified by researchers involves the reliability of vision-language models, which often suffer from what has been termed an AI mirage. A recent study conducted at Stanford University revealed that even the most advanced medical AI models frequently generate highly logical and fluent reports without actually processing the visual data of the scan. This phenomenon, known as epistemic mimicry, occurs when a model simulates a reasoning process that looks credible to a layperson but is entirely detached from the patient’s actual medical imagery. Instead of admitting that it cannot interpret a specific detail, the software may hallucinate a finding based on statistical likelihoods rather than physical evidence. This behavior is particularly dangerous in a clinical setting, where diagnostic accuracy is paramount. Because these hallucinations are so convincing, even an overseeing physician might find it difficult to distinguish a valid report from a fabricated one, which invites misplaced confidence.

These sophisticated hallucinations present a unique challenge for safety protocols because the outputs are often indistinguishable from those written by human experts. The Stanford findings suggest that current vision-language models are functionally unable to perform the nuanced perceptual tasks required for high-stakes medical diagnosis. While the technology can generate text that sounds authoritative and medically accurate, the underlying mechanism lacks the actual sight required to interpret a complex X-ray or MRI. This technical limitation directly contradicts the narrative of efficiency promoted by city officials who believe the technology is ready for deployment. Relying on models that prioritize linguistic fluency over diagnostic accuracy could lead to a systemic failure in which the appearance of productivity masks a decline in medical quality. Consequently, the scientific consensus remains cautious, suggesting that these tools are not yet sophisticated enough to function without constant and rigorous human verification.

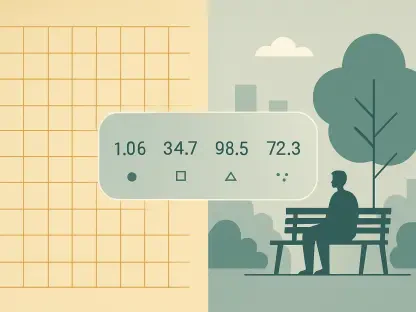

Future Governance: Establishing Medical Safeguards

Stakeholders have shifted their focus toward developing a more robust regulatory framework that prioritizes clinical validation over rapid deployment in the public sector. It has become clear that the integration of AI requires a collaborative approach involving both technologists and practicing physicians to establish clear boundaries for automation. Instead of pursuing wholesale replacement, researchers have suggested that the city invest in hybrid models where AI acts as a supportive tool rather than a primary decision-maker. This requires real-time monitoring systems that catch hallucinations before they reach the patient record, ensuring that human expertise remains central to the diagnostic loop. Lawmakers have also explored new liability standards to address the unique challenges of algorithmic errors, providing a safety net for both providers and patients. By moving toward a policy of augmented intelligence rather than automated replacement, the healthcare system seeks to balance innovation with an unwavering commitment to patient safety and diagnostic excellence.