The silent machinery of global commerce now pulses with a new kind of energy as autonomous AI agents begin to navigate the complex digital corridors of the world’s largest corporations. These digital entities are no longer just answering simple queries; they are attempting to interpret decades of deeply embedded business logic to make high-stakes decisions in real time. As this shift accelerates, the stability of the Enterprise Resource Planning (ERP) systems that anchor the global supply chain has come under intense scrutiny. SAP’s recently clarified API governance policy arrives as a critical intervention, seeking to harmonize the frantic pace of AI innovation with the unwavering requirement for system reliability.

Navigating the Intersection of ERP Stability and Autonomous Innovation

The transition from human-operated software to autonomous AI agents has arrived faster than most enterprise infrastructures were designed to handle. This sudden surge in automation creates a fundamental tension between the desire for total data accessibility and the absolute necessity of maintaining a stable core. For many organizations, the rush to connect large language models to their sensitive business data has inadvertently turned AI agents into accidental stress-testers. These agents can overwhelm a system to the point of failure if they are not properly governed, leading to a breakdown in the very processes they were meant to optimize.

SAP’s strategic response is rooted in the “Clean Core” philosophy, a commitment to keeping the heart of the business system free from messy, unstable customizations. By clarifying its API policy, the company is not merely updating technical documentation; it is defending the integrity of the enterprise environment. The primary challenge now lies in moving beyond simple data retrieval. Organizations must transition toward a model where AI can understand the fifty years of business logic embedded within the ERP without compromising the security or performance of the entire supply chain.

The Evolution from Traditional Cloud Management to Agentic AI

For over a decade, enterprise cloud products have enforced rules on rate limits and data separation, but those controls have often been defined separately within each product line. The current landscape calls for unifying these disparate policies across SAP’s entire portfolio, including platforms like SuccessFactors, Ariba, and LeanIX. This harmonization is essential for creating a predictable environment where the next generation of automation can flourish. Without a unified standard, businesses risk “operational drift,” where third-party tools rely on undocumented or unstable system layers that can break during a routine update.

The shift is largely driven by the rise of autonomous agents, which interact with backends in ways that traditional transactional interfaces never anticipated. Unlike a human user who clicks a button to perform a specific task, an agent might crawl through thousands of data points to understand a conceptual relationship. A cross-portfolio standard is meant to provide a stable foundation that prevents system crashes and keeps automation an asset rather than a liability. As companies scale their AI initiatives, this alignment keeps the underlying infrastructure resilient and manageable.

Decoding the Strategic API Framework for Modern Integration

A critical distinction in this new framework separates the “Z namespace,” where customers build their own custom code, from internal, unreleased objects such as ODP-RFC. Under the policy, customer-developed interfaces remain fully usable, while undocumented internal paths are closed to external use so that integrations do not fail catastrophically during version upgrades. This distinction is vital for maintaining the “Clean Core”: businesses can innovate within their own designated spaces without accidentally tethering their operations to unstable, internal SAP components that were never intended for external use.
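To make the namespace distinction concrete, the following Python sketch classifies an object name as customer code, a released API, or an internal object, and blocks only the last category. It is a simplified, hypothetical illustration: the `classify` and `is_allowed` helpers, the example object names, and the hard-coded allowlist are assumptions made for this example, not part of SAP’s policy or tooling. A real check would take release status from SAP’s published API catalog rather than from a literal set, and only the Z prefix mentioned in the text is modeled.

```python
# Hypothetical sketch: gate agent access by object namespace and release status.
# All names and the allowlist below are illustrative assumptions, not SAP APIs.

CUSTOMER_PREFIXES = ("Z",)  # only the "Z namespace" mentioned in the text is modeled


def classify(obj_name: str, released: frozenset) -> str:
    """Return 'customer', 'released', or 'internal' for an object name."""
    name = obj_name.upper()
    if name.startswith(CUSTOMER_PREFIXES):
        return "customer"  # customer-built code in the Z namespace
    if name in released:
        return "released"  # documented, released interface
    return "internal"      # unreleased internal object: not for external use


def is_allowed(obj_name: str, released: frozenset) -> bool:
    """Allow customer and released objects; block internal ones."""
    return classify(obj_name, released) != "internal"


# Illustrative allowlist; a real system would load this from a catalog.
RELEASED = frozenset({"EXAMPLE_RELEASED_ORDER_API"})

for obj in ("ZSALES_DASHBOARD", "EXAMPLE_RELEASED_ORDER_API", "EXAMPLE_INTERNAL_ODP_READER"):
    print(obj, classify(obj, RELEASED), is_allowed(obj, RELEASED))
```

The design choice worth noting is deny-by-default: anything that is neither customer code nor explicitly released is treated as internal, so a new or renamed internal object is blocked rather than silently exposed.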

Furthermore, there is a significant shift occurring from traditional transactional calls to what experts call semantic exploration. Standard APIs are designed for “request-response” flows, such as posting a goods receipt. However, AI agents attempt to learn the “semantic ontology” of a business, mapping relationships between headers, ledgers, and commitments. This mapping involves a massive volume of sequential calls that requires rigorous governance to maintain performance. Without this oversight, the sheer load of an AI trying to “understand” a business could bring even the most robust servers to a standstill.
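Because a single agent’s exploration can generate thousands of sequential calls, one common form of the governance described here is per-client rate limiting. The sketch below uses a token bucket, a standard technique chosen purely for illustration; the article does not say which algorithm SAP applies, and the `AgentRateLimiter` class, its `rate` and `capacity` parameters, and the agent IDs are hypothetical.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows short bursts up to `capacity`, then a steady `rate` calls per second."""

    def __init__(self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start full so an agent can burst immediately
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        elapsed = max(0.0, now - self.last)
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False  # caller should back off instead of hammering the backend


class AgentRateLimiter:
    """Hypothetical gateway component: one bucket per agent identity."""

    def __init__(self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets: Dict[str, TokenBucket] = {}

    def allow(self, agent_id: str) -> bool:
        bucket = self.buckets.get(agent_id)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[agent_id] = bucket
        return bucket.allow()


if __name__ == "__main__":
    fake_now = [0.0]  # controllable clock so the demo is deterministic
    limiter = AgentRateLimiter(rate=1.0, capacity=3, clock=lambda: fake_now[0])
    print([limiter.allow("agent-a") for _ in range(5)])  # [True, True, True, False, False]
    fake_now[0] = 2.0  # two seconds later, two tokens have been refilled
    print([limiter.allow("agent-a") for _ in range(3)])  # [True, True, False]
```

Starting each bucket full permits a short burst, while the refill rate caps sustained load. A real gateway would also need shared state across server instances, which this in-memory sketch omits.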

The technical reality of the Model Context Protocol (MCP) also plays a major role in this discussion. While MCP has become a popular emerging standard for connecting models to tools, it often functions as simple “plumbing” without any inherent business context. Without governed layers, a naive implementation of MCP can lead to massive inefficiencies. Production data suggests that context-aware implementations are up to seven times more cost-effective than naive ones. Governance ensures that AI interactions are optimized for both speed and financial viability, preventing a situation where automation costs more in compute tokens than it saves in human labor.

Perspectives on Enterprise Security and Supply Chain Integrity

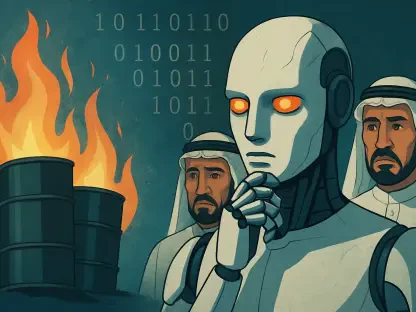

Anirban Majumdar, Head of the Office of the CTO at SAP, characterizes the current approach as “governance, not gatekeeping,” emphasizing that security is the primary driver of these restrictions. The enterprise world is currently facing sophisticated threats, such as the “Mini Shai-Hulud” supply chain attack, which demonstrated how vulnerable unmanaged community-built servers can be. When these ungoverned tools are granted access to a production ERP environment, they create a backdoor for malicious actors to exploit sensitive financial and human resources data.

The OWASP MCP Top 10 catalogs critical vulnerabilities, including tool poisoning, prompt injection, and privilege escalation. Expert consensus suggests that connecting a company’s most sensitive data to open-source protocols without a sanctioned, enterprise-grade gateway creates an unacceptable risk profile. In this high-stakes environment, the lack of a governed path is not just a technical oversight; it is a direct threat to the integrity of the global supply chain. Enforcing these API standards is meant to ensure that every autonomous action is traceable and permitted under existing corporate security models.

Establishing a Governed Path for Third-Party AI Connectivity

To move forward, organizations are being encouraged to look toward the Linux Foundation’s Agent2Agent (A2A) protocol. This protocol allows different AI systems, such as SAP Joule and Microsoft 365 Copilot, to interoperate seamlessly without bypassing established security models. Instead of relying on ad-hoc connections that could expose vulnerabilities, developers are urged to implement “AI Golden Paths”—co-engineered architectures that have been validated for both security and performance. These paths provide a blueprint for innovation that does not sacrifice the safety of the core system.

Practical implementation also requires a deeper focus on metadata and context layers. Integration should use tools such as the SAP Knowledge Graph and Open Resource Discovery to give agents the metadata they need to understand business logic effectively. Furthermore, for teams using common frameworks like CAP, UI5, or ABAP, the move toward SAP-sanctioned MCP servers provides a way to use familiar protocols within a secure, managed environment. Prioritizing identity and trust workstreams will be essential, ensuring that every digital agent has a clear identity and that its permissions are strictly managed.
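The identity and permission workstream can be pictured as a gateway that checks every agent action against explicitly granted scopes and records each decision, which is what makes autonomous actions auditable. This is a hypothetical sketch: `AgentIdentity`, `AuditedGateway`, and the scope strings are illustrative names, not SAP or Joule APIs, and a production system would rely on a real identity provider and tamper-resistant audit storage instead of an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str            # unique identity for the digital agent
    owner: str               # human or team accountable for the agent
    scopes: FrozenSet[str]   # explicitly granted permissions (hypothetical format)


@dataclass
class AuditedGateway:
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, action: str) -> bool:
        """Deny by default: allow only actions listed in the agent's scopes."""
        allowed = action in agent.scopes
        # Record every decision, allowed or not, so it can be audited later.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "allowed": allowed,
        })
        return allowed


agent = AgentIdentity("procurement-agent-01", "procurement-team",
                      frozenset({"purchase_order:read"}))
gateway = AuditedGateway()
print(gateway.authorize(agent, "purchase_order:read"))   # True
print(gateway.authorize(agent, "purchase_order:post"))   # False
print(len(gateway.audit_log))                            # 2
```

Logging denied attempts alongside granted ones matters for auditability: a pattern of refusals can signal a misconfigured or compromised agent.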

In light of these developments, enterprise leaders are shifting their focus toward the long-term sustainability of their digital ecosystems. It is becoming clear that the rapid deployment of AI cannot happen in a vacuum, divorced from the rigorous standards of traditional software engineering. Organizations are beginning to prioritize “Agent Identity” frameworks that make every autonomous action as auditable as a manual entry. This more disciplined integration model lets businesses capture the efficiency of AI while reinforcing the walls around their most valuable data assets. The resulting balance between openness and security offers a new blueprint for how heavy industry and global finance can safely embrace the agentic future.