The field of optoelectronics is witnessing a seismic shift as researchers move away from traditional silicon-based architectures toward purely light-based processing. Leading this charge is Laurent Giraid, a technologist whose work at the intersection of Artificial Intelligence and photonic hardware is redefining how machines “think” in real time. By using light pulses to mimic the firing of biological neurons, Giraid and his peers are developing systems that operate at speeds far beyond the capabilities of conventional GPUs. In this discussion, we explore a two-chip photonic neuromorphic system designed to handle reinforcement learning without the lag of electronic conversion. We delve into how these high-speed chips manage intricate control tasks like balancing pendulums and what that means for the future of autonomous navigation and embodied intelligence.

How do you integrate the 16-channel Mach-Zehnder interferometer mesh with the laser array to keep computation entirely in the optical domain? What specific calibration steps are required to manage 272 trainable parameters while avoiding the delays typically caused by converting signals back into electronic formats?

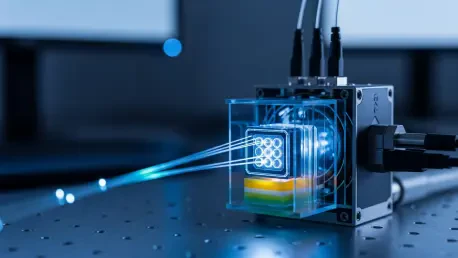

The integration process is really about creating a seamless bridge between linear weight processing and nonlinear neuron firing, all while staying within the photonic realm. We utilize a 16×16 Mach-Zehnder interferometer (MZI) mesh chip that is specifically tailored to handle the high-speed requirements of spiking neural networks. To make this work without falling back on electronics, we pair this mesh with a second chip containing a distributed feedback laser array equipped with saturable absorbers. This second chip is the key to achieving low-threshold nonlinear spiking activation, essentially acting as the “neuron” that decides when to fire based on the light it receives.
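As a rough illustration of the two-chip split described here, the sketch below models the mesh as a matrix of non-negative intensity weights and the laser array as a per-channel threshold. The function names, the random weights, and the threshold value are assumptions made for illustration; they are not taken from the actual chips.

```python
import numpy as np

def mesh_forward(weights, inputs):
    """Linear stage: weighted sum of input optical powers per output channel."""
    return weights @ inputs

def spike_activation(power, threshold):
    """Nonlinear stage: emit a spike (1) wherever summed power reaches threshold."""
    return (np.asarray(power) >= threshold).astype(int)

rng = np.random.default_rng(0)
N_CHANNELS = 16  # matches the 16x16 mesh mentioned in the interview

# Hypothetical values: intensities and weights are kept non-negative,
# as a stand-in for an incoherent (power-based) system.
weights = rng.uniform(0.0, 1.0, size=(N_CHANNELS, N_CHANNELS))
inputs = rng.uniform(0.0, 1.0, size=N_CHANNELS)
thresholds = np.full(N_CHANNELS, 4.0)  # arbitrary units, illustrative only

summed = mesh_forward(weights, inputs)         # chip 1: linear weighting
spikes = spike_activation(summed, thresholds)  # chip 2: threshold firing
print(spikes)
```

The point of the sketch is only the division of labor: one stage does the weighted sum, the other decides which channels fire.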

Managing the 272 trainable parameters is a delicate balancing act that requires a sophisticated calibration framework. Because we are dealing with physical light paths, even tiny variations in temperature or fabrication can shift how the system learns. We address this by using a hardware-software collaborative approach where we first map out the desired network behavior and then fine-tune those 272 parameters directly on the chip. By doing this, we bypass the traditional “optical-to-electronic-to-optical” conversion cycle, which has historically been the primary source of latency. It’s a rewarding feeling to see those optical signals zip through the mesh at the speed of light, knowing that the decision-making is happening entirely within the photons themselves, rather than waiting for a slow electronic processor to catch up.
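One simple way to picture a calibration step of this kind is to probe the mesh one input channel at a time, compare the measured response with the target, and rescale the programmed weights. The sketch below does this against a simulated chip; the multiplicative error model, the `simulated_chip` function, and the single-step correction are all assumptions, not the team's published procedure.

```python
import numpy as np

def probe_transfer_matrix(chip, n_channels):
    """Estimate a chip's linear response by lighting one input channel at a time."""
    measured = np.zeros((n_channels, n_channels))
    for ch in range(n_channels):
        probe = np.zeros(n_channels)
        probe[ch] = 1.0
        measured[:, ch] = chip(probe)
    return measured

rng = np.random.default_rng(1)
N = 16
target = rng.uniform(0.2, 0.8, size=(N, N))   # desired weights (illustrative)
drift = rng.normal(1.0, 0.05, size=(N, N))    # hypothetical fabrication/thermal error

def simulated_chip(x):
    # Stand-in for hardware: the programmed weights are distorted by drift.
    return (target * drift) @ x

measured = probe_transfer_matrix(simulated_chip, N)
error_before = np.abs(measured - target).max()

# Correction: rescale each programmed weight by target / measured.
corrected_program = target * (target / measured)

def corrected_chip(x):
    return (corrected_program * drift) @ x

error_after = np.abs(probe_transfer_matrix(corrected_chip, N) - target).max()
print(error_before, error_after)
```

With a purely multiplicative error the correction is exact in one step; real devices would likely need iteration and would not follow such a clean model.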

When tackling real-time control tasks like the CartPole or Pendulum, how does the system maintain accuracy within 2% of software-only models? Could you walk us through the hardware-software collaborative framework used to fine-tune the network and account for physical chip-level variations?

Maintaining such a high degree of accuracy—specifically dropping only 1.5% for the CartPole task and 2% for the more erratic Pendulum swing—is a testament to our three-stage training pipeline. We start with a global pre-training phase in a software environment where the model learns the basic physics of the task. Once the foundation is laid, we move to “in-situ” training on the MZI mesh chip itself to let the network adapt to the specific physical characteristics of the hardware. Finally, we implement a software fine-tuning stage that compensates for any lingering chip-level variations or “noise” inherent in photonic components.
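The three stages can be sketched on a toy problem. The example below swaps reinforcement learning for a small supervised regression so that it stays short and runnable, and it uses a simulated chip with made-up gain and offset errors; every name and number in it is an assumption for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 4                                       # toy size, not the 16-channel chip
X = rng.uniform(0, 1, size=(200, N))        # toy inputs
W_true = rng.uniform(0, 1, size=(N, N))
Y = X @ W_true.T                            # toy targets

gain = rng.normal(1.0, 0.1, size=(N, N))    # hypothetical per-weight hardware error
offset = rng.normal(0.0, 0.05, size=N)      # hypothetical per-channel output offset

def ideal(W, x):
    """Software model of the mesh."""
    return x @ W.T

def chip(W, x):
    """Simulated hardware: programmed weights are distorted, outputs are offset."""
    return x @ (W * gain).T + offset

def mse(pred):
    return float(np.mean((pred - Y) ** 2))

def train(W, forward, steps=2000, lr=0.1):
    """Gradient descent using errors from `forward` and the ideal-model gradient."""
    for _ in range(steps):
        err = forward(W, X) - Y
        W = W - lr * err.T @ X / len(X)
    return W

# Stage 1: global pre-training in software.
W = train(np.zeros((N, N)), ideal)
loss_deployed = mse(chip(W, X))    # error when software weights are loaded as-is

# Stage 2: in-situ training, with the forward pass measured on the (simulated) chip.
W = train(W, chip)
loss_insitu = mse(chip(W, X))

# Stage 3: software fine-tuning, here a per-channel bias fitted to chip outputs.
bias = np.mean(chip(W, X) - Y, axis=0)
loss_final = mse(chip(W, X) - bias)

print(loss_deployed, loss_insitu, loss_final)
```

In this toy setting each stage lowers the error measured on the simulated hardware, which is the behavior the pipeline is meant to deliver; it says nothing about the actual CartPole or Pendulum results.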

In the CartPole experiment, the system had to learn through trial and error how to balance a pole on a moving cart, which is a classic benchmark for rapid decision-making. We saw the hardware decisions mirror the software-trained logic almost perfectly in real time. For the Pendulum task, which is significantly more complex because it involves swinging a weight from a hanging position to a balanced upright state, the system still delivered reliable performance. This collaborative framework ensures that we aren’t just building a theoretical model, but a functional piece of hardware that can survive the imperfections of the real world. Seeing the system achieve “perfect” performance on the CartPole task in a physical testbed was a clear indication that photonic reinforcement learning is no longer just a laboratory curiosity but a viable path for embodied intelligence.

With on-chip computing latency reaching just 320 picoseconds, what specific architectural choices allow for energy efficiency comparable to high-end GPUs? How do these metrics influence the design of larger-scale systems, such as 128-channel chips, for more complex robotic environments?

The latency of 320 picoseconds is truly staggering—we are talking about 320 trillionths of a second to complete an on-chip computation. To put that in perspective, current electronic architectures often struggle with delays that are orders of magnitude higher due to the time it takes for electrons to move through copper and for signals to be buffered. Our architectural choice to use an incoherent photonic neuromorphic system allows us to reach an energy efficiency of 1.39 tera operations per second per watt (TOPS/W) for linear tasks. This places our photonic chips firmly in the same class as high-end GPUs, which typically hover around the 1 TOPS/W mark.
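The figures quoted here can be unpacked with a few lines of arithmetic. The conversions below use only the 320 ps and 1.39 TOPS/W numbers from the interview; treating the inverse latency as an upper bound on strictly serial computations per second is an assumption added for perspective.

```python
LATENCY_PS = 320          # on-chip computing latency quoted in the interview
TOPS_PER_WATT = 1.39      # linear energy efficiency quoted in the interview
SPEED_OF_LIGHT_M_S = 299_792_458

latency_s = LATENCY_PS * 1e-12

# 1 TOPS/W is 1e12 operations per joule, so energy per operation in
# picojoules is simply the reciprocal of the TOPS/W figure.
energy_per_op_pj = 1.0 / TOPS_PER_WATT

# Distance light covers in vacuum during one on-chip computation.
distance_cm = SPEED_OF_LIGHT_M_S * latency_s * 100

# Upper bound on back-to-back computations if each waited for the previous one.
serial_ops_per_second = 1.0 / latency_s

print(f"energy per operation: {energy_per_op_pj:.3f} pJ")
print(f"light travels {distance_cm:.2f} cm in {LATENCY_PS} ps")
print(f"serial computations per second: {serial_ops_per_second:.3e}")
```

So the linear stage spends roughly 0.72 pJ per operation, and a single on-chip computation finishes in the time light needs to cross under 10 cm of vacuum.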

When you look at computing density, we are hitting 0.13 TOPS/mm² for linear operations and over 533 giga operations per second per square millimeter (GOPS/mm²) for nonlinear activations. These numbers are crucial because they show that we can maintain GPU-level energy efficiency while shrinking the physical footprint and drastically reducing heat. As we look toward the future, these metrics are the blueprint for our upcoming 128-channel chips. Scaling up to 128 channels will allow us to handle the massive data streams required for autonomous navigation in unpredictable environments. By maintaining this 320-picosecond latency while increasing the channel count, we can create a system capable of “feeling” and reacting to its surroundings almost instantaneously, which is a prerequisite for any robot operating alongside humans.

All-optical nonlinear activation has historically been a bottleneck for spiking neural networks. How does the integration of saturable absorbers within a laser array solve this, and what are the functional trade-offs when scaling these neuron arrays for use in autonomous driving technologies?

You’ve hit on the most significant hurdle in the field: the “linear-only” limitation of light. Traditionally, photons pass through each other without interacting, which is great for moving data but terrible for the decision-making logic that requires a non-linear “trigger.” By integrating saturable absorbers into our distributed feedback laser array, we’ve created a low-threshold nonlinear switch. When the intensity of the incoming light reaches a certain point, the absorber saturates, allowing a pulse—or a “spike”—to be emitted. This mimics the way a biological neuron reaches a threshold and fires, and it does so without needing to convert the light into electricity.
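The threshold-and-fire behavior described here is often abstracted as a leaky integrate-and-fire neuron. The sketch below uses that standard abstraction rather than a physical model of a laser with a saturable absorber; the `threshold` and `leak` values are arbitrary assumptions.

```python
def lif_spikes(input_powers, threshold=1.0, leak=0.8):
    """Leaky integrate-and-fire: accumulate input, spike and reset at threshold."""
    state = 0.0
    spikes = []
    for power in input_powers:
        state = leak * state + power   # accumulated energy decays between pulses
        if state >= threshold:
            spikes.append(1)           # threshold reached: emit a spike
            state = 0.0                # reset after firing
        else:
            spikes.append(0)
    return spikes

weak = lif_spikes([0.1] * 6)     # stays below threshold, never fires
strong = lif_spikes([0.6] * 6)   # crosses threshold on every second pulse

print(weak)    # [0, 0, 0, 0, 0, 0]
print(strong)  # [0, 1, 0, 1, 0, 1]
```

Weak input never accumulates enough to fire, while stronger input produces a regular spike train, which is the all-or-nothing behavior the saturable absorber is said to provide optically.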

However, scaling these arrays for something as demanding as autonomous driving does come with trade-offs. While we gain immense speed and energy savings, the complexity of managing a compact, hybrid-integrated large-scale chip rises steeply with channel count. We have to ensure that as we move from 16 channels to 128 or more, the thermal stability of the laser array remains rock-solid and the “thresholds” for firing remain consistent across the entire chip. There is also the challenge of edge computing: making sure these chips are rugged enough and compact enough to fit into a vehicle’s sensor suite. We are currently working on a fully functional, large-scale photonic spiking neural network that can solve these complex navigation tasks, but the ultimate goal is to move from these experimental “benchtop” setups to a single, robust hybrid package.
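To get a feel for what the jump from 16 to 128 channels means for calibration, one can count tunable parameters. The sketch below assumes the 272 parameters quoted earlier break down as 16 × 16 mesh weights plus 16 per-channel values; that split is a guess that happens to match the number, not something the interview states.

```python
def parameter_count(n_channels):
    """Assumed split: n*n mesh weights plus n per-channel parameters."""
    return n_channels * n_channels + n_channels

counts = {n: parameter_count(n) for n in (16, 32, 64, 128)}
for n, count in counts.items():
    print(f"{n:>3} channels -> {count:>6} parameters")

growth = counts[128] / counts[16]
print(f"128 vs 16 channels: about {growth:.1f}x more parameters")
```

Under this assumption an eightfold increase in channels gives roughly sixty times as many parameters to tune, since the count grows with the square of the channel count. The thermal and threshold-uniformity issues mentioned above are not captured by this number.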

What is your forecast for photonic spiking neural systems?

I believe we are on the cusp of a total transformation where photonic spiking systems become the “brain” for edge devices that require near-zero-latency responses. In the next five to ten years, I expect we will see these chips integrated directly into autonomous vehicles and robotic limbs, allowing them to process environmental data at speeds that make current AI feel sluggish. We will move away from central cloud processing for real-time tasks, instead relying on these low-power, light-speed chips to make split-second survival decisions. As we perfect the 128-channel architectures and improve our hybrid integration techniques, the energy barriers that currently limit AI growth will begin to crumble, ushering in an era of truly “embodied intelligence” where machines learn and react as fluidly as biological organisms.