As a School of Science Dean’s Postdoctoral Fellow at MIT, Sergei Kotelnikov bridges the gap between the rigid laws of physics and the complex, often chaotic world of biology. With a foundation in applied mathematics and physics from the Moscow Institute of Physics and Technology, he has spent years decoding how simple organic molecules transitioned from primordial chemistry into the “magic” of living organisms. His work focuses on the architecture of life, specifically protein structures, and how their interactions can be predicted to fight diseases once thought undruggable. By blending machine learning, including geometric deep learning, with classical physics, Kotelnikov is developing the next generation of computational tools to solve the mysteries of molecular assembly.

The following discussion explores the emergence of biological complexity from statistical physics, the mathematical hurdles of modeling synthetic molecules like PROTACs, and the vital synergy between digital simulations and wet lab experimentation.

Transitioning from physics and applied mathematics to computational biology requires a shift in perspective regarding complexity. How does a background in statistical physics help you understand the emergence of life from simple organic compounds, and what specific mental frameworks from physics do you apply to biological modeling?

Statistical physics provides a unique lens because it focuses on how macroscopic properties emerge from microscopic interactions, which is exactly what happens when simple organic compounds assemble into a living cell. In my view, life is a spectacular manifestation of evolution sharpening physical phenomena over billions of years. When I look at a protein, I don’t just see a biological entity; I see a system governed by the laws of quantum mechanics and statistical thermodynamics that somehow gives rise to mystery at a larger scale. I apply the framework of “emergence of complexity,” where the whole becomes significantly greater than the sum of its parts, allowing me to write formulas for molecular behavior while respecting the biological “magic” that follows. This mathematical rigor allows me to treat biological systems not as random occurrences, but as predictable, albeit highly complex, physical systems.

Proteins function as the fundamental architecture of an organism, yet slight variations in how they fold or connect can lead to significant diseases. What are the primary challenges in simulating these irregular configurations, and how do these models directly inform the development of targeted medical interventions?

Proteins are like the bricks of a building: when they are folded, curled, or twisted in unusual ways, the entire structure of the organism suffers. The primary challenge in simulating these irregular configurations is that we have very limited experimental data on how these specific “broken” shapes interact with other cellular components. My models act as a digital microscope, allowing us to break down the “Lego tower” of a disease and examine each individual piece to find the exact culprit behind the malfunction. By predicting these shapes through simulation, we can identify targets for drug discovery, helping us correct the pairing of proteins before we ever step into a physical laboratory. This saves immense amounts of time and resources by narrowing down which interventions are most likely to successfully restore a protein’s natural function.

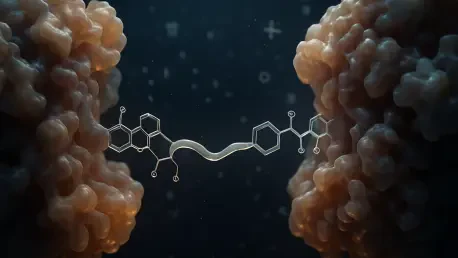

Modeling PROTAC molecules is difficult because they force interactions between proteins that do not naturally pair, often using a flexible linker. Could you explain the step-by-step process of using algorithms like the Fast Fourier Transform to make these computations tractable, and what trade-offs occur when modeling those flexible connections?

Modeling PROTACs is essentially like trying to guess the orientation of two irregular, unmatched Lego pieces joined by a bendy, flexible connector. To handle this, we use a bit of “applied math judo” where we conceptually cut the flexible linker into two separate halves and model the behavior of each half independently. We then use the Fast Fourier Transform (FFT) algorithm to rapidly calculate all possible configurations where these two halves could meet in 3D space, which turns an otherwise impossible computational task into something manageable. The trade-off is that we must simplify certain aspects of the linker’s flexibility to prevent the number of calculations from exploding, but this is a necessary compromise to achieve a high-performing model. This method has already proven its worth by allowing us to predict complex structures that were previously considered “undruggable” in the context of cancer research.
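For readers curious how an FFT can score many placements at once, here is a minimal sketch in Python with NumPy. It is not Kotelnikov's actual pipeline. It assumes each linker half is reduced to a hypothetical 3D grid (`reach_a`, `reach_b`) marking where its free end can sit, and it uses the correlation theorem to count overlaps for every relative translation in one pass. Rotations, energy terms, and chemistry are left out, and the grid wraps around at its edges.

```python
import numpy as np

def translation_scores(reach_a, reach_b):
    """Circular cross-correlation of two 3D grids via FFT.

    scores[t] = sum over x of reach_a[x + t] * reach_b[x],
    i.e. the overlap when reach_b is shifted by t (with wrap-around).
    """
    fa = np.fft.fftn(reach_a)
    fb = np.fft.fftn(reach_b)
    return np.real(np.fft.ifftn(fa * np.conj(fb)))

# Hypothetical toy grids: each linker half can reach exactly one voxel.
n = 16
reach_a = np.zeros((n, n, n))
reach_b = np.zeros((n, n, n))
reach_a[5, 6, 7] = 1.0
reach_b[2, 2, 2] = 1.0

scores = translation_scores(reach_a, reach_b)
best = np.unravel_index(np.argmax(scores), scores.shape)
best_shift = tuple(int(i) for i in best)
print(best_shift)  # (3, 4, 5): the shift that moves (2, 2, 2) onto (5, 6, 7)
```

Scoring every shift directly would cost on the order of N² operations for a grid of N voxels, while the FFT route costs on the order of N log N, which is the kind of saving the interview describes.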

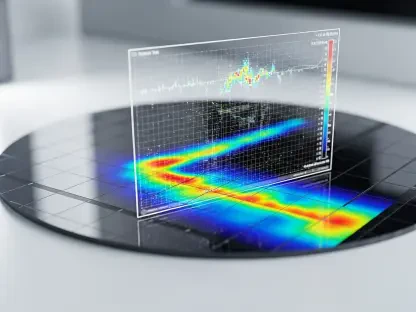

Traditional machine learning often requires massive datasets, which are not always available in experimental biology. How does integrating physical and geometric knowledge into neural network architectures improve the generalizability of your models, and what are the practical implications for fields like cancer immunology or agriculture?

In biology, we often suffer from a scarcity of data, so we cannot rely on the “brute force” machine learning methods used in other industries. By integrating geometric deep learning—which embeds physical laws and molecular geometry directly into the neural network—we ensure the model already understands the basic rules of how atoms can and cannot move. This approach significantly reduces the amount of training data required because the AI doesn’t have to “relearn” gravity or chemical bonding from scratch. For fields like cancer immunology or crop protection, this means we can develop highly accurate models even when experimental samples are rare or difficult to obtain. It allows us to move from just observing data to actually understanding the underlying principles that govern protein interactions in diverse environments.
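One common way geometric knowledge enters a model is through features that do not change when a molecule is rotated or moved. The sketch below is an illustration in Python with NumPy, not the architecture discussed in the interview. The coordinates are random stand-ins for atom positions, and `pairwise_distances` and `random_rotation` are hypothetical helper names. It checks that interatomic distances are identical before and after a rigid motion, so a network fed such features would not need extra data to learn that symmetry.

```python
import numpy as np

def pairwise_distances(coords):
    """Return the (n, n) matrix of Euclidean distances between points."""
    diff = coords[:, None, :] - coords[None, :, :]
    return np.linalg.norm(diff, axis=-1)

def random_rotation(rng):
    """Build a random 3x3 rotation matrix from a QR decomposition."""
    q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]  # flip one axis so the determinant is +1
    return q

rng = np.random.default_rng(0)
coords = rng.normal(size=(5, 3))        # stand-in for five atom positions
rotation = random_rotation(rng)
shift = np.array([1.0, -2.0, 0.5])
moved = coords @ rotation.T + shift     # same "molecule", new pose

same = np.allclose(pairwise_distances(coords), pairwise_distances(moved))
print(same)  # True
```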

Developing computational models can sometimes lead to a disconnect from physical reality. How do you structure your collaboration with wet lab researchers to test your predictions, and what specific benefits arise when computational biologists and experimentalists work together on the same protein structures?

The disconnect from reality is a constant risk when you spend your time in an “imaginary world” of assumptions and mathematical simplifications. At MIT, I collaborate closely with experimentalists who perform the actual wet lab tests that serve as a reality check for my digital predictions. We work in a symbiotic loop: I provide them with the most likely protein configurations to investigate, and they provide me with the hard data that proves or disproves my model’s accuracy. This collaboration is invaluable because it grounds the mathematics in physical truth and ensures that the models we build have a real-world impact on disease research. Having an experimentalist “across the hall” allows us to iterate much faster, turning a theoretical prediction into a verified biological discovery in a fraction of the time.

Approaching biological systems as modular assemblies—much like building with interlocking blocks—allows for a unique creative freedom. How has the habit of building without instructions influenced your approach to scientific problem-solving, and how do you decide which biological mysteries are currently ripe for computational breakdown?

My childhood spent playing with Lego bricks, where I preferred building my own designs over following the instructions, defined my entire scientific philosophy. It taught me that you can build anything if you understand how the pieces fit together, a mindset I now apply to the modular assemblies of proteins. I decide which mysteries to tackle by looking for areas where the “instructions” are missing—where traditional biology hasn’t yet explained a complex interaction—but where we have enough physical clues to start building a model. This sense of creative freedom allows me to approach problems like PROTAC modeling with a fresh perspective, looking for mathematical shortcuts like FFT rather than following established but inefficient protocols. I am naturally drawn to the “candy shop” of unsolved problems where the expansion of our understanding feels most satisfying.

What is your forecast for the field of computational protein modeling?

I believe we are entering an era where the distinction between “computational” and “experimental” biology will almost entirely disappear as our models become indistinguishable from physical observation. Over the next decade, we will likely see a shift toward truly autonomous drug discovery, where AI-driven systems not only predict protein structures but also design the synthetic molecules to manipulate them with near-perfect precision. We will move beyond static snapshots of proteins and begin to model the full, dynamic “movie” of cellular life in real time, allowing us to stop diseases at the very moment they begin to unfold. For readers, this means the future of medicine will be increasingly personalized and preventative, driven by the digital blueprints we are drawing today at the intersection of physics and machine learning.