The traditional expectation that a software engineer must anticipate every possible user interaction during the design phase is rapidly becoming obsolete in the enterprise landscape. As artificial intelligence transitions from simple chat interfaces to autonomous agents capable of complex reasoning, the static nature of conventional front-end development has emerged as a significant bottleneck. This review examines the shift toward Agentic User Interfaces, a paradigm where the digital environment is no longer a fixed set of screens but a fluid, generative experience that adapts to the specific intent of a machine-led workflow.

The Evolution of Agentic Interfaces and A2UI Frameworks

The emergence of Agentic AI marks a departure from the “chatbot” era toward a model of autonomous productivity. In previous iterations, AI was confined to a text box, providing information that a human then had to manually input into various enterprise systems. Today, the focus has shifted toward the Agent-to-User Interface (A2UI) framework, which allows an agent to bridge the gap between back-end logic and human interaction by constructing its own interface components on the fly.

This evolution is fundamentally rooted in the need for agility within large-scale organizations. While traditional UI development cycles could take weeks to implement a single new workflow, A2UI allows an agent to interpret a business requirement and present the necessary interactive elements immediately. This shift is not merely about aesthetic flexibility; it represents a fundamental change in how software is consumed, moving from a “tool-centric” approach to an “intent-centric” one where the interface serves the agent’s current objective.

Core Architectural Components of Agentic Design

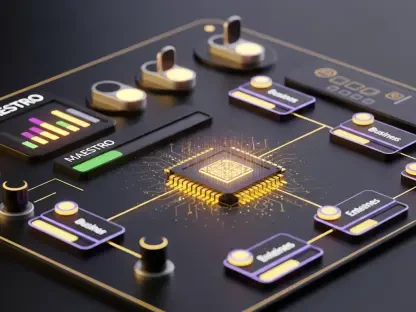

The Agent-to-User Interface (A2UI) Pattern

At the heart of this technological shift is the A2UI pattern, which functions as a translation layer between an agent’s output and a functional web component. Instead of simply outputting text, the agent generates structured instructions that a specialized renderer interprets to create forms, tables, or interactive dashboards. This allows for a “just-in-time” interface that exists only for the duration of the task, significantly reducing the cognitive load on the user, who no longer needs to navigate complex menu trees.

The pattern depends on decoupling the “what” from the “how.” The agent decides what data is needed, such as a specific set of financial fields, while the A2UI renderer determines how to display it based on a library of pre-approved, accessible components. Because the agent can only select from that library, the interface remains compliant with brand standards and security protocols even though it is generated dynamically, and it feels native to the enterprise ecosystem.
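The split between “what” and “how” can be illustrated with a minimal sketch. It is not the A2UI specification: the component names (`text_field`, `number_field`), the instruction shape, and the `render` function are assumptions made up for this example. The agent supplies a list of structured instructions, and the renderer only accepts component types that appear in an allowlist.

```python
from html import escape


def render_text_field(props: dict) -> str:
    # Escape agent-supplied strings so they cannot inject markup.
    name, label = escape(props["name"]), escape(props["label"])
    return f'<label>{label} <input type="text" name="{name}"></label>'


def render_number_field(props: dict) -> str:
    name, label = escape(props["name"]), escape(props["label"])
    return f'<label>{label} <input type="number" name="{name}"></label>'


# Hypothetical allowlist: the agent may only reference types listed here.
APPROVED_COMPONENTS = {
    "text_field": render_text_field,
    "number_field": render_number_field,
}


def render(instructions: list[dict]) -> str:
    """Turn the agent's structured output into markup, rejecting unknown types."""
    parts = []
    for item in instructions:
        renderer = APPROVED_COMPONENTS.get(item["type"])
        if renderer is None:
            raise ValueError(f"component type not approved: {item['type']!r}")
        parts.append(renderer(item["props"]))
    return "<form>" + "".join(parts) + "</form>"


# The "what": the agent asks for two fields. The "how" is left to the renderer.
agent_output = [
    {"type": "text_field", "props": {"name": "account_holder", "label": "Account holder"}},
    {"type": "number_field", "props": {"name": "loan_amount", "label": "Loan amount"}},
]
form_html = render(agent_output)
```

Rejecting unknown component types outright, rather than guessing at a fallback, is one way a renderer could keep a generated interface inside the approved library.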

Token-Oriented Object Notation (TOON) and Data Compression

One of the most significant technical hurdles addressed in this review is the “context window” problem. Large Language Models (LLMs) are restricted in the amount of data they can process at once. Token-Oriented Object Notation (TOON) has emerged as a compact serialization format that allows complex business ontologies to be fed into an agent without exhausting its context window. By declaring the field names of repetitive structures once instead of repeating them for every record, TOON conveys the underlying business logic to the agent in fewer tokens than verbose formats such as JSON.

This compactness is vital in practice because it allows agents to reference massive frameworks, such as the Financial Industry Business Ontology (FIBO), in real time. By shrinking the data footprint of the UI instructions, developers can include more nuanced “guardrails” within the prompt. Consequently, the agent remains grounded in factual business rules while maintaining the speed necessary for a responsive user experience.
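The idea of shorthand for repetitive structures can be shown with a small sketch. This is a simplified tabular encoding written in the spirit of TOON, not an implementation of the actual specification: the `encode_table` function is hypothetical, it handles only flat records with identical fields, and it does no quoting of values that contain commas. The sample records are invented and are not taken from FIBO.

```python
import json


def encode_table(name: str, rows: list[dict]) -> str:
    """Encode a uniform list of flat records, stating the field names once."""
    fields = list(rows[0].keys())
    if any(list(row.keys()) != fields for row in rows):
        raise ValueError("all rows must share the same fields in the same order")
    # Header carries the collection name, the row count, and the field names.
    header = name + "[" + str(len(rows)) + "]{" + ",".join(fields) + "}:"
    lines = [header]
    for row in rows:
        # Each record becomes one indented line of comma-separated values.
        lines.append("  " + ",".join(str(row[f]) for f in fields))
    return "\n".join(lines)


# Illustrative records only.
instruments = [
    {"id": 1, "type": "Bond", "currency": "USD"},
    {"id": 2, "type": "Equity", "currency": "EUR"},
    {"id": 3, "type": "Loan", "currency": "USD"},
]

compact = encode_table("instruments", instruments)
verbose = json.dumps({"instruments": instruments})

print(compact)
print(len(compact), "characters vs", len(verbose), "characters as JSON")
```

Character counts are only a rough proxy for token counts, which depend on the model's tokenizer, but the saving comes from the same place: the keys appear once in the header instead of once per record.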

Dynamic Rendering and AG-UI Standards

The stability of these dynamic systems is maintained through AG-UI standards, which dictate how loosely coupled schemas interact with a front-end shell. These standards ensure that when an agent generates a button or a slider, the “state” of that interaction is correctly communicated back to the AI. For instance, if a user adjusts a loan amount on a dynamically rendered slider, the AG-UI protocol ensures the agent perceives that change and updates its reasoning accordingly.

This bidirectional communication is what separates a truly agentic UI from a simple template. By maintaining a constant loop of interaction and interpretation, the system creates a “living” document. The agent is not just showing a screen; it is monitoring how the human interacts with that screen to refine its next set of actions. This creates a level of consistency that was previously out of reach in generative systems, where the “hallucination” of UI elements often led to broken links or non-functional buttons.
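The loan slider example can be sketched as a small event loop. The sketch does not use the real AG-UI event types or transport: `StateChange`, `LoanAgent`, the component identifiers, and the simple-interest calculation are all assumptions chosen to keep the example self-contained. It shows only the shape of the loop, in which a user action flows to the agent and the agent answers with an interface update.

```python
from dataclasses import dataclass, field


@dataclass
class StateChange:
    """Hypothetical event the front-end shell sends when a widget changes."""
    component_id: str
    value: float


@dataclass
class LoanAgent:
    """Toy stand-in for the agent: keeps shared state and re-derives a result."""
    annual_rate: float = 0.06
    years: int = 5
    state: dict = field(default_factory=dict)

    def handle(self, event: StateChange) -> dict:
        # Record what the user did, then recompute anything that depends on it.
        self.state[event.component_id] = event.value
        principal = self.state.get("loan_amount", 0.0)
        total_interest = principal * self.annual_rate * self.years  # simple interest
        # The reply is a UI update the shell would render next to the slider.
        return {
            "component_id": "interest_summary",
            "text": f"Estimated interest: {total_interest:.2f}",
        }


agent = LoanAgent()
# The user drags the slider twice; each change is reported back to the agent.
first_update = agent.handle(StateChange("loan_amount", 10000.0))
second_update = agent.handle(StateChange("loan_amount", 20000.0))
print(first_update["text"])
print(second_update["text"])
```

In a real deployment the agent's reply would come from model reasoning rather than a fixed formula. The sketch only illustrates that the agent's view of the state stays in step with what the user sees.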

Emerging Trends in AI-Driven Front-End Development

The current trajectory of the industry suggests a move toward “zero-code” front-ends where the primary role of a developer is to curate a library of atomic components. Rather than building pages, teams are now focusing on building the “vocabulary” that an agent uses to express itself. Moreover, as models become more adept at understanding visual hierarchy, we are seeing a shift where agents can self-correct UI layouts based on user frustration or accessibility needs detected through interaction patterns.

Real-World Applications and Industrial Deployment

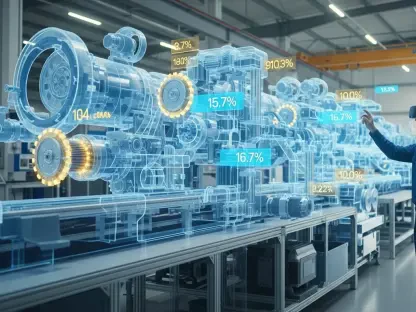

In the financial sector, these patterns are already transforming how complex regulatory filings are handled. An agent can ingest a new compliance mandate, identify the missing data points in a client’s profile, and generate an onboarding form tailored to gather exactly that information. This removes the need for a manual update to the core banking software. Similarly, in healthcare, agentic UIs are being used to synthesize patient data into interactive summaries that allow doctors to manipulate variables and see potential treatment outcomes in real time.
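The onboarding workflow described here reduces to a gap analysis followed by form generation. The following sketch assumes the mandate has already been distilled into a list of required field names. That list, the client profile, and the helper names `missing_fields` and `build_form_spec` are hypothetical and serve only to illustrate the flow.

```python
def missing_fields(required: list[str], profile: dict) -> list[str]:
    """Return the required fields that are absent or empty in the profile."""
    return [name for name in required if profile.get(name) in (None, "")]


def build_form_spec(required: list[str], profile: dict) -> list[dict]:
    """Produce one form-field instruction per missing data point."""
    return [
        {
            "type": "text_field",
            "props": {"name": name, "label": name.replace("_", " ").capitalize()},
        }
        for name in missing_fields(required, profile)
    ]


# Hypothetical requirements extracted from a new compliance mandate.
required_by_mandate = ["legal_name", "tax_id", "beneficial_owner"]
# Hypothetical client record with one empty and one absent field.
client_profile = {"legal_name": "Acme Ltd", "tax_id": ""}

form_spec = build_form_spec(required_by_mandate, client_profile)
for field_spec in form_spec:
    print(field_spec["props"]["label"])
```

Only the gaps become form fields, so the client is not asked again for data the institution already holds.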

Technical Hurdles and Adoption Barriers

Despite the promise, significant barriers remain, particularly regarding the predictability of generated interfaces. Many organizations are hesitant to allow an AI to “decide” what a user sees, fearing that a lack of visual consistency could lead to user error or security vulnerabilities. Furthermore, the latency involved in generating complex schemas can sometimes exceed the patience of a human user. Current efforts are focused on pre-caching common UI patterns to mitigate these delays and ensure a more stable experience.
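Pre-caching can be sketched as a small least-recently-used cache placed in front of the slow schema generator. The `SchemaCache` class, the intent names, and the `generate_schema` stand-in are assumptions for illustration. A production system would also need a policy for invalidating cached schemas when business rules change.

```python
from collections import OrderedDict
from typing import Callable


class SchemaCache:
    """Least-recently-used cache in front of an expensive schema generator."""

    def __init__(self, generate: Callable[[str], dict], max_entries: int = 128):
        self._generate = generate
        self._max_entries = max_entries
        self._entries: OrderedDict[str, dict] = OrderedDict()
        self.misses = 0  # number of times the slow generator had to run

    def warm(self, intents: list[str]) -> None:
        """Generate schemas for common intents before any user asks for them."""
        for intent in intents:
            self.get(intent)

    def get(self, intent: str) -> dict:
        if intent in self._entries:
            self._entries.move_to_end(intent)  # mark as recently used
            return self._entries[intent]
        self.misses += 1
        schema = self._generate(intent)
        self._entries[intent] = schema
        if len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)  # evict the least recently used
        return schema


def generate_schema(intent: str) -> dict:
    # Stand-in for a slow model call that produces a UI schema.
    return {"intent": intent, "components": ["text_field"]}


cache = SchemaCache(generate_schema, max_entries=2)
cache.warm(["open_account", "loan_application"])  # two generator calls up front
cached = cache.get("loan_application")            # served from the cache
fresh = cache.get("close_account")                # uncommon intent: generated on demand
print(cache.misses)
```

The trade-off is that a cached schema reflects the agent's reasoning at warm-up time, so caching fits best for workflows whose structure rarely changes.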

Future Outlook and the Shift in Design Roles

The future of this technology points toward a “single pane of glass” philosophy where the traditional browser disappears in favor of an ambient agentic layer. Design roles will likely move away from pixel-pushing and toward “logic orchestration,” where the primary task is defining the rules and constraints within which an agent operates. This transition will require a new generation of designers who are as comfortable with JSON schemas and ontologies as they are with color theory and typography.

Conclusion and Assessment of Agentic UI Patterns

The transition toward Agentic User Interface patterns represents a fundamental rethinking of how humans and machines collaborate within professional environments. By moving away from rigid, pre-defined screens and toward dynamic, intent-driven rendering, organizations gain the ability to respond to market shifts with unprecedented speed. Frameworks like A2UI and TOON show that technical limitations regarding context and state management can be overcome through intelligent architectural choices.

Moving forward, the focus should shift toward establishing more robust cross-industry standards to ensure that agentic systems remain interoperable and secure. Organizations that adopt these patterns early are better positioned to leverage the full cognitive potential of AI, turning the user interface from a static barrier into a strategic asset. The next phase of development will likely involve refining the emotional intelligence of these interfaces, ensuring they not only serve functional needs but also adapt to the specific workflow habits of individual users.