The fleeting nature of modern digital interactions is fundamentally changing as the industry pivots from disposable chat sessions toward a new standard of cognitive endurance. For years, the primary limitation of large language models involved a persistent “amnesia” that forced users to re-establish context every time a new window opened. This fragmented experience slowed productivity and prevented artificial intelligence from becoming a true partner in long-term projects. However, the recent open-source release of the Always On Memory Agent by Google signals a decisive break from this session-based past. By providing a framework for agents that remember and evolve, the tech giant is setting the stage for a future where digital assistants maintain a continuous, growing understanding of their specific operational environment.

This project, spearheaded by Shubham Saboo and hosted on the Google Cloud Platform GitHub repository, arrives at a critical juncture in the 2026 AI landscape. Releasing it under the permissive MIT License suggests a strategic effort to democratize persistent memory, allowing commercial developers to integrate these capabilities without the restrictive overhead of proprietary silos. The core point is simple: as the cost of intelligence continues to drop, the value of an agent no longer lies solely in its reasoning capability, but in its ability to manage its own cognitive history. This transition marks the beginning of an era where agents function less like search engines and more like seasoned colleagues who possess a deep, cumulative knowledge of a company’s unique workflows and history.

Moving Beyond the Chatbox: The Rise of Persistent AI

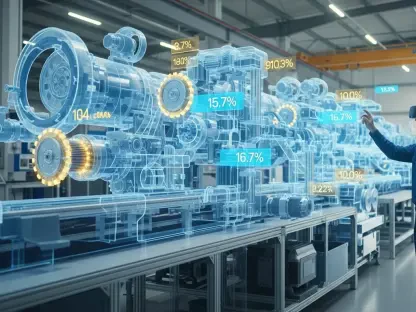

The era of “forgetful” AI is drawing to a close as the focus of development shifts from isolated chat sessions to autonomous, long-term persistence. While traditional models treat every interaction as a fresh start, the Always On Memory Agent introduces a system designed to remember, learn, and evolve over time. This shift is necessary because the industry has reached the limits of what can be achieved through clever prompting alone. Modern workflows require agents that can track a project from its initial brainstorming phase through to final execution, maintaining a coherent narrative across weeks or even months of background processing.

By open-sourcing this agent, Google is offering developers a blueprint for systems that function as long-term digital collaborators. These agents do not merely wait for a user to type a query; they inhabit a continuous state of awareness. They are designed to operate in the background, observing data streams and updating their internal understanding of the world without constant human intervention. This persistent state allows the AI to develop a more nuanced “personality” and a more accurate “memory” of user preferences, which significantly reduces the friction typically associated with onboarding an automated assistant into a complex professional environment.

The Evolution of AI Memory and Contextual Awareness

The push for persistent memory is driven by the limitations of current AI architectures, which often struggle to maintain context across multiple days or projects. Many developers have encountered the “RAG Bottleneck,” where retrieval-augmented generation systems become bogged down by the sheer volume of unstructured data they must index and query. This traditional approach often results in fragmented retrieval, where the AI finds specific facts but misses the broader context of how those facts relate to a long-term goal. Consequently, the industry is moving toward model-centric memory management, where the LLM itself takes a more active role in determining what is worth remembering and how that information should be synthesized.

The shift from session-based interactions to autonomous persistence represents a fundamental change in the digital labor model. Instead of an assistant that starts with a blank slate every morning, the Always On Memory Agent maintains a continuous thread of thought. The MIT License accelerates this evolution by lowering the barrier for startups and established enterprises to experiment with persistent architectures. With the intellectual property hurdles removed, the ecosystem can rapidly iterate on memory management strategies, leading to faster commercial adoption and a more competitive market for specialized agents that cater to niche industries such as legal discovery or medical research.

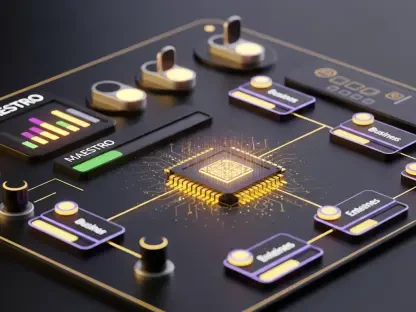

Technical Pillars: ADK and the Efficiency of Gemini 3.1 Flash-Lite

This project leverages a sophisticated tech stack designed to balance high-level reasoning with the economic realities of background processing. At its core, the system utilizes the Agent Development Kit (ADK), a framework designed for model-agnostic deployment. The ADK allows developers to build agents that are not tied to a single infrastructure, making it easier to scale persistent workflows across different cloud environments or local setups. This flexibility is essential for businesses that need to manage data residency requirements while still taking advantage of the latest breakthroughs in model performance and efficiency.

Gemini 3.1 Flash-Lite serves as the engine for continuous memory ingestion, balancing speed against cost. Its reported pricing of $0.25 per million input tokens and $1.50 per million output tokens makes “always on” operation financially practical for continuous background use. The model is also reported to be 2.5 times faster than its predecessors in time-to-first-token measurements, which helps background memory consolidation keep pace with real-time data ingestion. Furthermore, its high scores on benchmarks such as GPQA Diamond and MMMU Pro indicate that it has the reasoning depth required to synthesize complex, multimodal information into useful long-term memories.
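To make the pricing concrete, the arithmetic can be sketched as follows. The per-token prices and the 30-minute cadence come from the article; the token counts per cycle are purely hypothetical and chosen only for illustration.

```python
# Prices quoted in the article (USD per million tokens).
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for one batch of tokens."""
    input_cost = (input_tokens / 1_000_000) * INPUT_PRICE_PER_M
    output_cost = (output_tokens / 1_000_000) * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical workload: one consolidation cycle every 30 minutes (48 per day),
# each reading 20,000 tokens and writing 2,000 tokens. These sizes are assumptions.
CYCLES_PER_DAY = 48
daily_cost = CYCLES_PER_DAY * estimate_cost(20_000, 2_000)
print(f"Estimated daily cost: ${daily_cost:.2f}")
```

Under these assumed volumes the daily bill stays well under a dollar, which is the sense in which continuous background processing becomes affordable. Real workloads that ingest video or audio would use far more tokens.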

Architectural Innovation: Replacing Vector Databases with LLM Reasoning

In a departure from industry standards, this agent ditches traditional vector databases in favor of a “read, think, and write” cycle. While vector databases rely on mathematical embeddings and similarity searches, this agent acts as its own librarian using a structured SQLite backend. This approach moves away from the complexity of maintaining massive indices and instead relies on the model’s ability to categorize and store information in a human-readable, structured format. By using SQL, the agent can perform more precise queries and maintain a clearer record of its internal knowledge, which simplifies the debugging process for developers who need to understand why an agent “remembers” a particular piece of information.
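As a rough illustration of what a structured, human-readable memory store can look like, here is a minimal sketch using Python's built-in `sqlite3` module. The table name, columns, and helper functions are hypothetical and are not taken from the project's actual schema.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical schema; the real project's tables may look different.
SCHEMA = """
CREATE TABLE IF NOT EXISTS memories (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    topic TEXT NOT NULL,
    summary TEXT NOT NULL,
    source TEXT NOT NULL,
    created_at TEXT NOT NULL
)
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the memory store and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def remember(conn: sqlite3.Connection, topic: str, summary: str, source: str) -> int:
    """Store one memory with its provenance and return its row id."""
    cur = conn.execute(
        "INSERT INTO memories (topic, summary, source, created_at) VALUES (?, ?, ?, ?)",
        (topic, summary, source, datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()
    return cur.lastrowid


def recall(conn: sqlite3.Connection, topic: str) -> list[tuple[str, str]]:
    """Return (summary, source) pairs for a topic, oldest first."""
    rows = conn.execute(
        "SELECT summary, source FROM memories WHERE topic = ? ORDER BY id",
        (topic,),
    )
    return rows.fetchall()


conn = open_store()
remember(conn, "billing", "Customer prefers quarterly invoices.", "email.pdf")
remember(conn, "billing", "Invoices go to the finance alias.", "call-notes.txt")
remember(conn, "roadmap", "Launch is planned for Q3.", "planning.mp4")
for summary, source in recall(conn, "billing"):
    print(f"{summary} (from {source})")
```

Because every row carries a `source` column, a developer can answer the question "why does the agent remember this?" with an ordinary SQL query rather than by inspecting embeddings.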

The system employs a multi-agent structure to handle the ingestion of various data types, including video, audio, and PDFs. These sub-agents act as specialized processors that feed raw data into the central memory store. Every 30 minutes, a consolidation cycle triggers, during which the agent reviews its recent inputs, synthesizes them, and cleans its own memory. The process mimics human sleep-based memory consolidation: the AI filters out noise and strengthens the connections between related concepts. This design is intended to reduce the “hallucination surface” by ensuring that the agent’s memory is constantly being audited and reorganized by the model’s own reasoning capabilities.

Enterprise Challenges: Governance, Hallucinations, and Safety

While the “no-database” approach simplifies development, it introduces significant questions regarding how businesses can control an agent that “thinks” in the background. The risk of “dreaming,” where the agent creates false associations during the memory consolidation process, remains a primary concern for enterprise deployment. Without strict deterministic boundaries, a persistent agent might inadvertently combine unrelated facts into a misleading narrative. Managing “drift and loops” in long-term recursive memory processing is another hurdle, as the agent may become overly focused on its own synthesized conclusions rather than staying grounded in the original source data.

Security considerations for non-session-bound data retention are equally critical. When an agent possesses a long-term memory, it becomes a high-value target for data breaches, necessitating a more robust framework for auditing and provenance. Enterprises require industrial-strength features that define clear policy boundaries for what the agent is allowed to remember and for how long. The lack of standardized protocols for “forgetting” sensitive information poses a legal risk, especially in jurisdictions with strict data privacy laws. Consequently, developers must wrap these autonomous memory systems in additional layers of governance to ensure that the AI remains a safe and compliant tool within the corporate structure.

Practical Implementation: Building and Scaling Persistent Workflows

Developers looking to implement this architecture must weigh the benefits of a lighter stack against the requirements of large-scale data environments. For many applications, deploying the agent via Streamlit and local HTTP APIs provides a fast and accessible path to production. However, as the volume of data grows, the tradeoff between LLM-driven reasoning and explicit indexing becomes more apparent. While the current model excels at managing structured SQLite memory, extremely large datasets may still require a hybrid approach that combines the reasoning power of the LLM with the raw efficiency of traditional RAG systems to maintain low latency.
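The hybrid tradeoff can be sketched as a simple routing rule: while the store is small, hand every memory to the model; once it grows past a budget, narrow the candidates with an explicit filter first. Everything here is an assumption for illustration, including the `MAX_ROWS_FOR_MODEL` budget and the use of a SQL `LIKE` filter as a stand-in for a real retrieval index.

```python
import sqlite3

# Hypothetical budget: how many memory rows we hand to the model at once.
MAX_ROWS_FOR_MODEL = 3


def candidate_memories(
    conn: sqlite3.Connection,
    query_terms: list[str],
    max_rows: int = MAX_ROWS_FOR_MODEL,
) -> list[str]:
    """Return the memory summaries the model should read for this query."""
    total = conn.execute("SELECT COUNT(*) FROM memories").fetchone()[0]
    if total <= max_rows or not query_terms:
        # Small store (or nothing to filter on): let the model reason over
        # the first rows directly, capped at the budget.
        rows = conn.execute(
            "SELECT summary FROM memories ORDER BY id LIMIT ?", (max_rows,)
        )
        return [r[0] for r in rows]
    # Large store: pre-filter explicitly before involving the model.
    clauses = " OR ".join("summary LIKE ?" for _ in query_terms)
    params = [f"%{term}%" for term in query_terms]
    sql = f"SELECT summary FROM memories WHERE {clauses} ORDER BY id LIMIT ?"
    return [r[0] for r in conn.execute(sql, params + [max_rows])]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE memories (id INTEGER PRIMARY KEY, summary TEXT)")
conn.executemany(
    "INSERT INTO memories (summary) VALUES (?)",
    [
        ("Invoice cycle is quarterly.",),
        ("Launch is planned for Q3.",),
        ("Invoices go to the finance alias.",),
        ("Team standup is at 10am.",),
        ("Legal review takes two weeks.",),
    ],
)
hits = candidate_memories(conn, ["invoice"])
print(hits)
```

In a larger deployment the `LIKE` filter would likely be replaced by a full-text or vector index, with the model still doing the final reasoning over the shortlisted rows.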

The transition to persistent AI demands that engineers rethink the very foundation of how software interacts with human knowledge. The Always On Memory Agent provides scaffolding for moving beyond the limitations of transient sessions. By treating memory as a continuous, managed resource, teams can reduce the cognitive load on human users. The project suggests that a model-centric approach to memory, when combined with an efficient model and smart consolidation cycles, offers a viable path forward. The next frontier of artificial intelligence is not just about better reasoning, but about building a digital history that is as reliable and enduring as a human’s own. That shift should encourage businesses to adopt more flexible frameworks for wrapping autonomous agents in enterprise-grade compliance guardrails, so that the technology remains both powerful and predictable.