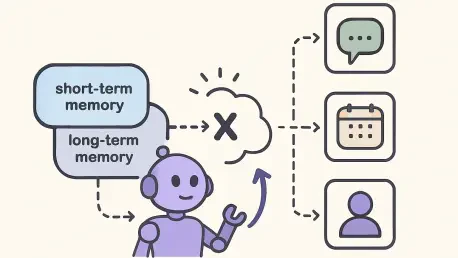

The rapid transition from isolated, single-turn chatbot interactions to sophisticated, persistent digital assistants has fundamentally changed the expectations for Artificial Intelligence performance. While early models were celebrated for their ability to answer immediate questions, today’s users demand agents that can recall past preferences, maintain long-term project contexts, and exhibit a sense of continuity over weeks or even months of engagement. However, as these digital companions become more integrated into daily workflows, they encounter a significant technical barrier known as the memory management bottleneck. This issue arises because standard Large Language Models do not possess an innate “biological” memory; instead, they rely on external systems to feed them relevant snippets of past conversations. When these systems are poorly optimized, the resulting experience is often marred by high costs, slow response times, and a frustrating lack of precision that undermines the very utility of the AI.

The Limitations of Traditional RAG in Dialogue

Identifying the Failure Points of Flat Retrieval

The industry has largely relied on Retrieval-Augmented Generation, or RAG, to provide AI models with access to external information, yet this method frequently falters when applied to the unique structure of human conversation. Most RAG systems operate on a flat retrieval architecture, where data is treated as a collection of independent documents stored in a vector database. In a traditional corporate setting, such as searching through a thousand-page technical manual, this works well because the individual sections of the manual are usually distinct and well-defined. However, human dialogue is “temporally entangled,” meaning that ideas are not isolated but are instead spread across multiple messages, often repeating and evolving over time. When an AI agent attempts to use standard RAG to recall a conversation, it often retrieves dozens of nearly identical snippets because the user discussed the same topic multiple times. This results in a massive influx of redundant data that clogs the model’s limited processing space.

This redundancy creates a phenomenon known as context bloat, which directly impacts the economic and functional viability of AI deployments. When an agent is forced to ingest thousands of unnecessary tokens (the sub-word units in which a model reads text and by which API usage is typically billed), the cost per query spirals upward without providing any additional value to the end user. Beyond the financial implications, there is a clear cognitive tax on the model itself; Large Language Models are prone to “losing the thread” when their context windows are filled with noisy, repetitive information. If a system retrieves five different versions of a user explaining their dietary preferences but misses a single, unique mention of a specific allergy located elsewhere in the history, the agent may provide a dangerous or incorrect response. The inability of flat RAG to distinguish between a “new fact” and a “repeated sentiment” remains one of the most pressing hurdles for developers aiming to build reliable, high-stakes AI assistants.

Resolving Semantic Overlap and Redundancy

To move past these limitations, it is necessary to reconsider how conversational data is indexed and prepared for retrieval before it ever reaches the generative model. Traditional vector search identifies information based on mathematical similarity, but similarity does not always equal utility; two sentences might be mathematically similar but only one of them contains the specific nuance required to solve a problem. In a multi-session environment, the accumulation of “semantic overlap” acts like a thick fog, making it difficult for the retriever to find the specific “lighthouse” of information needed for a query. Because standard systems lack a mechanism to filter out these echoes, they often prioritize the most frequently mentioned topics rather than the most relevant ones. This bias toward frequency over relevance is a core defect in current memory architectures that leads to a degradation of the user experience as the conversation history grows longer and more complex.
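The frequency bias described above can be reproduced with a toy example. The sketch below is not xMemory's method: it uses a bag-of-words vector as a stand-in for a real embedding model, and the function names, the sample history, and the `max_overlap` threshold are all illustrative assumptions. It contrasts plain top-k retrieval with a greedy filter that skips candidates too similar to ones already kept.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def flat_top_k(query: str, messages: list[str], k: int) -> list[str]:
    """Flat retrieval: rank every message by similarity and keep the top k."""
    q = embed(query)
    return sorted(messages, key=lambda m: cosine(q, embed(m)), reverse=True)[:k]


def deduplicated_top_k(
    query: str, messages: list[str], k: int, max_overlap: float = 0.8
) -> list[str]:
    """Same ranking, but skip candidates that echo an already kept message."""
    q = embed(query)
    ranked = sorted(messages, key=lambda m: cosine(q, embed(m)), reverse=True)
    kept: list[str] = []
    for message in ranked:
        if cosine(q, embed(message)) == 0.0:
            break  # the remaining messages share no words with the query
        if all(cosine(embed(message), embed(other)) < max_overlap for other in kept):
            kept.append(message)
        if len(kept) == k:
            break
    return kept


# Invented conversation history: one preference repeated three times,
# one unique allergy mention, and one unrelated request.
HISTORY = [
    "I prefer vegetarian food",
    "I prefer vegetarian food please",
    "remember I prefer vegetarian food",
    "I have a peanut allergy",
    "book a flight to Lisbon",
]
QUERY = "what food does the user prefer given any allergy"
```

With this invented history, `flat_top_k(QUERY, HISTORY, 3)` spends all three slots on restatements of the vegetarian preference, while `deduplicated_top_k(QUERY, HISTORY, 3)` keeps one copy of the preference and still has room for the allergy message. A greedy filter like this only treats the symptom at query time; the hierarchical approach discussed later moves the de-duplication to write time instead.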

Furthermore, the linguistic nature of human interaction—characterized by the use of pronouns like “it” or “that” and the omission of previously established context—makes raw message retrieval inherently flawed. A single retrieved message such as “I think that’s a great idea” is useless without the preceding three messages that define what “that” refers to. Flat RAG systems often attempt to solve this by retrieving “context windows” of surrounding messages, but this only exacerbates the bloat problem by pulling in even more irrelevant text. There is a fundamental need for a system that can “disentangle” the core knowledge from the conversational fluff, transforming a messy stream of consciousness into a structured repository of distinct, actionable facts. Without such a transformation, AI agents will remain tethered to short-term interactions, unable to scale their intelligence alongside the depth of their relationships with human users.

Architecture and Strategic Retrieval

Implementing a Four-Level Hierarchical Structure

The xMemory framework addresses the inherent messiness of human dialogue by introducing a sophisticated, four-level hierarchical structure that organizes information from the granular to the abstract. At the foundational level are the Raw Messages, which represent the verbatim transcript of the interaction; while these are necessary for high-fidelity recall, they are rarely the most efficient way to store knowledge. To add structure, the framework groups these messages into Episodes, which are time-bound blocks representing a specific interaction or event. This allows the system to maintain a chronological anchor, ensuring that the AI understands when a specific piece of information was relevant. By moving away from a flat list and toward an episodic structure, xMemory mimics the way human memory often categorizes experiences into specific “scenes” or “moments,” making the data much more navigable for automated search algorithms.

The true intelligence of the xMemory architecture, however, lies in its upper two layers: Semantics and Themes. In the Semantics layer, the system performs a distillation process, extracting standalone, reusable facts from the episodic data and discarding the repetitive conversational filler. For instance, if a user mentions several times during a week that they are training for a marathon, the semantic layer stores a single, clean fact: “The user is currently training for a marathon.” These facts are then aggregated into the highest level, the Themes layer, which serves as a conceptual map of the user’s history. Themes might include categories like “Fitness Goals,” “Professional Projects,” or “Personal Preferences.” This top-down approach allows the AI to navigate to a specific area of knowledge quickly and accurately. Instead of searching through every message ever sent, the system identifies the relevant theme first, significantly narrowing the search space and ensuring that the retrieved information is both concise and highly relevant to the current query.
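As a rough illustration of the four levels, the sketch below models them as plain Python dataclasses and picks a theme before looking at any facts. The class names, field names, and the word-overlap scoring in `select_theme` are assumptions made for this example; the article does not specify xMemory's actual schema or how it matches a query to a theme. The sample data is invented.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


@dataclass
class RawMessage:  # Level 1: verbatim transcript line
    message_id: int
    text: str


@dataclass
class Episode:  # Level 2: time-bound block of messages
    episode_id: int
    started_at: str  # ISO date, kept as a string for simplicity
    messages: list[RawMessage] = field(default_factory=list)


@dataclass
class SemanticFact:  # Level 3: standalone, de-duplicated fact
    text: str
    source_episode_ids: list[int] = field(default_factory=list)


@dataclass
class Theme:  # Level 4: conceptual grouping of facts
    name: str
    facts: list[SemanticFact] = field(default_factory=list)


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def select_theme(query: str, themes: list[Theme]) -> Theme:
    """Pick the theme whose name and facts share the most words with the query."""
    q = words(query)

    def overlap(theme: Theme) -> int:
        vocab = words(theme.name)
        for fact in theme.facts:
            vocab |= words(fact.text)
        return len(q & vocab)

    return max(themes, key=overlap)


# Three episodes mention the marathon; the semantic layer stores it once.
EPISODES = [
    Episode(i, f"2025-01-0{i}", [RawMessage(i, "Still training for the marathon!")])
    for i in (1, 2, 3)
]
THEMES = [
    Theme(
        "Fitness Goals",
        [SemanticFact("The user is currently training for a marathon.", [1, 2, 3])],
    ),
    Theme(
        "Professional Projects",
        [SemanticFact("The user is migrating a billing service to Go.", [4])],
    ),
]
```

Calling `select_theme("How is my marathon training going?", THEMES)` returns the "Fitness Goals" theme, whose single fact points back to the three episodes it was distilled from. Keeping `source_episode_ids` on each fact is one way to preserve a path down to the episodic and raw levels when more detail is needed.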

Utilizing Uncertainty Gating for Efficiency

One of the most innovative features of xMemory is its use of Uncertainty Gating to manage the trade-off between speed and detail during the retrieval process. In traditional systems, the retriever typically returns a fixed number of results (a fixed top-k), regardless of whether the query is simple or complex. xMemory, by contrast, employs a “lazy” retrieval strategy that starts at the most abstract level of the hierarchy. When a user asks a question, the system first attempts to generate a response using only the high-level facts stored in the semantic and theme layers. This creates a “knowledge skeleton” that is often sufficient for general inquiries, allowing the system to bypass the heavy lifting of searching through thousands of raw messages. Because these high-level facts are pre-distilled and diverse, the system can cover multiple topics without redundant repetition, drastically reducing the number of tokens sent to the LLM.

However, if the system determines that the high-level information is insufficient to provide a confident answer, it triggers the Uncertainty Gating mechanism to “drill down” into the more granular levels of the hierarchy. This decision is based on a calculated confidence score; if the semantic facts are too vague or if the query requires specific verbatim evidence, the gate opens, and the system retrieves the corresponding Episodes or Raw Messages. This ensures that the AI only pays the “token tax” for detailed information when it is absolutely necessary for accuracy. By treating semantic similarity merely as a signal for potential candidates and using uncertainty as the ultimate decision signal for retrieval depth, xMemory balances the need for precision with the requirement for operational efficiency. This selective retrieval process not only speeds up the AI’s response time but also preserves the model’s reasoning capabilities by keeping the context window focused on the most critical data points.
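The control flow of such a gate can be sketched in a few lines. This is a minimal illustration under stated assumptions, not xMemory's implementation: the article does not say how the confidence score is calculated, so the sketch plugs in a toy `keyword_coverage` function, and the `gated_retrieve` name, the 0.75 threshold, the stopword list, and the sample data are all hypothetical.

```python
import re
from typing import Callable

# Tiny stopword list for the toy scorer (an assumption, not a standard list).
STOPWORDS = {"a", "an", "the", "is", "are", "for", "on", "of", "which", "what", "user"}


def tokens(text: str) -> list[str]:
    return re.findall(r"[a-z]+", text.lower())


def keyword_coverage(query: str, context: list[str]) -> float:
    """Toy confidence: share of non-stopword query terms found in the context."""
    keywords = {t for t in tokens(query) if t not in STOPWORDS}
    if not keywords:
        return 1.0
    seen = {t for snippet in context for t in tokens(snippet)}
    return len(keywords & seen) / len(keywords)


def gated_retrieve(
    query: str,
    levels: list[tuple[str, list[str]]],
    confidence_fn: Callable[[str, list[str]], float],
    threshold: float = 0.75,
) -> tuple[list[str], list[str]]:
    """Walk from abstract to granular levels, stopping once confidence is high enough."""
    used: list[str] = []
    context: list[str] = []
    for name, snippets in levels:
        used.append(name)
        context.extend(snippets)
        if confidence_fn(query, context) >= threshold:
            break  # the gate stays closed for the deeper, more expensive levels
    return used, context


# Invented memory contents, ordered from most abstract to most granular.
LEVELS = [
    ("semantic", ["The user is training for a marathon."]),
    ("episode", ["The user planned weekly long runs for marathon training."]),
    ("raw", ["My long runs are on Sunday, the one day I have free."]),
]
```

With this data, `gated_retrieve("Is the user training for a marathon?", LEVELS, keyword_coverage)` stops at the semantic level, while `gated_retrieve("Which day are the long runs?", LEVELS, keyword_coverage)` drills all the way down to the raw message, because only the verbatim text mentions a day. In a real system the confidence function would more plausibly come from the model itself, but the early-exit loop would look much the same.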

Economic Impact and Future Implementation

Balancing Performance Gains with the Write Tax

The implementation of xMemory offers a compelling economic proposition for enterprises, as it significantly shifts the computational burden away from the user-facing interaction. In standard RAG deployments, the primary cost is the “read tax,” which occurs every time a user asks a question and the system has to process a large context window filled with retrieved documents. Experimental data from xMemory shows that by providing a cleaner, more structured context, the framework can reduce token usage by nearly 50% for long-range reasoning tasks. This leads to a direct reduction in API costs and a noticeable improvement in latency, as the generative model has less “noise” to sort through before formulating an answer. For a high-traffic application serving thousands of users, these savings are not just incremental; they represent the difference between a loss-making experimental feature and a sustainable, profitable product.

However, developers must account for what is known as the “write tax,” which represents the computational cost of maintaining the hierarchical memory structure. Unlike a simple vector database that only requires a one-time embedding of text, xMemory requires ongoing background processing to summarize episodes, extract semantic facts, and update theme categories. This involves making auxiliary LLM calls that happen behind the scenes, often as asynchronous tasks. While this increases the upfront processing cost when data is first ingested, the trade-off tends to pay off because distillation happens once per batch of new messages, whereas the savings recur on every later query that touches that history. By spending more on “background thinking” during idle times, the system ensures that the “active thinking” during a live user session is as fast and cheap as possible. This model of periodic consolidation and distillation is essential for any AI system intended to operate over a multi-year horizon, where the sheer volume of historical data would otherwise become unmanageable.
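Whether the write tax is worth paying comes down to simple arithmetic. The sketch below is a back-of-the-envelope calculation with invented token counts; it assumes, for simplicity, that write-side and read-side tokens cost the same, so the price per token cancels out. The function name and all figures are hypothetical.

```python
import math
from typing import Optional


def break_even_queries(
    write_tokens: int, read_tokens_flat: int, read_tokens_hier: int
) -> Optional[int]:
    """Queries needed before per-query read savings repay the one-off write cost.

    Returns None when the hierarchical read is not cheaper than the flat one.
    """
    saved_per_query = read_tokens_flat - read_tokens_hier
    if saved_per_query <= 0:
        return None
    return math.ceil(write_tokens / saved_per_query)


# Invented figures: distilling a history costs 20,000 tokens of background
# LLM calls; a flat read sends 8,000 tokens per query, a hierarchical read
# 4,000 (a 50% reduction, in line with the figure quoted above).
EXAMPLE = break_even_queries(
    write_tokens=20_000, read_tokens_flat=8_000, read_tokens_hier=4_000
)
```

Under these assumed numbers the write cost is repaid after five queries against the same history. If the background distillation runs on a cheaper model than the live generation, the break-even point arrives sooner; if a history is rarely queried, it may never arrive.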

Identifying Ideal Use Cases and Developer Strategies

xMemory is not a universal replacement for all data retrieval needs, but rather a specialized tool for high-persistence applications. For static knowledge bases, such as a company’s employee handbook or a software documentation library, traditional RAG remains the better fit because the source material is already structured and does not change based on user interaction. xMemory truly shines in scenarios where personalization is the primary goal. This includes long-term educational tutors that must track a student’s progress over an entire curriculum, or executive assistants that manage complex, evolving projects across multiple departments. In these contexts, the ability to “de-duplicate” months of conversation into a coherent set of themes is what allows the AI to remain useful and relevant without becoming bogged down by the weight of its own history.

For engineering teams looking to integrate these concepts into their own agentic workflows, the focus should be on the memory decomposition layer rather than just the retrieval prompts. The quality of the final output depends almost entirely on how well the system can break down a conversation into its constituent facts. Developers are encouraged to implement micro-batching for their background distillation tasks, ensuring that the “write tax” does not interfere with the responsiveness of the live agent. Furthermore, using open-source models for the distillation and categorization tasks can help mitigate the costs of the auxiliary LLM calls, reserving more powerful, expensive models for the final generation phase. By treating memory as an active, evolving component of the AI stack rather than a passive storage bin, developers can create systems that actually become more intelligent and efficient as they spend more time interacting with their users.
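One simple way to picture micro-batching is a buffer that hands messages to the distillation step only when enough have accumulated. The sketch below is an illustrative, synchronous version: the `MicroBatcher` class and its `distill` callback are hypothetical names, and in a real deployment the callback would more likely enqueue work for a background worker than run inline.

```python
from typing import Callable


class MicroBatcher:
    """Buffers incoming messages and distills them in small batches."""

    def __init__(self, distill: Callable[[list[str]], None], batch_size: int = 4):
        self._distill = distill  # e.g. a function that calls an auxiliary LLM
        self._batch_size = batch_size
        self._pending: list[str] = []

    def add(self, message: str) -> None:
        """Queue a message; trigger distillation once the batch is full."""
        self._pending.append(message)
        if len(self._pending) >= self._batch_size:
            self.flush()

    def flush(self) -> None:
        """Distill whatever is pending, e.g. at session end or during idle time."""
        if self._pending:
            batch, self._pending = self._pending, []
            self._distill(batch)


# Usage: record the batches instead of calling a model.
batches: list[list[str]] = []
batcher = MicroBatcher(distill=batches.append, batch_size=3)
for i in range(7):
    batcher.add(f"message {i}")
batcher.flush()  # pick up the final, partially filled batch
```

After this run, `batches` holds three batches of sizes 3, 3, and 1, so seven messages cost three distillation calls rather than seven. The batch size is a tuning knob: larger batches mean fewer auxiliary calls but a longer delay before new facts appear in the semantic layer.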

Moving Toward Lifecycle Management and Governance

The introduction of hierarchical memory frameworks like xMemory marks the beginning of a broader movement toward comprehensive AI lifecycle management. As agents transition into permanent roles within our digital lives, the industry is forced to move beyond the “collect everything” mentality and start making strategic decisions about what to keep and what to discard. This introduces the concept of memory decay, where an agent can intelligently determine when a piece of information is no longer relevant—for example, if a user changes their preferred programming language or moves to a new city. By using a hierarchical structure, systems can “forget” at the granular level while retaining the higher-level themes, ensuring that the AI’s understanding of the user remains current without losing the broader context of their history. This selective forgetting is a vital step toward making AI behavior more human-like and less like a static database.
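A very simple form of this decay is to retire a fact when a newer one contradicts it. The sketch below is one possible policy, not something the article attributes to xMemory: the `Fact` fields, the `record_fact` and `current_value` helpers, and the sample values are assumptions made for illustration. Retired facts are kept but marked inactive, so the history of a change survives even though the old value no longer reaches the model.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Fact:
    key: str  # what the fact is about, e.g. "preferred_language"
    value: str
    observed_on: date
    active: bool = True


def record_fact(facts: list[Fact], new: Fact) -> None:
    """Retire older active facts with the same key, then store the new one."""
    for fact in facts:
        if fact.key == new.key and fact.active and fact.observed_on <= new.observed_on:
            fact.active = False
    facts.append(new)


def current_value(facts: list[Fact], key: str) -> Optional[str]:
    """Return the most recently observed active value for a key, if any."""
    active = [f for f in facts if f.key == key and f.active]
    if not active:
        return None
    return max(active, key=lambda f: f.observed_on).value


# Invented example: the user switches their preferred programming language.
FACTS: list[Fact] = []
record_fact(FACTS, Fact("preferred_language", "Python", date(2023, 5, 1)))
record_fact(FACTS, Fact("preferred_language", "Rust", date(2024, 2, 1)))
```

Here `current_value(FACTS, "preferred_language")` returns `"Rust"`, while the earlier Python entry remains in the list with `active=False`. Real decay policies would likely also weigh how often and how recently a fact was confirmed, which this sketch does not attempt.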

Moreover, the shift toward structured memory hierarchies provides a new pathway for addressing privacy and data governance concerns in the age of persistent AI. In a flat RAG system, redacting sensitive information often requires a complete re-indexing of the database, which is both time-consuming and prone to error. With a hierarchical approach, privacy protocols can be applied at different levels; for instance, raw messages containing sensitive personal identifiers can be deleted after a set period, while the distilled, non-identifiable semantic facts are preserved for continued personalization. This allows organizations to strike a balance between providing a highly customized experience and adhering to increasingly strict data protection regulations. Ultimately, the future of AI persistence lies in these sophisticated management strategies, where the goal is not just to remember more, but to remember better, ensuring that our digital assistants remain reliable, efficient, and respectful of our privacy over the long term.
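The level-specific retention idea can be sketched as a purge that touches only the raw layer. The names below (`StoredMessage`, `purge_raw_messages`), the 14-day retention window, and the sample data are hypothetical. The sketch also assumes that the distilled facts really are free of personal identifiers, which a production system would need to verify rather than take for granted.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class StoredMessage:
    text: str
    created_at: datetime


def purge_raw_messages(
    messages: list[StoredMessage], now: datetime, retention: timedelta
) -> list[StoredMessage]:
    """Keep only raw messages younger than the retention period."""
    return [m for m in messages if now - m.created_at < retention]


# Invented data: two raw messages and one distilled fact.
NOW = datetime(2025, 1, 31)
RAW = [
    StoredMessage("My passport number is ...", datetime(2025, 1, 1)),
    StoredMessage("Let's book the Lisbon trip for May.", datetime(2025, 1, 25)),
]
SEMANTIC_FACTS = ["The user is planning a trip to Lisbon in May."]

# Only the raw layer is purged; the semantic layer is left untouched.
RAW_AFTER_PURGE = purge_raw_messages(RAW, NOW, retention=timedelta(days=14))
```

After the purge, the 30-day-old message containing the identifier is gone, the recent message remains, and `SEMANTIC_FACTS` still supports personalization. Because each level lives in its own store, the retention window can differ per level without re-indexing everything.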