The gap between a successful laboratory AI prototype and a reliable production agent often comes down to one stubborn technical hurdle: the data tier bottleneck. While large language models have grown more sophisticated, the systems that feed them remain split across specialized databases and fragile synchronization pipelines. The Oracle AI Database is a direct response to this problem, attempting to redefine the database from a passive storage layer into an active foundation for agentic systems. By converging disparate data types into a single transactional engine, it seeks to eliminate the latency and inconsistency that currently plague enterprise AI deployments.

This shift is particularly relevant as the industry moves away from simple chatbots toward autonomous agents that must make real-time decisions based on live corporate data. In the current landscape, data teams often struggle to keep vector stores in sync with relational transactional records, leading to “stale” context that can cause an AI to hallucinate or fail. Oracle addresses this by placing the AI’s memory within the same architectural boundary as the data itself. This convergence is not merely a convenience; it is a strategic move to ensure that AI-driven actions are grounded in the same atomicity and consistency that have governed mission-critical financial and operational systems for decades.

The Convergence of Data and Agentic AI

The core principle of this technology lies in the collapse of the traditional multi-database stack into a unified architectural footprint. Historically, developers were forced to use a “best-of-breed” approach, selecting a vector database for similarity searches, a relational engine for transactions, and a graph database for relationship mapping. This created a complex web of movement where data was constantly being copied and transformed. The Oracle AI Database replaces this fragmented model with a single engine designed to handle these diverse workloads simultaneously, allowing developers to query vectors alongside relational tables without the overhead of data migration.

The significance of this advancement becomes clear when considering the operational requirements of production-grade AI. Most failures in the field occur because the data used to “ground” the AI is out of sync with the actual state of the business. By embedding vector capabilities directly into the transactional engine, Oracle ensures that the moment a row is updated in a sales table, the corresponding vector representation is immediately available for the AI to reference. This eliminates the “sync lag” that has historically limited the reliability of Retrieval-Augmented Generation (RAG) and other AI architectures.
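The idea of keeping a row and its vector in step can be illustrated with a minimal sketch. It does not use Oracle's API: it assumes Python's built-in sqlite3 module as a stand-in for a converged engine, and the names toy_embed, upsert_sale, and nearest_sale are hypothetical. The point is only that the row and its embedding are written in one transaction, so a similarity search never sees one without the other.

```python
import json
import math
import sqlite3
from typing import List, Optional


def toy_embed(text: str) -> List[float]:
    """Hypothetical stand-in for an embedding model: normalised letter counts."""
    counts = [0.0] * 26
    for ch in text.lower():
        if "a" <= ch <= "z":
            counts[ord(ch) - ord("a")] += 1.0
    norm = math.sqrt(sum(c * c for c in counts)) or 1.0
    return [c / norm for c in counts]


def open_db() -> sqlite3.Connection:
    """Create an in-memory table holding both the row and its vector."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE sales ("
        "id INTEGER PRIMARY KEY, note TEXT NOT NULL, embedding TEXT NOT NULL)"
    )
    return conn


def upsert_sale(conn: sqlite3.Connection, sale_id: int, note: str) -> None:
    """Write the row and its embedding together in a single transaction."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.execute(
            "INSERT OR REPLACE INTO sales (id, note, embedding) VALUES (?, ?, ?)",
            (sale_id, note, json.dumps(toy_embed(note))),
        )


def nearest_sale(conn: sqlite3.Connection, query: str) -> Optional[int]:
    """Brute-force cosine search over the stored vectors (no index)."""
    q = toy_embed(query)
    best_id, best_score = None, -1.0
    for sale_id, emb in conn.execute("SELECT id, embedding FROM sales ORDER BY id"):
        score = sum(a * b for a, b in zip(q, json.loads(emb)))
        if score > best_score:
            best_id, best_score = sale_id, score
    return best_id
```

Calling upsert_sale and then nearest_sale on the same connection returns results that reflect the latest write, with no separate synchronization step in between. A production system would use a real embedding model and an approximate index rather than a full scan.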

Architectural Innovations and Core Components

The Unified Memory Core and ACID Compliance

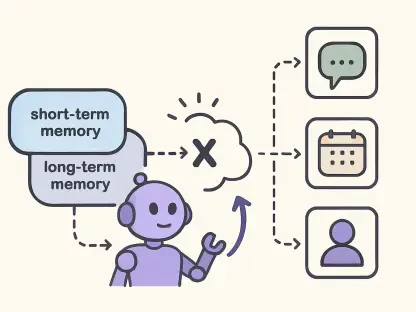

At the heart of this system is the Unified Memory Core, which allows a single engine to process vector, JSON, graph, and relational data under a shared set of rules. This is not a collection of separate modules bolted together, but a reimagined engine where every data type benefits from the ACID properties: atomicity, consistency, isolation, and durability. This means that an AI agent’s memory is treated with the same level of integrity as a bank transaction. If a query fails or a system crashes, the state of the AI’s context remains consistent, preventing the corruption of logic that often occurs in stateless agent frameworks.

Maintaining this level of consistency across all data types removes the need for the complex “glue code” that developers previously used to synchronize different systems. Moreover, by housing the memory in the same architectural space as the data, the system achieves “memory-data proximity.” This proximity cuts the network hops and data copies an agent needs to retrieve context, resulting in faster response times and a smoother user experience. It effectively turns the database into the primary “brain” for the agent, ensuring that every decision is based on the most current and secure version of the truth.
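The claim that agent memory gets the same integrity as a bank transaction can be made concrete with a small sketch. It assumes Python's built-in sqlite3 module rather than Oracle's engine, and the table names and the record_and_debit helper are hypothetical. The agent's memory note and the business update share one transaction, so a failed update also discards the note.

```python
import sqlite3


def open_db() -> sqlite3.Connection:
    """Create a tiny schema: one business table and one agent-memory table."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE accounts (
            id INTEGER PRIMARY KEY,
            balance INTEGER NOT NULL CHECK (balance >= 0)
        );
        CREATE TABLE agent_memory (
            step INTEGER PRIMARY KEY AUTOINCREMENT,
            note TEXT NOT NULL
        );
        INSERT INTO accounts (id, balance) VALUES (1, 100);
        """
    )
    conn.commit()
    return conn


def record_and_debit(conn: sqlite3.Connection, account_id: int,
                     amount: int, note: str) -> bool:
    """Store the agent's note and apply the debit atomically.

    Returns False, leaving both tables unchanged, if the debit
    would violate the CHECK constraint on the balance.
    """
    try:
        with conn:  # one transaction covers both statements
            conn.execute("INSERT INTO agent_memory (note) VALUES (?)", (note,))
            conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE id = ?",
                (amount, account_id),
            )
        return True
    except sqlite3.IntegrityError:
        return False
```

If the agent tries to debit more than the balance, the constraint violation rolls back the memory insert as well, so the agent never "remembers" an action that did not happen.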

Vectors on Ice and Lakehouse Integration

Acknowledging that modern enterprises distribute their data across various environments, Oracle introduced “Vectors on Ice.” This feature allows the database to create vector indexes on external Apache Iceberg tables without requiring the data to be moved or ingested into the Oracle environment. This is a vital bridge for organizations that utilize open data lakehouse architectures. It enables native vector searches on massive datasets residing in low-cost cloud storage, allowing an AI agent to reason across historical archives and real-time operational data in a single, unified query.

The key design choice in this integration is that indexing happens locally while the data remains remote. This “in-place” processing avoids the egress costs and security risks associated with migrating petabytes of data for AI training or inference. Furthermore, as the underlying Iceberg tables are updated by other applications, the vector indexes refresh automatically. This creates a hybrid ecosystem where the database serves as a control plane for an organization’s entire data estate, regardless of where that data physically resides.

The Autonomous AI Vector Database Service

To make these high-end capabilities accessible to a wider range of users, the system includes a “free-to-start” managed service known as the Autonomous AI Vector Database. This offering is designed to democratize access for developers who might otherwise turn to specialized, standalone vector stores. By providing a managed environment that handles patching, scaling, and tuning automatically, Oracle lowers the barrier to entry for building sophisticated RAG applications. It offers a low-friction entry point that does not compromise on the underlying enterprise-grade features.

The strategic significance of this service lies in its upgrade path. Many AI projects begin as simple experiments that only require basic vector storage, but as they evolve, they inevitably need to incorporate relational data or time-series analysis. While standalone vector databases often hit a “functional wall” at this stage, the Autonomous service allows for a seamless transition to a full-featured converged database. This “one-click” scalability ensures that architectural decisions made during the prototyping phase do not become technical debt as the application grows in complexity.

Shifting Paradigms in the AI Data Landscape

The emergence of this technology coincides with a broader shift in the AI industry from stateless frameworks to stateful management. Early AI applications were largely ephemeral, treating every interaction as an isolated event. However, as organizations deploy agents for complex tasks like supply chain optimization or customer support, the need for persistent, reliable memory has become paramount. The move toward database-driven memory management represents a fundamental change in how AI state is handled, prioritizing long-term consistency over temporary convenience.

Furthermore, we are witnessing a marked departure from the fragmented “best-of-breed” stacks that dominated the early 2020s. The industry is beginning to realize that the overhead of managing a dozen different database types outweighs the marginal performance benefits of specialized engines. The convergence trend, championed by this technology, suggests a future where the database engine is a versatile platform capable of adapting to any data shape. This consolidation simplifies the developer experience and significantly reduces the surface area for security vulnerabilities and operational errors.

Real-World Applications and Agentic Implementations

In high-concurrency industries such as finance and global logistics, real-time data accuracy is not optional; it is a prerequisite for survival. For example, a supply chain agent must be able to cross-reference real-time shipping vectors with historical relational data and graph-based network maps to reroute cargo during a disruption. The Oracle AI Database provides the low-latency environment necessary for these multi-dimensional queries to execute in milliseconds. This enables a level of automated decision-making that was previously impossible due to the lag between disparate data systems.
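The shape of such a multi-dimensional query can be sketched in a few lines. This is an illustration only, not Oracle syntax: the in-memory dictionaries ROUTES, PORTS, and PORT_VECTORS and the function reroute_candidates are hypothetical stand-ins for graph, relational, and vector data that a converged engine would hold in one place.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical data: a route graph, relational port status, and port vectors.
ROUTES: Dict[str, List[str]] = {
    "SHA": ["SIN", "LAX"], "SIN": ["RTM"], "LAX": ["RTM"], "RTM": [],
}
PORTS: Dict[str, Dict[str, bool]] = {
    "SHA": {"open": True}, "SIN": {"open": True},
    "LAX": {"open": False}, "RTM": {"open": True},
}
PORT_VECTORS: Dict[str, List[float]] = {
    "SHA": [0.0, 0.0], "SIN": [1.0, 0.0], "LAX": [0.0, 1.0], "RTM": [0.5, 0.5],
}


def reachable(graph: Dict[str, List[str]], start: str) -> Set[str]:
    """Graph step: breadth-first search for every port reachable from start."""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(start)
    return seen


def reroute_candidates(origin: str, need: List[float]) -> List[str]:
    """Combine graph reachability, a relational filter, and vector ranking."""
    # Relational step: keep only ports currently marked open.
    candidates = [p for p in reachable(ROUTES, origin) if PORTS[p]["open"]]

    # Vector step: rank by dot-product similarity to the cargo's needs.
    def score(port: str) -> float:
        return sum(a * b for a, b in zip(PORT_VECTORS[port], need))

    return sorted(candidates, key=score, reverse=True)
```

With separate systems, each of the three steps would be a round trip to a different store, and the results could reflect different points in time. Running them against one consistent snapshot is the benefit the converged model is claiming.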

Security and standardization are also addressed through the implementation of the Model Context Protocol (MCP) Server. This protocol acts as a standardized interface, allowing external AI agents to securely interact with corporate data without requiring custom integration code. Because the database enforces row-level and column-level access controls at the source, the security policies of the enterprise are automatically applied to the AI. This ensures that an agent cannot inadvertently access sensitive executive salaries or proprietary trade secrets, even if the model itself is prompted to do so by an unauthorized user.
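Enforcing access control at the source, rather than in the agent, can be sketched as follows. This is a simplified model and not the MCP Server's or Oracle's actual interface: the Policy class, the EMPLOYEES rows, and query_as are hypothetical. What it shows is that the filter runs before any data reaches the agent, so a cleverly worded prompt has nothing to leak.

```python
from dataclasses import dataclass
from typing import Any, Dict, FrozenSet, List


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-caller policy: which columns and rows are visible."""
    allowed_columns: FrozenSet[str]
    allowed_departments: FrozenSet[str]


# Hypothetical table contents.
EMPLOYEES: List[Dict[str, Any]] = [
    {"name": "Ana", "department": "sales", "salary": 90000},
    {"name": "Bo", "department": "executive", "salary": 400000},
]


def query_as(policy: Policy, rows: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Apply row-level, then column-level, filtering before returning data."""
    visible = []
    for row in rows:
        # Row-level control: skip rows outside the caller's departments.
        if row["department"] not in policy.allowed_departments:
            continue
        # Column-level control: drop columns the caller may not read.
        visible.append({k: v for k, v in row.items() if k in policy.allowed_columns})
    return visible
```

An agent holding a policy limited to the name and department columns of the sales department would receive only Ana's name and department, regardless of what the prompt asks for.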

Technical Hurdles and Market Obstacles

Despite its architectural strengths, this technology faces significant pressure from the open-source community and specialized cloud rivals. Platforms like Postgres have rapidly integrated vector capabilities, offering a familiar and highly extensible alternative for developers who prefer an open ecosystem. Meanwhile, cloud-native giants like Snowflake and Databricks are aggressively expanding their AI toolsets, leveraging their existing dominance in data warehousing to capture the agentic AI market. Oracle must prove that its converged approach offers enough unique value to justify its premium enterprise positioning.

Another significant challenge lies in the inherent complexity of managing distributed data estates. While “Vectors on Ice” provides a bridge to external tables, many organizations have data scattered across multiple cloud providers and dozens of third-party SaaS platforms. Creating a truly unified view of these siloed environments remains a daunting task. The difficulty of ensuring consistent performance and security across these boundaries could hinder the adoption of a centralized database model, especially in organizations that have already committed to a multi-cloud strategy.

The Future of Mission-Critical AI Systems

Looking ahead, the role of the database is poised to evolve from a simple repository to the primary “state manager” for autonomous agents. As memory-data proximity becomes the standard, we can expect to see agents that are more responsive, more accurate, and more capable of handling complex, long-running tasks. The integration of AI governance directly into the data tier will likely become a cornerstone of corporate policy enforcement, ensuring that AI behavior remains aligned with legal and ethical standards without requiring manual oversight at every step.

Future developments will likely focus on further reducing the friction between data storage and model execution. We may see more advanced “push-down” logic, where certain AI reasoning tasks are performed directly within the database memory to minimize data movement even further. This evolution would solidify the database’s position as the mission-critical foundation of the enterprise, transforming it into an active participant in the AI reasoning process rather than just a source of information for external models.

Summary of the Oracle AI Database Evolution

The trajectory of the Oracle AI Database suggests that the most effective way to scale enterprise AI is to dismantle the silos that separate vector logic from transactional reality. By integrating these capabilities into a single, ACID-compliant engine, the system addresses the “DevOps nightmare” of maintaining fragmented data pipelines. This consolidation provides a more secure and consistent environment for agentic systems, allowing organizations to move beyond experimental chatbots and toward autonomous agents capable of performing complex business functions with high reliability.

On balance, Oracle’s bet on a converged architecture appears well matched to the market’s need for governance and performance. While competitors offer specialized alternatives, the value of a “single version of truth” is likely to be the deciding factor for mission-critical deployments. As AI agents continue to mature, the focus will likely shift toward more deeply integrated governance and even tighter proximity between data and reasoning. If that holds, this shift points toward an industry standard in which the database acts as the anchor for intelligent corporate operations.