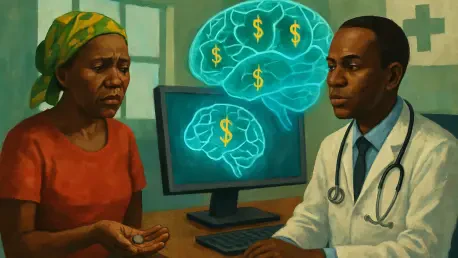

The transition from a manual health insurance system to a digital, automated framework in Kenya was supposed to herald a new era of universal coverage where no citizen would be left behind. However, the implementation of the Social Health Authority has sparked a heated debate regarding the ethics of using machine learning to determine the financial contributions of the population. While the government maintains that the new system ensures fairness by assessing wealth through proxy indicators, critics argue that the underlying algorithms are fundamentally flawed. The shift toward an automated premium assessment model has created a barrier for many who were previously able to access basic services but now find themselves locked out by a calculation they do not understand. As the digital infrastructure takes center stage in public policy, the tension between administrative efficiency and the lived reality of the nation’s most vulnerable citizens continues to intensify, raising questions about the future of equitable healthcare.

The Algorithmic Architecture of Social Health

Evaluating Household Assets Through Predictive Modeling

The core of the new healthcare funding strategy relies on a sophisticated yet controversial machine learning model designed to estimate the annual income of households that lack formal salary records. Because a significant portion of the Kenyan population works in the informal sector, the Social Health Authority utilizes a predictive tool that analyzes specific living conditions to assign a wealth score. This includes assessing the type of materials used for roofing, the availability of modern sanitation facilities, and the ownership of various household assets. By processing these disparate data points, the algorithm generates a predicted income figure, which then dictates the mandatory insurance premium a family must pay to access public medical services. The reliance on such proxies is intended to bypass the challenges of self-reporting income, yet it assumes a direct correlation between physical assets and liquid cash flow that often does not exist in reality.
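To make the mechanism concrete, here is a minimal sketch of how a proxy-based assessment of this kind might turn living conditions into a premium. It is an illustration only: the indicator names, the weights, the baseline figure, and the premium rate are all hypothetical, since the actual variables and weights used by the Social Health Authority have not been made public. A simple additive score also stands in for whatever machine learning model is actually deployed.

```python
# Illustrative sketch only. Every number and indicator name below is
# hypothetical; the real model's inputs and weights are not public.

# Assumed contribution of each asset indicator to predicted annual income.
ILLUSTRATIVE_WEIGHTS = {
    "permanent_roof": 60_000,
    "modern_sanitation": 40_000,
    "owns_television": 25_000,
    "owns_motorcycle": 50_000,
}

BASE_INCOME = 30_000   # hypothetical baseline income for any household
PREMIUM_RATE = 0.03    # hypothetical share of predicted income charged


def predict_annual_income(household: dict[str, bool]) -> int:
    """Add the weight of every indicator the household reports as present."""
    return BASE_INCOME + sum(
        weight
        for indicator, weight in ILLUSTRATIVE_WEIGHTS.items()
        if household.get(indicator, False)
    )


def annual_premium(predicted_income: int, rate: float = PREMIUM_RATE) -> int:
    """The premium is a fixed share of *predicted*, not actual, income."""
    return round(predicted_income * rate)


# A household whose only listed asset is an inherited permanent roof.
household = {"permanent_roof": True}
predicted = predict_annual_income(household)
premium = annual_premium(predicted)
print(predicted, premium)
```

Nothing in the calculation asks what the household actually earns. The roof raises the predicted income, and therefore the bill, whether or not any cash is coming in.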

The complexity of these automated assessments is compounded by the lack of transparency regarding the weights assigned to different variables within the model. For instance, a family living in a house with a permanent roof might be classified as middle-income by the algorithm, even if that roof was a one-time inheritance or built during a period of relative prosperity that has since passed. This “black box” approach to social welfare management leaves citizens with little understanding of why their premiums are set at specific levels. When the AI determines a contribution amount, it does so without considering the volatility of informal labor or the sudden economic shocks that can deplete a household’s resources. Consequently, the digital tool often reflects a static snapshot of wealth rather than the dynamic financial struggles faced by those living on the margins, leading to a disconnect between the system’s output and the user’s actual ability to pay.

Structural Biases and the Regressive Premium Gap

Independent data reconstructions and peer-reviewed analysis have uncovered a troubling trend within the current algorithmic framework, suggesting that the system is inadvertently regressive. Researchers have found that the AI consistently overestimates the earnings of the poorest deciles of the population while placing a relatively lighter financial burden on the wealthiest citizens. This bias stems from the way the model interprets asset indicators; it fails to account for the high cost of living and the minimal disposable income available to low-wage earners after meeting basic needs. As a result, many households are presented with insurance bills that exceed their total annual earnings, a demand that is impossible to meet in practice and that highlights a significant “error by design.” This algorithmic skewing means that those who need the most support from a social health fund are the ones being asked to contribute a disproportionately large share of their meager resources.
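The arithmetic behind that regressive effect can be sketched in a few lines. The figures below are invented for illustration and do not come from the published analyses. The sketch assumes a premium charged as a flat share of predicted income, then measures it against the income a household really has.

```python
# Illustrative arithmetic only: the incomes and the rate are hypothetical.

def effective_burden(actual_income: float, predicted_income: float, rate: float) -> float:
    """Return the premium as a fraction of the household's actual income."""
    premium = predicted_income * rate
    return premium / actual_income


RATE = 0.03  # hypothetical flat rate applied to predicted income

# A poor household whose income the model overestimates threefold.
poor = effective_burden(actual_income=40_000, predicted_income=120_000, rate=RATE)

# A wealthy household whose income the model underestimates.
wealthy = effective_burden(actual_income=2_000_000, predicted_income=1_500_000, rate=RATE)

print(f"poor household burden:    {poor:.2%}")
print(f"wealthy household burden: {wealthy:.2%}")
```

Under these assumed numbers, a nominally flat rate takes a share of the poor household's real income several times larger than the share taken from the wealthy one. The gap comes entirely from the direction of the prediction error.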

The persistence of these errors, even after internal reviews suggested adjustments, points to a deeper systemic issue in how automated governance is being deployed. When the logic of the algorithm is prioritized over empirical economic data, the result is a digital divide that translates into a life-or-death health crisis. The wealthy, who possess formal documentation and stable assets, find the system easy to navigate and relatively affordable. In contrast, the rural poor and urban slum dwellers face a rigid machine that does not recognize their precarious status. This creates a scenario where the very technology meant to broaden access serves as a gatekeeper that excludes those it was designed to protect. The failure to mitigate these biases suggests that the rush toward modernization may have overlooked the essential requirement of social equity, leaving a large segment of the population in a state of medical insolvency.

The Human Consequences of Automated Governance

Real-World Impacts on Medical Access and Survival

For many Kenyan families, the introduction of the automated premium system has transformed healthcare from a right into an unattainable luxury. Community health promoters report a growing number of cases where individuals have been forced to abandon chronic treatments, such as dialysis or chemotherapy, because they cannot meet the AI-determined insurance costs. The rigid nature of the digital platform means that if a payment is not made in full, the citizen is often blocked from the entire healthcare network, regardless of their previous contributions or medical history. This binary “all or nothing” approach ignores the nuances of poverty, where a family might have enough for food one month but nothing left for a premium. The stories of citizens choosing between basic nutrition and medical coverage illustrate the grim reality of a system that prioritizes algorithmic consistency over human compassion and public health outcomes.

The lack of a robust appeals process further exacerbates these hardships, as those who attempt to challenge their assigned premiums often find themselves trapped in an administrative loop. When a citizen identifies an error in their assessment, the pathway to correction is frequently opaque, involving multiple digital layers that offer little in the way of human intervention. Unexplained denials and technical glitches are common, leaving many with no choice but to opt out of the system entirely. This mass exclusion has a cascading effect on the national health landscape, as preventable illnesses go untreated and maternal mortality rates face the risk of rising among those who can no longer afford hospital births. The transition to an automated system was marketed as a way to streamline services, but for those on the ground, it has become a barrier that obscures the fundamental goal of providing care to every citizen.

Ethical Considerations and the Future of Digital Welfare

The deployment of AI in Kenya’s healthcare sector is part of a broader global trend where governments utilize automation to manage public resources and maximize administrative efficiency. However, the Kenyan experience serves as a cautionary tale about the dangers of prioritizing technology over ethical oversight and human-centric design. While AI can certainly process vast amounts of data more quickly than human bureaucrats, it lacks the ability to understand context or exercise empathy in cases of extreme hardship. The reliance on automated systems without sufficient safeguards or transparent auditing mechanisms creates a high risk of institutionalizing inequality. As other nations look toward similar digital transformations, the Kenyan case highlights the necessity of embedding social justice into the code itself, ensuring that technology serves as a bridge rather than a wall between the government and its people.

Moving forward, the focus must shift from merely implementing technology to ensuring its accountability and responsiveness to the public it serves. This requires a fundamental redesign of the feedback loops within the Social Health Authority, allowing for rapid human intervention when the algorithm produces clearly erroneous results. Furthermore, the criteria used to assess wealth must be made public and subjected to regular external audits to prevent the perpetuation of regressive biases. If the goal is truly universal healthcare, then the digital infrastructure must be flexible enough to accommodate the economic realities of all citizens. The current trajectory suggests that without significant reform, the promise of affordable care will remain an elusive dream for many, buried under the weight of an unyielding and flawed automated system that prioritizes data points over human lives.

Path Toward Inclusive Digital Transformation

The initial rollout of the automated premium system demonstrated that technological efficiency cannot replace the necessity of a social safety net grounded in economic reality. For the Social Health Authority to achieve its intended goals, the government must move toward a hybrid model that combines the speed of AI with the nuance of human oversight. This involves establishing local committees that have the authority to override algorithmic decisions based on verified physical evidence of hardship. Additionally, the underlying data sets used for training the predictive models should be updated to reflect the most current economic conditions, ensuring that the proxies for wealth are accurate and fair. By integrating these corrective measures, the administration could restore public trust and ensure that the digital transition does not come at the expense of the nation’s health.

The long-term success of Kenya’s healthcare reform depends on a commitment to transparency and the active participation of the citizens most affected by these changes. Policy leaders would need to recognize that an algorithm is only as good as the values it is programmed to uphold, and to place renewed emphasis on ethical AI development within the public sector. Continuous monitoring of the system’s impact on different socioeconomic groups would allow biases to be identified and corrected in real time, preventing the systemic exclusion of the poor. By prioritizing equity over simple administrative convenience, the healthcare system could begin to fulfill its promise of universal coverage. Such a shift would ensure that technology functions as a tool for empowerment rather than a mechanism for financial gatekeeping, ultimately strengthening the social contract between the state and its people.