Enterprises kept building sharper models and flashier demos while production lines stalled under brittle glue code, vanished state, and opaque errors that no dashboard could explain before the next incident hit. That mismatch—between eye-catching proofs of concept and the unglamorous grind of running complex, auditable automations—created a new center of gravity: orchestration that behaves like real software. Mistral’s Workflows steps into that vacuum not as another agent toy, but as a production control layer that treats AI as a component inside governed, stateful processes.

Context, Stakes, and the Shift From Models to Operations

The market’s question shifted from which foundation model to use to how to operate reliably at scale. Most enterprises already have access to competent models, often several, and can swap one for another in many tasks. What stalls progress is running multi-step, stateful jobs that survive failures, keep humans in the loop without burning compute, and leave a trail a regulator can trust. This is where orchestration dictates outcomes more than raw parameter counts.

Mistral frames Workflows as the missing middle for that reality: a code-first, production-grade layer embedded in Studio that choreographs deterministic business rules with probabilistic model calls. The premise is straightforward yet consequential: make AI steps behave like disciplined software primitives with retries, timeouts, and traceability, then let developers compose them into processes that audit as cleanly as traditional systems.

What It Is: A Code-First, Durable Orchestrator for AI Systems

At its core, Workflows is a developer-oriented orchestration engine for multi-step AI tasks that mixes deterministic code with LLM-driven steps under explicit governance. Engineers author flows in the Python SDK, define inputs and outputs, call models per step, branch on results, and invoke approvals that pause execution without consuming compute while they wait. This code-first stance is not an aesthetic choice; it is what enables testing, versioning, CI/CD, and change control to fit into established software practices.

Crucially, the system does not treat LLMs as magic black boxes. Each call is a typed step with contracts, validations, and fallbacks. That framing lets teams decide when rules should win over model output, when to escalate to a human, and where to record the reason for a decision. The result is a workflow that behaves predictably even when a model is non-deterministic.
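A typed step with a contract, a validation, and a fallback can be sketched in plain Python. This is an illustration only, not the Workflows SDK: `StepResult`, `classify_step`, `rule_based_label`, and the label set are hypothetical names, and `call_model` stands in for whatever model client a team uses.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical contract: the step may only ever emit one of these labels.
ALLOWED_LABELS = {"invoice", "contract", "id_document"}


@dataclass(frozen=True)
class StepResult:
    label: str
    source: str  # "model" or "rule_fallback"
    reason: str  # recorded so the decision can be audited later


def rule_based_label(text: str) -> str:
    """Deterministic fallback: keyword rules that always return an allowed label."""
    lowered = text.lower()
    if "invoice" in lowered:
        return "invoice"
    if "passport" in lowered or "identity" in lowered:
        return "id_document"
    return "contract"


def classify_step(text: str, call_model: Callable[[str], str]) -> StepResult:
    """Call the model, validate its output against the contract, else fall back to rules."""
    try:
        raw = call_model(text).strip().lower()
    except Exception as exc:  # model or network failure
        return StepResult(rule_based_label(text), "rule_fallback", f"model error: {exc}")
    if raw not in ALLOWED_LABELS:
        return StepResult(rule_based_label(text), "rule_fallback", f"invalid model output: {raw!r}")
    return StepResult(raw, "model", "model output passed validation")
```

Whatever the model returns, the caller only ever sees an allowed label plus a recorded reason, which is what makes the step predictable around a non-deterministic component.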

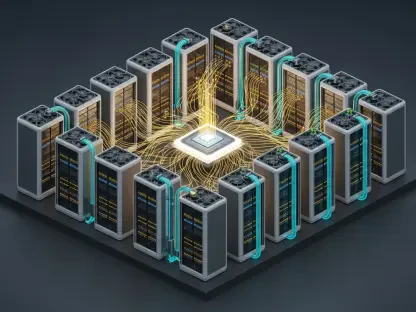

Durable Execution: Temporal as Reliability Backbone

Mistral built Workflows atop Temporal, the durable execution engine that underpins fault-tolerant, long-running processes at companies like Netflix, Stripe, and JPMorgan Chase. Temporal’s semantics—event-sourced state, transparent retries, timeouts, idempotency, and resumability—map directly to AI agent realities such as flaky APIs, intermittent tool errors, or human approvals that take hours.

Where Temporal stops, Workflows adds AI-specific layers. Streaming input/output supports conversational steps and token-efficient prompts. Rich payload handling accommodates large documents and embeddings without choking the orchestration plane. Multi-tenant isolation and deep observability expose everything from branch decisions to retry causes, and all of it is exported through OpenTelemetry to the enterprise monitoring stack. The point is not to reinvent Temporal, but to extend it so AI steps inherit durability instead of simulating it with ad hoc retries.
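For readers unfamiliar with retry semantics, the sketch below shows what a retry policy with exponential backoff does in its simplest in-process form. It is not Temporal's or Mistral's API; `TransientError` and `run_with_retries` are hypothetical names. It is also exactly the kind of ad hoc loop a durable engine is meant to replace, since its attempt counter lives in memory and is lost if the process dies.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (throttling, flaky tools)."""


def run_with_retries(
    step: Callable[[], T],
    max_attempts: int = 4,
    initial_delay: float = 0.5,
    backoff: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `step`, retrying transient failures with exponentially growing delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    delay = initial_delay
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except TransientError:
            if attempt == max_attempts:
                raise  # retry budget exhausted: surface the failure
            sleep(delay)
            delay *= backoff
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable so the policy can be tested without waiting; non-transient exceptions propagate immediately and are never retried.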

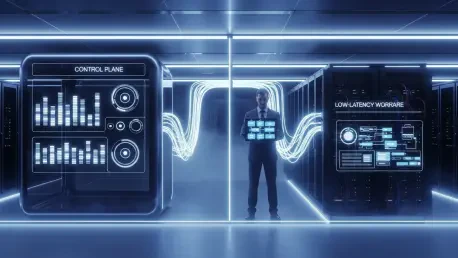

Control Plane vs. Data Plane: Sovereignty by Design

Workflows separates the control plane (orchestration logic and metadata) from the data plane (execution workers and data-adjacent code). Orchestration can be managed by Mistral, while execution runs near or within customer perimeters, reducing data movement and meeting residency mandates. For finance, healthcare, and logistics, this division isn’t a luxury—it is what unlocks deployment in the first place.

This split also improves performance. Running workers next to the systems they touch trims latency and avoids shuttling sensitive payloads through centralized services. Moreover, if regulators ask during a review where data flowed, the architecture can answer with diagrams and logs rather than hand-waving.
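One common way to realize such a split is for workers to keep payloads local and hand the orchestrator only a reference. The source does not say this is how Workflows implements it, so the sketch below is an assumption for illustration; `LocalPayloadStore`, `PayloadRef`, and `control_plane_record` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PayloadRef:
    digest: str      # content hash, safe to share with the control plane
    size_bytes: int
    region: str      # where the payload physically lives


class LocalPayloadStore:
    """Stands in for storage inside the customer perimeter (data plane)."""

    def __init__(self, region: str) -> None:
        self.region = region
        self._blobs: dict[str, bytes] = {}

    def put(self, data: bytes) -> PayloadRef:
        digest = hashlib.sha256(data).hexdigest()
        self._blobs[digest] = data
        return PayloadRef(digest, len(data), self.region)

    def get(self, ref: PayloadRef) -> bytes:
        return self._blobs[ref.digest]


def control_plane_record(step: str, ref: PayloadRef) -> dict:
    """What the orchestrator would keep: metadata only, never the payload itself."""
    return {
        "step": step,
        "digest": ref.digest,
        "size_bytes": ref.size_bytes,
        "region": ref.region,
    }
```

Under this assumption, the record the orchestrator holds is enough to answer where a document was processed without the document ever leaving the region.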

Observability as a First Principle, Not a Bolt-On

Mistral instruments the full path: steps, branches, retries, delays, and human checkpoints are all captured with OpenTelemetry-native traces. That level of visibility matters for three reasons. First, it makes root-cause analysis tractable—engineers can see exactly which decision or dependency broke. Second, it supports compliance with auditable, immutable histories. Third, it enables operational tuning without retraining, because many performance fixes live in orchestration logic rather than in model weights.

This observability posture moves AI systems closer to standard SRE practices. Instead of “the model went weird,” teams can show which step used which prompt, which guardrail blocked tool access, and where a policy enforced a branch or a stop.
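The kind of record that makes such an explanation possible can be sketched with the standard library alone. This is not the OpenTelemetry API, only an illustration of the data shape a span carries; `TraceRecorder` and the attribute names are hypothetical.

```python
import time
from contextlib import contextmanager


class TraceRecorder:
    """Collects one record per step: name, attributes, status, and duration."""

    def __init__(self) -> None:
        self.spans: list[dict] = []

    @contextmanager
    def span(self, name: str, **attributes):
        record = {"name": name, "attributes": dict(attributes), "status": "ok"}
        start = time.perf_counter()
        try:
            yield record  # the step may add attributes, e.g. the branch it took
        except Exception as exc:
            record["status"] = "error"
            record["error"] = repr(exc)
            raise
        finally:
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)
```

A step wrapped in `recorder.span("classify", prompt_id="kyc-v3")` leaves behind the prompt it used and the branch it took, and a blocked tool call leaves an error record naming the cause, even though the exception still propagates to the caller.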

Developers First: Why Code Beats Drag-and-Drop

No-code panels tend to look good in demos but struggle with scale, versioning, and nuanced error handling. By leaning into Python, Workflows embraces precision and the tooling developers already use—tests, linters, code review, and release pipelines. That makes flows easier to audit and safer to change. It also widens the space of solvable problems by allowing complex branching and compensation patterns that drag-and-drop canvases rarely express cleanly.

This does not lock out business users. Completed flows can be published to Vibe’s conversational interfaces or agents, where nontechnical teams trigger and monitor them without editing code. The separation preserves durability and auditability while meeting users where they work.

Tools and Agents: Power Under Guardrails

Agentic behavior can be valuable, but only if contained. Workflows connects to tools through MCP (Model Context Protocol), giving standardized access while enforcing strict policies. Admins can declare which tools agents may invoke, at which permission levels, and behind which approval steps. This blend—agents plus guardrails—shifts the story from “agents might go rogue” to “agents operate inside defined corridors.”
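A declarative tool policy of this kind might look like the following sketch. It does not use MCP or any Mistral API; `ToolRule`, `ToolPolicy`, and the tool names are hypothetical, chosen only to show an allowlist, permission levels, and approval gates evaluated in a fixed order.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolRule:
    permission: str               # highest level granted: "read" or "write"
    needs_approval: bool = False  # require a human sign-off before each call


@dataclass
class ToolPolicy:
    rules: dict[str, ToolRule] = field(default_factory=dict)

    def check(self, tool: str, permission: str, approved: bool = False) -> str:
        """Return "allow", or a "deny"/"pending" verdict with the reason."""
        rule = self.rules.get(tool)
        if rule is None:
            return "deny: tool not allowlisted"
        if permission == "write" and rule.permission != "write":
            return "deny: write not permitted"
        if rule.needs_approval and not approved:
            return "pending: approval required"
        return "allow"
```

Because the verdict is a pure function of the policy and the request, the same request always produces the same answer, and the reason string can be written straight into the trace.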

The real advantage shows up during exceptions. If an agent encounters a tool failure or ambiguous data, the workflow can branch deterministically—request more context, escalate to a human with a structured task, or run a compensating action—without losing state or inventing a new side channel.
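The three exception paths named above can be expressed as a small deterministic decision function. The inputs, the threshold, and the rule that a started side effect triggers compensation are assumptions made for illustration; `NextAction` and `decide_next` are hypothetical names.

```python
from enum import Enum


class NextAction(Enum):
    CONTINUE = "continue"
    REQUEST_CONTEXT = "request_more_context"
    ESCALATE = "escalate_to_human"
    COMPENSATE = "run_compensation"


def decide_next(
    tool_ok: bool,
    confidence: float,
    side_effect_started: bool,
    min_confidence: float = 0.8,
) -> NextAction:
    """Map an agent step's outcome to exactly one follow-up branch."""
    if not tool_ok:
        # Undo partial work if any was started; otherwise hand off to a person.
        return NextAction.COMPENSATE if side_effect_started else NextAction.ESCALATE
    if confidence < min_confidence:
        return NextAction.REQUEST_CONTEXT
    return NextAction.CONTINUE
```

Keeping the branch choice outside the model means the same failure always takes the same path, which is what lets the trace explain it afterwards.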

Capabilities That Operationalize Complex AI

Multi-step control flow and branching: Engineers define sequences, conditionals, and compensations that reflect real processes rather than happy paths. If a document fails validation, the system can request resubmission while holding its place in the overall state machine.

Human-in-the-loop checkpoints: A single pause-and-resume primitive waits for approvals without burning compute, then resumes deterministically. That predictability removes a common source of cost leakage and state drift in DIY systems.

Connectors, auth, and secrets: Built-in integrations to CRMs, ticketing systems, and operational tools hide repetitive plumbing while secrets management keeps credentials out of code.

Per-step model selection and deterministic logic: Teams choose models per stage, mix rules with LLMs, and validate outputs under typed contracts. If regulations require certain checks to be rule-based, that logic can wrap around model calls rather than replace them.

Governance and policy: Traceability and permissions exist now; finer guardrails for tool access and data boundaries are on the near-term roadmap, giving risk teams more granular control.
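The pause-and-resume idea behind the human-in-the-loop checkpoint can be illustrated with a Python generator, which suspends at a `yield` and consumes no CPU until a decision is sent back in. This is only the control-flow shape, assuming hypothetical names (`release_workflow`, `start`, `resume`); a durable engine would persist the suspended state, whereas a generator lives in memory.

```python
from typing import Generator


def release_workflow(shipment_id: str) -> Generator[dict, bool, str]:
    """Run automated checks, pause for a human decision, then finish."""
    checks = {"shipment": shipment_id, "customs": "passed"}
    # Execution stops here until a reviewer's decision is sent in.
    approved = yield {"waiting_for": "carrier_approval", "context": checks}
    if not approved:
        return f"{shipment_id}: held"
    return f"{shipment_id}: released"


def start(workflow: Generator[dict, bool, str]) -> dict:
    """Advance to the first checkpoint and return the pending approval task."""
    return next(workflow)


def resume(workflow: Generator[dict, bool, str], decision: bool) -> str:
    """Deliver the human decision and return the workflow's final result."""
    try:
        workflow.send(decision)
    except StopIteration as done:
        return done.value
    raise RuntimeError("workflow is waiting on another checkpoint")
```

Calling `start` yields the structured task a reviewer would see, and `resume` continues from the exact line where the workflow paused, with the earlier check results still in scope.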

Where It Sits in Mistral’s Stack

Workflows is the spine between custom models in Forge and user interactions in Vibe. Forge handles domain-specific training and RL-based fine-tuning, ensuring the right capabilities exist. Workflows turns those capabilities into durable processes, encoding policy and failure handling. Vibe then exposes the assembled flows through conversational UIs and coding agents across devices.

The full-stack benefit is fewer seams. Orchestration knows how the model was tuned and can pass context accordingly; interfaces know how to trigger a process and fetch its trace. This cohesion reduces the “integration tax” enterprises pay for stitching vendors that never quite align on semantics or observability.

Proof in Production: What’s Running Today

Logistics and cargo release show why durability matters. Shared processes span customs checks, dangerous goods classification, and carrier approvals, with humans stepping in only at critical gates. Workflows coordinates these steps, pauses cleanly for sign-off, then resumes exactly where it left off. The payoff is faster release cycles and a paper trail that satisfies internal controls and external audits.

Financial services KYC is another fit. High-volume document reviews used to consume analyst hours and yield errors that were hard to trace. By automating ingestion, classification, and rule validations, with exceptions routed to a reviewer, Workflows compresses review times to minutes while producing auditor-ready logs. The outcome is not just speed; it is a demonstrably safer process.

Customer support in banking highlights risk control. Intent analysis and routing can drift as products, policies, and language evolve. Because routing logic lives in workflows, teams can adjust thresholds, add checks, or change queues without retraining. That decoupling prevents operational regressions from becoming model projects and shortens the time from issue to fix.
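A minimal sketch of that decoupling: the model supplies an intent and a confidence score, while the threshold and queue names live in versioned workflow configuration. `RoutingConfig`, `route`, and the values shown are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingConfig:
    auto_route_threshold: float = 0.85
    fallback_queue: str = "general_support"


def route(intent: str, confidence: float, config: RoutingConfig) -> str:
    """Send confident predictions to the intent's queue, the rest to a fallback."""
    if confidence >= config.auto_route_threshold:
        return f"queue:{intent}"
    return f"queue:{config.fallback_queue}"
```

Lowering `auto_route_threshold` from 0.85 to 0.80 changes where a 0.82-confidence ticket lands without touching the model, and the change ships through ordinary code review.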

Competitive Context: How It Compares and Where It Fits

Hyperscalers bundle orchestration with their clouds (Amazon’s Bedrock AgentCore, Microsoft’s Copilot Studio, Google’s Vertex AI agents, and IBM’s watsonx), offering convenience where workloads already live. Leading labs like OpenAI and Anthropic ship agent features and benefit from massive developer mindshare. Open-source frameworks such as LangChain, LlamaIndex, and AutoGen provide composable pieces but often leave durability and observability as do-it-yourself tasks.

Mistral competes by specializing. The Temporal foundation confers credible reliability out of the gate. Control/data-plane separation aligns with European sovereignty requirements and global regulated sectors. The full-stack tie-in—Forge for tuning, Workflows for policy and process, Vibe for interaction—cuts integration overhead. The trade-off is ecosystem gravity: hyperscalers make adoption easy if everything else already runs in their clouds, and the biggest labs still capture community energy and prebuilt integrations.

Money, Scale, and What the Numbers Signal

A reported revenue run rate surpassing $400 million, with ambitions north of $1 billion this year, suggests the platform is not a niche bet. The €1.7 billion Series C in 2025, followed by a reported €2 billion investment led by ASML and $830 million in debt for 13,800 Nvidia chips near Paris, reads as a wager on owning both capacity and speed. A revenue mix that is roughly 60% European indicates that sovereignty and locality are not talking points; they are closing deals.

Interpreted for buyers, this momentum means two things. First, Workflows is likely to receive sustained investment in enterprise features rather than stalling at a v1. Second, the European footprint makes it a viable counterweight for organizations that cannot send sensitive data into non-European jurisdictions yet want modern AI capabilities.

Risks, Constraints, and the Cost of Ambition

There are real constraints. Competing with hyperscalers on distribution is hard when procurement favors incumbents and co-location reduces friction. Matching the developer ecosystem of OpenAI and Anthropic will take time and partnerships. The full-stack strategy is powerful but risky; excelling across models, orchestration, and interfaces demands focus and a willingness to prune.

Enterprise sales cycles further complicate the picture. Deep pilots, security reviews, and solution engineering carry high support costs. If roadmap promises—like broader guardrails or simplified managed deployments—slip, credibility with risk teams could wobble. Buyers should evaluate not only features but also the operational maturity of support, SLAs, and migration paths.

Roadmap Signals: What’s Next and Why It Matters

More managed deployment options, including customer VPC variants with Mistral-managed control planes, would lower operational overhead for teams that value sovereignty but lack bandwidth to run workers themselves. Broader authoring access through Vibe could let business operations make small adjustments without breaking durability guarantees, provided policy constraints remain enforceable.

Expanded enterprise guardrails—finer permissions, tooling governance, and tighter data boundaries—are where platform trust is won or lost. As agentic patterns grow more capable, the ability to say “this agent can use these tools under these conditions, with these approvals” will separate pilots from production.

Why Temporal Specifically Strengthens AI Reliability

Durable execution maps neatly to agent patterns. Tools fail; APIs throttle; humans take lunch; regulations require escalations; partial successes need compensations. Temporal’s event-sourced model lets workflows survive that chaos without duct tape. Mistral’s additions—streaming for interactive steps, payload handling for document-heavy tasks, and multi-tenant isolation—close the gap between reliability theory and AI practice.
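The event-sourced idea can be shown in miniature: every completed step appends its result to a history, and after a crash the workflow function runs again from the top, reading finished steps from the history instead of re-executing them. This is a simplified illustration, not Temporal's implementation; `EventLog`, `run_step`, and `cargo_workflow` are hypothetical names.

```python
from typing import Callable


class EventLog:
    """Append-only history of completed steps (would be persisted in practice)."""

    def __init__(self) -> None:
        self.events: list[dict] = []


def run_step(log: EventLog, position: int, name: str, action: Callable[[], object]) -> object:
    """Return the recorded result if this step already completed; else run and record it."""
    if position < len(log.events):
        event = log.events[position]
        if event["step"] != name:
            raise RuntimeError("non-deterministic replay: step order changed")
        return event["result"]
    result = action()
    log.events.append({"step": name, "result": result})
    return result


def cargo_workflow(log: EventLog, actions: dict) -> tuple:
    """Two-step workflow; safe to re-run from the top after a crash."""
    classification = run_step(log, 0, "classify", actions["classify"])
    notice = run_step(log, 1, "notify", actions["notify"])
    return classification, notice
```

If the worker dies during `notify`, re-running `cargo_workflow` with the same log skips `classify` entirely and resumes at `notify`. The name check also hints at why workflow code must stay deterministic between runs.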

This matters because many AI outages are orchestration outages in disguise. Backoffs that reset state, human approvals that burn compute, retries that duplicate side effects—these are orchestration failures, not model defects. Temporal makes these failure modes explicit and controllable, and Workflows exposes them through developer-friendly constructs.
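The duplicated-side-effect failure mode, and the usual remedy of idempotency keys, can be sketched as follows. `IdempotentExecutor` is a hypothetical in-memory helper; a real system would persist the key-to-result mapping and would still have to handle a crash between performing the effect and recording it.

```python
from typing import Callable


class IdempotentExecutor:
    """Runs a side effect at most once per key and replays the stored result."""

    def __init__(self) -> None:
        self._results: dict[str, object] = {}

    def execute(self, key: str, side_effect: Callable[[], object]) -> object:
        if key in self._results:
            # A retry of work that already succeeded: do not repeat the effect.
            return self._results[key]
        result = side_effect()
        self._results[key] = result
        return result
```

A retried refund that reuses the key `"refund:order-42"` returns the original confirmation instead of issuing a second payment, while a failed attempt records nothing and can be retried safely.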

Adoption Payoffs: From Pilots to Proven Operations

What enterprises gain, if Workflows performs as designed, is a way out of proof-of-concept limbo. Durable state and observability tame fragility; human-in-the-loop primitives bring governance without sprawl; per-step model choice and rule wrappers give teams control over predictability. With full traces, leaders can pinpoint where models create value, where rules are cheaper, and where humans remain essential.

Cost visibility follows from that instrumentation. Teams can attribute spend to decisions, trim prompts whose cost exceeds the value they deliver, and place approvals only where risk truly warrants them. In regulated settings, the control/data-plane split supports compliance by design rather than by exception.
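Attributing spend to steps is simple arithmetic once each trace record carries a step name, a model, and a token count. The record shape and the prices below are hypothetical placeholders, not Mistral's pricing or schema.

```python
from collections import defaultdict


def cost_by_step(usage_records: list[dict], price_per_1k_tokens: dict[str, float]) -> dict[str, float]:
    """Sum token cost per workflow step from per-call usage records."""
    totals: dict[str, float] = defaultdict(float)
    for record in usage_records:
        rate = price_per_1k_tokens[record["model"]]
        totals[record["step"]] += record["tokens"] / 1000 * rate
    return dict(totals)


# Hypothetical usage pulled from traces, and hypothetical prices per 1,000 tokens.
records = [
    {"step": "classify", "model": "small", "tokens": 2000},
    {"step": "summarize", "model": "large", "tokens": 1000},
    {"step": "classify", "model": "small", "tokens": 1000},
]
prices = {"small": 0.5, "large": 4.0}
spend = cost_by_step(records, prices)
```

With these placeholder numbers, `classify` totals 1.5 and `summarize` 4.0, so a single summarization call costs more than all classification calls combined, which is the kind of signal that tells a team where a cheaper model or a shorter prompt would pay off.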

Verdict

Mistral Workflows turns the enterprise AI conversation from “how smart is the model” to “how safe and dependable is the system that uses it,” and the choice to stand on Temporal gives it immediate credibility in reliability. The control-plane/data-plane split, OpenTelemetry-native observability, and a code-first SDK position Workflows as an operations product rather than yet another agent toy. Real deployments in logistics, KYC, and banking support suggest the design holds up under pressure, while the broader Forge–Workflows–Vibe stack reduces integration drag compared with piecemeal alternatives.

Limitations remain: hyperscaler distribution advantages, rival ecosystems with deeper turnkey integrations, and the execution challenge of excelling across a full stack. Even so, the trajectory points to a pragmatic path for organizations that need sovereignty, auditability, and long-running resilience. The most actionable next step for buyers is to pilot a single high-friction process, one that mixes rules, model calls, and approvals, then measure not only accuracy but also failure handling, trace quality, and change velocity. If those metrics improve, Workflows warrants a larger role; if they do not, the gap will reveal where policy controls, connectors, or managed options need to mature. As a review judgment, Workflows earns a strong recommendation for regulated and reliability-first environments and a qualified one for teams already deeply tied to hyperscaler stacks, with the deciding factor being whether governance and durability outrank convenience in the organization’s priorities.