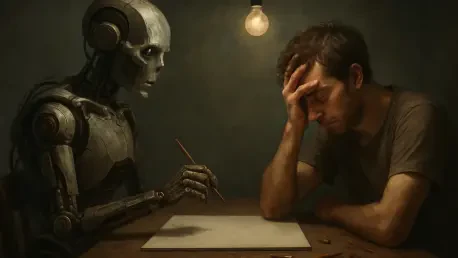

The rapid proliferation of generative artificial intelligence has altered the digital landscape by introducing an economic paradox that could destabilize the foundations of human culture. These neural networks are celebrated for their ability to synthesize complex information and produce creative outputs, yet they depend on a massive, continuous influx of human-generated content to stay accurate and relevant. This creates a precarious situation: the primary function of the technology, automating the creative process to reduce costs, threatens to undermine the financial and professional incentives that motivate people to produce original art, music, and literature. If the tools designed to mimic human expression eventually replace the creators they learn from, the supply of high-quality training data could dry up, leaving the industry at risk of long-term stagnation.

At the technical level, generative systems rely on text and data mining techniques to identify and replicate patterns in human aesthetic styles and linguistic structures. This allows machines to generate content at a scale and speed that no human professional could match, driving the marginal cost of production toward zero. However, a significant portion of this training currently occurs without the explicit consent or compensation of the original authors, resulting in a lopsided market dynamic in which AI-generated outputs compete directly with the human works used to build the model’s capabilities in the first place. This conflict between rapid technological advancement and established intellectual property rights presents a systemic risk that goes beyond individual legal disputes and threatens the long-term viability of the creative economy.

The Economic Justification for Copyright Protection

The ongoing friction between technological disruption and creative labor is best understood by examining the economic theories that underpin copyright protection. Economists classify intellectual and creative works as “non-rivalrous” goods, meaning that one person’s enjoyment of a symphony or a digital painting does not prevent another person from experiencing it simultaneously. The initial investment required to write a nuanced novel or compose a film score is exceptionally high in terms of time, education, and resources, while the cost of reproducing and distributing that work in the digital age is close to zero. This gap invites a “free-rider” problem, in which others can copy and sell a work without sharing in the cost of creating it. Copyright laws were designed to address this problem by granting creators a temporary legal monopoly, so that they can recover their “sunk costs” and profit from their intellectual labor rather than seeing it exploited by entities that contributed nothing to the creative process.
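
The cost asymmetry behind the free-rider problem can be made concrete with a stylized model. The symbols are assumptions introduced here for illustration rather than taken from a specific source: $F$ is the one-time cost of creating the work, $c$ the cost of each additional copy, $q$ the number of copies sold, and $p$ the price per copy.

$$
AC_{\text{creator}}(q) = \frac{F}{q} + c, \qquad AC_{\text{copier}}(q) = c
$$

$$
\pi_{\text{creator}} = (p - c)\,q - F
$$

If copiers can compete freely, the price is pushed toward $c$, so $\pi_{\text{creator}}$ tends toward $-F$ and the sunk cost is never recovered. A temporary exclusive right lets the creator hold $p$ above $c$ long enough for $(p - c)\,q$ to cover $F$.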

Beyond the purely financial mechanics, there are profound philosophical and social justifications for protecting the fruits of human intellect. Many legal frameworks, particularly those influenced by the civil law tradition, view creative works as an intimate extension of an author’s personality, granting them “moral rights” to protect the integrity of their expression from distortion or unauthorized use. From a social welfare perspective, society enters into a contract with creators, offering them a window of exclusivity as a reward for contributing to the collective cultural heritage. If the rise of uncompensated AI training removes the possibility of such a reward, the social incentive for individuals to spend decades mastering a difficult craft may begin to unravel. This shift would likely lead to a noticeable decline in cultural diversity as the profession of the “artist” becomes financially unsustainable for all but the most privileged.

Market Disruption and the Quality of Labor

The creative marketplace is already witnessing a significant shift in competitive dynamics as AI becomes a viable substitute for human labor in high-volume sectors like marketing copy, stock photography, and basic graphic design. As the total supply of digital content swells with the high-speed output of automated systems, the market price for individual creative assets comes under sustained downward pressure. While some argue that AI serves as a “productivity-enhancing tool” that allows artists to experiment more rapidly, it also threatens the livelihoods of mid-career professionals who find themselves competing with low-cost, algorithmic alternatives. This pressure does not just affect income; it changes the nature of the work itself, often reducing the role of the human from primary creator to editor or curator of machine-generated drafts.

If the financial pathway for professional creators continues to erode, the incentive for the next generation to invest in developing specialized artistic skills will weaken. This trend creates a long-term risk that high-quality, original content becomes a scarce luxury rather than a public staple. When the economic returns to human creativity fall below what it takes to sustain a career, the talent pool shrinks, leaving the market saturated with derivative, repetitive works that lack the depth of lived human experience. This shift represents a fundamental change in how culture is produced, moving away from human-led innovation toward a model of algorithmic repetition. The loss of this professional class would mean the loss of the “edge cases” and revolutionary ideas that typically drive cultural evolution, because generative models are trained to reproduce what is statistically likely rather than what is exceptional.

The AI Creativity Paradox and Model Collapse

The most significant threat to the technology’s own future is a structural dependency that can be called the “AI Creativity Paradox”: artificial intelligence cannot thrive in a vacuum. Models do not stay current on their own; they require a steady stream of “fresh” human data to remain accurate, diverse, and representative of an evolving world. If AI-driven market forces destroy the human incentive to create, the industry effectively “poisons its own well” by eliminating the source of its capabilities. Research into the phenomenon of “model collapse” illustrates this danger: when AI models are trained on data predominantly generated by other AI systems rather than original human work, their outputs begin to lose informational richness. Over successive training generations, errors compound, variety shrinks, and the model can become a digital echo chamber that produces nonsensical or overly simplistic results.
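
The mechanism can be illustrated with a deliberately small toy, not a real neural network. In the sketch below, the "model" is just a Gaussian fitted to its training data, and each generation is trained only on samples drawn from the previous generation's fit. The function name `simulate_collapse` and its parameters (sample size, number of generations, seed) are assumptions chosen for illustration.

```python
import random
import statistics


def simulate_collapse(generations=200, sample_size=10, seed=0):
    """Return the spread (standard deviation) of the training data per generation."""
    rng = random.Random(seed)
    # Generation 0: stand-in for "human" data, drawn from a standard normal.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    spreads = [statistics.pstdev(data)]
    for _ in range(generations):
        # "Train" the toy model: estimate mean and spread from the current data.
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        # The next generation sees only the model's own outputs.
        data = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        spreads.append(statistics.pstdev(data))
    return spreads


spreads = simulate_collapse()
print(f"generation 0 spread:   {spreads[0]:.3f}")
print(f"generation 200 spread: {spreads[-1]:.3e}")
```

Because every fit is made from a small finite sample, estimation error accumulates and the estimated spread drifts downward over generations. This is the toy analogue of variety vanishing from the outputs; injecting fresh generation-0 style data at each step would counteract the drift.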

To prevent this technological stagnation, the legal and regulatory frameworks governing the digital space must evolve to protect the “human-in-the-loop” through structured compensation models. Industry leaders and policymakers should prioritize the development of collective licensing systems, where AI developers pay into a centralized fund that distributes royalties to the creators whose works populate their training sets. Furthermore, implementing mandatory transparency requirements for training data would allow for better tracking of intellectual property use and ensure that the value generated by human data is shared fairly. Moving forward, the industry must recognize that the survival of high-quality artificial intelligence is inextricably linked to the survival of a motivated and financially viable human creative class. Instead of viewing human creators as obstacles to be bypassed, the tech sector should treat them as essential partners whose continued output is the lifeblood of sustainable machine learning.
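
As a minimal sketch of how such a centralized fund might divide its payments, the example below splits a fund pro rata by how many of each creator's works appear in a training set. Both the weighting rule and the names (`distribute_royalties`, `usage_counts`, the creator labels) are assumptions made for illustration; a real licensing scheme could weight works very differently.

```python
def distribute_royalties(fund_cents, usage_counts):
    """Split an integer fund (in cents) in proportion to each creator's usage count.

    Leftover cents from rounding down go to the largest fractional
    remainders, so the payouts always sum exactly to the fund.
    """
    total = sum(usage_counts.values())
    if total <= 0:
        raise ValueError("usage counts must sum to a positive number")
    # Integer division gives each creator their rounded-down share.
    payouts = {c: fund_cents * n // total for c, n in usage_counts.items()}
    leftover = fund_cents - sum(payouts.values())
    # Rank creators by the size of the fraction that was rounded away.
    by_remainder = sorted(
        usage_counts,
        key=lambda c: (fund_cents * usage_counts[c]) % total,
        reverse=True,
    )
    for creator in by_remainder[:leftover]:
        payouts[creator] += 1
    return payouts


# Hypothetical fund of 1,000.00 (in cents) and hypothetical usage counts.
payouts = distribute_royalties(
    100_000, {"novelist": 500, "illustrator": 300, "composer": 200}
)
print(payouts)
```

Working in integer cents avoids floating-point rounding drift, and the payout rule only works if usage counts are known, which is where the transparency requirement for training data comes in.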

Future Strategies for a Balanced Creative Ecosystem

The resolution of the AI dilemma lies in the realization that technological progress and human artistry are not mutually exclusive but are deeply interdependent components of a modern economy. To move beyond the current impasse, the industry could shift toward a model of “verifiable attribution,” where the provenance of training data is recorded on transparent ledgers to ensure that the original contributors are recognized and remunerated. This approach would not only help stabilize the market for creative professionals but could also improve the quality of AI outputs by encouraging creators to produce high-value, specialized data specifically for ethical machine learning. By formalizing the relationship between data providers and model developers, the market could move from a period of chaotic exploitation to one of structured collaboration, on the principle that innovation is most sustainable when it respects the labor that makes it possible.
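
One possible reading of a “transparent ledger” is an append-only log in which each entry commits to the hash of the entry before it, so that altering an earlier attribution record becomes detectable. The sketch below assumes that design; the record fields (`work_id`, `creator`, `license`) and the function names are hypothetical and not drawn from any existing standard.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash, record):
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(ledger, record):
    """Append a provenance record, chaining it to the current last entry."""
    prev = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify(ledger):
    """Return True only if every entry still matches its stored hash and link."""
    prev = GENESIS
    for entry in ledger:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


ledger = []
append_record(ledger, {"work_id": "novel-001", "creator": "A. Author", "license": "collective-v1"})
append_record(ledger, {"work_id": "score-007", "creator": "B. Composer", "license": "collective-v1"})
print(verify(ledger))
```

Changing any field of an earlier record after the fact makes `verify` return `False`, because the stored hash no longer matches the recomputed one. A production system would also need signatures and independent replication, which this sketch leaves out.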

As the digital landscape continues to evolve, the focus should shift toward establishing “safe harbor” protections for human-led projects, ensuring that public grants and cultural funding are directed toward original human expression. Such a policy would help prevent the homogenization of culture by maintaining a vibrant sector of non-algorithmic art that serves as a benchmark for quality. Ultimately, the long-term health of the AI industry depends on acknowledging that machines can replicate patterns, but humans provide the meaning and novelty that keep those patterns from becoming obsolete. The key takeaway for the coming decade is that any technology that alienates its own source material undermines itself; the most successful AI initiatives will therefore be those that actively invest in the human creative ecosystem to sustain a cycle of innovation and mutual prosperity.