Boardrooms juggling cloud commitments, AI roadmaps, and compliance checklists just saw the ground shift as Microsoft and OpenAI replaced a once-exclusive alliance with a time-bounded, non-exclusive pact that lets OpenAI run natively on rival clouds while Microsoft keeps licensed access through 2032. This reset did more than tidy up a contract. It unlocked cross-cloud distribution of OpenAI’s models, cleared legal tension created by OpenAI’s up-to-$50 billion arrangement with Amazon, and retired a controversial AGI trigger that once tied privileges to an undefined milestone. The net effect is a market where model access behaves more like a utility, distribution becomes a primary differentiator, and enterprises gain leverage to place frontier AI alongside existing security, data residency, and observability controls. What started as a bilateral realignment has already radiated through the cloud stack, model marketplaces, and agent platforms, turning multi-cloud from aspiration into operating principle.

What Changed: From Exclusivity to Optionality

The core change was simple on paper and sweeping in practice: Azure ceased to be the exclusive home for OpenAI’s products and APIs. OpenAI can now deploy and sell its portfolio—including core GPT-class models, embedding endpoints, fine-tuning services, and enterprise offerings—on any major cloud. That means native listings on AWS Bedrock’s model catalog and, by all indications, an on-ramp to Google Cloud’s Vertex AI. The revision ended two-way revenue flows, granted Microsoft a non-exclusive license to OpenAI’s IP that runs through 2032, and clarified each side’s right to market, bundle, and price independently. This moved the relationship from privileged access to predictable interoperability, a shift that matched how large buyers structure risk and redundancy across zones, regions, and providers.

For customers, optionality landed as concrete capabilities, not just rhetoric. OpenAI’s presence on multiple clouds reduces procurement deadlocks tied to single-vendor policies, avoids duplicating identity and governance stacks, and cuts the friction that arose when teams had to hairpin workloads between native services and Azure-only endpoints. For OpenAI, the change removed a ceiling on distribution. Deals that once stalled—because the buyer standardized on AWS PrivateLink, relied on Google’s data governance controls, or needed alignments with Bedrock’s guardrails—now had clean paths to production. Microsoft, meanwhile, traded exclusivity for durable product access and greater clarity on margins, then lined up its own model families and third-party allies to keep control of its AI roadmap even as competitors host OpenAI’s systems.

Why Now: The Amazon Catalyst

Timing hinged on OpenAI’s blockbuster arrangement with Amazon, which braided capital, compute, and distribution. The headline number—up to $50 billion, with $15 billion upfront and additional tranches contingent on milestones—came with obligations that collided squarely with Microsoft’s prior rights. Among them: expanding OpenAI’s AWS usage at massive scale over eight years, designating AWS as the exclusive third-party distribution provider for Frontier, and co-developing stateful runtime capabilities on Bedrock. Microsoft publicly reiterated Azure’s exclusivity for “stateless OpenAI APIs” the same day, creating a contradiction that legal teams could not paper over. Reports that Microsoft considered litigation underscored the urgency to renegotiate or face a protracted stalemate.

The restructured pact resolved that deadlock. By affirming OpenAI’s right to sell and deploy on any cloud and converting Microsoft’s IP access to a non-exclusive license running to 2032, the companies retroactively reconciled the Amazon commitments. Amazon’s leadership quickly signaled operational follow-through, pointing to OpenAI models landing on Bedrock and a stateful runtime suitable for enterprise-grade agent workflows. From a market perspective, this also validated AWS’s “many models, one control plane” stance, complementing Anthropic’s presence on Bedrock and reinforcing the idea that model quality plus distribution flexibility beats either in isolation. The immediate catalyst, then, was less about animus than about eliminating conflicting promises before they metastasized into customer confusion and courtroom theater.

Money Flows and Mechanics

The financial plumbing changed as meaningfully as the technical topology. Microsoft no longer pays OpenAI when Azure customers invoke OpenAI models through Azure OpenAI Service. That outbound share had quietly taxed Microsoft’s margins on fast-growing Copilot and app workloads. In return, OpenAI continues to pay Microsoft a 20 percent revenue share through 2030, now with an undisclosed cap. The cap matters: if OpenAI’s revenue keeps compounding, the ceiling could arrive well before 2030, after which payments would cease. Microsoft also retains roughly a 27 percent stake in OpenAI’s for‑profit arm, anchoring continued exposure to OpenAI’s upside independent of direct revenue splits.
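To make the cap mechanics concrete, the following is a minimal sketch of how a capped revenue share could play out. The 20 percent rate and the 2030 end date come from the reported terms; the cap is undisclosed, so the cap value and the revenue projections below are purely hypothetical placeholders, as is the function name `capped_share_schedule`.

```python
from typing import Optional


def capped_share_schedule(
    revenues: dict[int, float],
    share_rate: float,
    cap: float,
) -> tuple[dict[int, float], Optional[int]]:
    """Return per-year payments and the year the cap is reached (None if never)."""
    payments: dict[int, float] = {}
    paid_so_far = 0.0
    cap_year: Optional[int] = None
    for year in sorted(revenues):
        if cap_year is not None:
            payments[year] = 0.0  # cap already exhausted, no further payments
            continue
        uncapped = revenues[year] * share_rate
        remaining = cap - paid_so_far
        if uncapped >= remaining:
            payments[year] = remaining  # final, partial payment up to the cap
            cap_year = year
        else:
            payments[year] = uncapped
            paid_so_far += uncapped
    return payments, cap_year


# Hypothetical inputs in billions of dollars; these are NOT reported figures.
projected_revenue = {2026: 30.0, 2027: 50.0, 2028: 80.0, 2029: 120.0, 2030: 160.0}
payments, cap_year = capped_share_schedule(projected_revenue, share_rate=0.20, cap=40.0)
```

Under these made-up inputs the cap is exhausted in 2029, one year before the share would otherwise expire, which illustrates why the level of the ceiling matters as much as the headline rate.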

These mechanics create nuanced trade-offs. Microsoft yielded privileged commercial positioning but gained near-term margin clarity on Azure-hosted usage and preserved product continuity via the IP license. OpenAI accepted constrained outflows until the cap or 2030, but in exchange removed a structural sales tax: being forced to lead with Azure even when a buyer’s estate was anchored to AWS Direct Connect, Google Cloud’s Assured Workloads, or a regulated hybrid. The simplification also reduces accounting complexity across joint offerings, curbs incentives for gaming traffic paths, and sets the stage for cleaner price comparisons when customers evaluate equivalent SKUs, such as GPT‑4.x on Bedrock versus Azure OpenAI, under consistent SLAs and egress policies.

The AGI Clause Gets Retired

One of the most unusual elements of the original deal—a contractual trigger tied to OpenAI’s internal declaration of AGI—fell away. That mechanism once aimed to balance safety governance with commercialization by letting OpenAI’s nonprofit oversight throttle rights if systems crossed a certain capability threshold. In practice, the clause created perverse incentives and ambiguity: both sides benefited from keeping AGI “not yet attained,” while the industry’s rhetoric around AGI blurred scientific, marketing, and policy lines. Replacing this tripwire with calendar dates and explicit limits acknowledged that no shared, operational definition could bear legal weight.

The implications are wider than contract housekeeping. Moving from a philosophical milestone to fixed timelines signaled a broader industry shift toward governance that is auditable, enforceable, and less subject to definitional drift. Microsoft’s license through 2032 became detached from hype cycles and tied instead to predictable renewal horizons. OpenAI retained freedom to message research progress without triggering unintended commercial consequences. Regulators and enterprise risk committees, meanwhile, received a clearer basis for oversight: obligations that can be calendared, monitored, and tested rather than argued as semantics. In short, the AGI clause’s retirement converted an abstract safety proxy into concrete operational guardrails.

Microsoft’s Strategic Position

Microsoft ended up with fewer exclusive levers but a broader set of hedges. It remains OpenAI’s primary host for significant portions of training and inference, holds a sizable equity position, and keeps a non-exclusive IP license that secures continuity across Bing, Office, GitHub Copilot, Dynamics, and the Azure OpenAI Service. In parallel, Microsoft doubled down on in-house models like Phi for compact, efficient tasks and MAI for specialized agentic behaviors, while deepening ties with Anthropic to diversify supply and feature options. This portfolio approach reduces single-supplier risk and gives Microsoft bargaining power as model classes proliferate and customer segments fragment by latency, privacy, and cost profiles.

Commercially, the move clarified margins where it mattered most. By ending outbound revenue share, Microsoft improved the unit economics of AI-enriched workloads that ride on Azure and M365, from code completions in GitHub Copilot to retrieval-augmented generation in Dynamics. Strategically, the company leaned into reliability, security posture, and integration depth as differentiators when exclusivity no longer applied. Expect continued investment in Azure’s accelerator diversity—NVIDIA H‑series, AMD Instinct, and Azure Maia—and in features like confidential computing, regional isolation, and workload-aware autoscaling. The message is straightforward: if buyers can run OpenAI anywhere, Azure must win on performance envelopes, compliance primitives, and total cost predictability.

OpenAI’s Strategic Position

OpenAI gained what it sought most: distribution freedom and the credibility that comes from being cloud-neutral in buyer conversations. The ability to land directly on AWS Bedrock, plug into Google Cloud’s Vertex AI, and interoperate with native identity, logging, and policy frameworks stripped away a persistent sales blocker. Enterprises that standardized on AWS Organizations or rely on Google’s Access Transparency program can now adopt OpenAI models without re-plumbing core controls. This aligns with how global firms stage migrations, handle regulated data, and evaluate vendor concentration risk across critical systems.

The trade-offs were manageable relative to the upside. A capped 20 percent share to Microsoft through 2030 functions as a growth toll but not a strategic constraint. OpenAI also reduced dependency on a single provider across capital, compute, and go-to-market. Signals that it may lease or build additional dedicated capacity introduced another future lever: the possibility of negotiating from a position of multi-hyperscaler strength while exploring specialized infrastructure for stateful agent runtimes and fine-tuning at scale. Most importantly, neutrality reframed OpenAI’s brand in the enterprise. Rather than being perceived as “Azure-first,” it now reads as a model supplier capable of meeting customers in their existing operational fabric.

What Enterprises Gain: Choice and Portability

For large buyers, the benefits were immediate and practical. Native access to OpenAI models on AWS Bedrock lets teams route traffic through existing VPC endpoints, attach Guardrails for policy enforcement, and monitor behavior with CloudWatch—no cross-cloud hops or duplicated audit trails. On Google Cloud, Vertex AI’s Model Garden and Extension framework provide similar plumbing: model hosting under established IAM, Vertex AI Monitoring for drift and latency, and seamless integration with BigQuery and Looker. This matters when procurement requires alignment with least-privilege policies, data residency, or sector-specific rules like FedRAMP High or HIPAA Business Associate Agreements that are already in place with a preferred cloud.

The shift also shortened the road from proof-of-concept to production. Engineering teams no longer needed to invent workaround architectures—data egress to Azure, temporary federation of identities, bespoke logging adapters—just to pair OpenAI outputs with in-place services. Instead, they can leverage native observability stacks, existing budget tags, and chargeback models. That improves time-to-value for scenarios like customer support deflection, code migration assistants, or marketing content generation, where business stakeholders expect results in quarters, not years. Moreover, multi-region choices across providers let architects tune for latency-sensitive workflows, choose specific GPU or NPU profiles, and hedge reliability by failing over between clouds during brownouts or quota crunches.
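The cross-cloud failover idea can be sketched in a few lines. This is an illustrative pattern only: the provider callables are stand-ins, `ProviderError` and `complete_with_failover` are hypothetical names, and real code would wrap each cloud's own SDK client behind the same callable signature.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider call on quota exhaustion, brownout, or timeout."""


# A provider is a name plus a callable that takes a prompt and returns text.
Provider = tuple[str, Callable[[str], str]]


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # record the failure, try the next one
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in callables (assumptions); each would wrap a real cloud SDK in practice.
def primary_cloud(prompt: str) -> str:
    raise ProviderError("quota exceeded")


def secondary_cloud(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = complete_with_failover(
    "Summarize ticket 123",
    [("primary", primary_cloud), ("secondary", secondary_cloud)],
)
```

Keeping the provider interface this narrow is a design choice: the ordering of the list encodes placement policy, so changing the preferred cloud is a configuration change rather than a rewrite.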

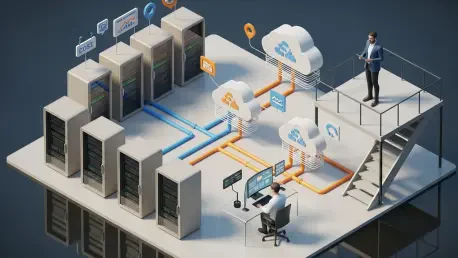

Market Realignment: Multi-Cloud Becomes Default

With OpenAI decoupled from single-cloud exclusivity, model access began to look like modern storage or CDN strategy: pick the best option per workload and enforce policy centrally. AWS strengthened Bedrock’s “many models, one control plane” position—Anthropic’s Claude, Cohere, Meta’s Llama variants, and now OpenAI—wrapped with shared safety filters, usage policies, and billing. Google Cloud’s Vertex AI can present a unified tooling surface where buyers weigh Gemini alongside third-party models and orchestrate retrieval over AlloyDB, Bigtable, or Dataplex-governed lakes. Microsoft sustains its own catalog by pairing OpenAI access with Anthropic, in-house models like Phi, and tight alignment to Microsoft 365 data boundaries and E5 compliance features.

Agent platforms and stateful runtimes shifted from roadmap items to competitive battlegrounds. OpenAI’s Frontier, now greenlit for AWS distribution, targets long-running, auditable agents that coordinate tools and data over hours or days. The co‑development of stateful runtime on Bedrock suggests features like durable memory slots, resumable toolchains, and fine-grained event logs that legal and compliance teams can actually sign off on. Microsoft, for its part, has pressed agentic patterns into Copilot Studio and Azure AI Studio with orchestration, grounding, and content filters bound to Purview. Google emphasizes Vertex AI Agents with Extensions and secure tool execution. Across providers, the contest increasingly turns on observability, governance, and integration depth rather than only leaderboard scores.

Practical Path Forward for Buyers

The immediate priorities are clearer than the headlines suggest. Enterprise leaders should inventory AI workloads by data sensitivity, latency profile, and failure tolerance, then map each to the cloud where guardrails and SLAs best match the risk. Procurement teams should update vendor assessments to account for OpenAI’s availability on Bedrock and, when applicable, Vertex AI, removing Azure-only caveats that formerly blocked adoption. Architecture groups should standardize request patterns (RAG templates, prompt injection defenses, structured outputs) so that swapping providers for capacity or cost does not trigger rewrites. Security teams should bind model access to existing secrets stores and policy engines rather than building parallel control planes.
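One way to picture the inventory-and-mapping step is as a simple filter over candidate clouds. The sketch below is an assumption-laden illustration: the class names, the control labels, the latency figures, and the neutral cloud names `cloud_a` and `cloud_b` are all hypothetical and do not describe any real provider's certifications or performance.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Workload:
    name: str
    required_controls: frozenset[str]  # compliance controls the workload needs
    max_latency_ms: int                # latency budget for the workload


@dataclass(frozen=True)
class CloudOption:
    name: str
    controls: frozenset[str]           # controls already in place with this cloud
    typical_latency_ms: int


def eligible_clouds(workload: Workload, options: Sequence[CloudOption]) -> list[str]:
    """Return clouds that satisfy every required control and the latency budget,
    ordered from lowest to highest typical latency."""
    matches = [
        o for o in options
        if workload.required_controls <= o.controls
        and o.typical_latency_ms <= workload.max_latency_ms
    ]
    return [o.name for o in sorted(matches, key=lambda o: o.typical_latency_ms)]


# Hypothetical inventory entries, for illustration only.
options = [
    CloudOption("cloud_a", frozenset({"HIPAA_BAA", "EU_RESIDENCY"}), 180),
    CloudOption("cloud_b", frozenset({"EU_RESIDENCY"}), 90),
]
claims_triage = Workload("claims-triage", frozenset({"HIPAA_BAA"}), 250)
marketing_copy = Workload("marketing-copy", frozenset(), 500)

claims_placement = eligible_clouds(claims_triage, options)
marketing_placement = eligible_clouds(marketing_copy, options)
```

In this toy inventory the regulated workload has a single eligible home, while the unregulated one can go wherever latency or cost is best, which is the distinction the mapping exercise is meant to surface.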

Strategy discussions also benefit from concrete sequencing. Pilots should target low-regret use cases with clear metrics, such as ticket deflection, code review acceleration, or knowledge search success, while legal sets data handling terms that apply uniformly across clouds. For agentic systems, teams should enforce state boundaries and auditability up front, using Bedrock Guardrails, Azure AI Content Safety, or Vertex AI’s safety settings as defaults. Finally, finance should establish cross-cloud cost visibility so leaders can compare like-for-like SKUs and adjust placement based on utilization, egress, and accelerator availability. Taken together, these steps turn the Microsoft–OpenAI restructuring from industry theater into an operational advantage grounded in dates, dollars, and delivery.
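A like-for-like cost comparison can be as simple as adding egress to the token bill. The following is a rough sketch with entirely hypothetical prices and usage volumes; `Placement` and `monthly_cost` are illustrative names, and real comparisons would also need to account for discounts, committed spend, and accelerator availability.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    cloud: str
    price_per_million_tokens: float  # USD, hypothetical
    egress_price_per_gb: float       # USD, hypothetical


def monthly_cost(p: Placement, million_tokens: float, egress_gb: float) -> float:
    """Token spend plus the cost of moving data out to reach the model."""
    return p.price_per_million_tokens * million_tokens + p.egress_price_per_gb * egress_gb


# Hypothetical scenario: cloud_a hosts the data (no egress), cloud_b has a
# lower token price but requires shipping data across clouds.
candidates = [
    Placement("cloud_a", price_per_million_tokens=10.0, egress_price_per_gb=0.0),
    Placement("cloud_b", price_per_million_tokens=9.0, egress_price_per_gb=0.09),
]
costs = {p.cloud: monthly_cost(p, million_tokens=500, egress_gb=20_000) for p in candidates}
cheapest = min(costs, key=costs.get)
```

With these invented numbers the cloud with the higher token price is still cheaper once egress is counted, which is the kind of result that per-SKU price sheets alone would hide.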