Screens flicker, order books refill, liquidity pivots, and a single millisecond stretches so long that price, flow, and intent rearrange themselves before most models complete a batch. In that moment, a “price” is not a number; it is a rolling conversation stitched from trades, quotes, funding shifts, and on-chain moves. Treat it as a snapshot, and the story disappears.

Yet decisions still need to land on time. Custodians must route orders, card programs must approve spend, and treasurers must rebalance. The cost of being a beat late is no longer theoretical; in a market that can jump on small flows, lag magnifies error while speed without context hardens false certainty.

The stakes are immediate because crypto markets now run as live feeds at institutional scale. Ethereum alone processes roughly 3 million transactions a day with more than 1 million active addresses, while total market capitalization hovered near $3 trillion by the end of 2025 after briefly topping $4 trillion earlier that year. That density forces a shift from batch analytics to streaming-first systems where AI interprets continuous inputs and explains them clearly.

This story follows the redesign of models, data pipelines, and governance that turns raw noise into signals. It examines fragile causality, skewed datasets, and operational demands, then shows how AI earns trust as a translator—surfacing what matters, when it matters, without overstating confidence.

A streaming market recasts fundamentals. Rolling windows, event-time joins, and late data handling replace nightly aggregates, while feature freshness competes with stability. Decay-aware features and adaptive baselines allow small, recent changes to outweigh older trends, but only if pipelines deliver features and inferences on time, within strict latency budgets. Freshness without context, however, invites spurious conviction: an alert can be perfectly prompt and perfectly wrong.
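One way to picture a decay-aware feature is an exponentially time-decayed mean keyed on event time. The sketch below is illustrative only: the `DecayedMean` class, its half-life parameter, and its treatment of late events (discounting them by how stale they already are) are assumptions made for this example, not a description of any particular production system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DecayedMean:
    """Exponentially time-decayed mean keyed on event time (hypothetical helper)."""
    half_life_s: float
    weighted_sum: float = 0.0
    weight: float = 0.0
    last_ts: Optional[float] = None

    def update(self, ts: float, value: float) -> float:
        if self.last_ts is None or ts >= self.last_ts:
            if self.last_ts is not None:
                # Age the existing state forward to the new event time.
                decay = 0.5 ** ((ts - self.last_ts) / self.half_life_s)
                self.weighted_sum *= decay
                self.weight *= decay
            self.last_ts = ts
            w = 1.0
        else:
            # Late event: do not rewind the clock, just discount the
            # observation by its age relative to the newest event seen.
            w = 0.5 ** ((self.last_ts - ts) / self.half_life_s)
        self.weighted_sum += w * value
        self.weight += w
        return self.weighted_sum / self.weight


feature = DecayedMean(half_life_s=10.0)
feature.update(ts=0.0, value=100.0)
latest = feature.update(ts=10.0, value=200.0)  # older point now counts half
```

With a ten-second half-life, an observation ten seconds old carries half the weight of a fresh one, so the feature leans toward recent prints without discarding history outright. Choosing the half-life is the freshness-versus-stability trade-off in miniature.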

High-frequency context sharpens the trade-offs. At this scale, compute and memory spend must be translated into on-time outputs, not just throughput. Systems that fail to watermark data or deduplicate bursts quietly corrupt features; schemas that drift mid-session can blind downstream models. Teams that survived these hits learned to enforce feature store contracts, maintain replay queues, and support rapid rollback when an upstream feed changes shape.
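Watermarking and deduplication can be sketched in a few lines. The example below assumes each event carries a unique identifier and an event-time stamp; the `StreamGate` name and the fixed allowed-lateness policy are hypothetical, and real stream processors offer richer variants of the same idea.

```python
class StreamGate:
    """Admits events by event time; rejects duplicates and too-late arrivals.

    Hypothetical sketch: the watermark trails the newest event time seen
    by a fixed allowed lateness, and anything older is rejected.
    """

    def __init__(self, allowed_lateness_s: float):
        self.allowed_lateness_s = allowed_lateness_s
        self.max_ts = float("-inf")
        self.seen = {}  # event_id -> event time, bounded by the watermark

    @property
    def watermark(self) -> float:
        return self.max_ts - self.allowed_lateness_s

    def admit(self, event_id: str, ts: float) -> str:
        if ts < self.watermark:
            return "late"
        if event_id in self.seen:
            return "duplicate"
        self.seen[event_id] = ts
        if ts > self.max_ts:
            self.max_ts = ts
            # Ids older than the watermark can never be admitted again,
            # so forgetting them keeps state bounded. (A linear scan is
            # used here for clarity, not speed.)
            wm = self.watermark
            self.seen = {k: v for k, v in self.seen.items() if v >= wm}
        return "ok"


gate = StreamGate(allowed_lateness_s=5.0)
results = [
    gate.admit("a", 100.0),  # first sighting
    gate.admit("a", 100.0),  # replayed burst
    gate.admit("c", 110.0),  # advances the watermark to 105
    gate.admit("d", 104.0),  # arrives behind the watermark
]
```

Events rejected as late need not be discarded; routing them to a replay queue preserves the option of recomputing features afterward.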

Market behavior adds another layer of complexity. Feedback-prone conditions often resemble “negative gamma,” where movement begets more movement and market makers hedge into trends rather than dampen them. Binance research highlighted that co-moves across assets commonly align in direction but not in magnitude, breaking naive correlation models. Single-signal bets suffer in this terrain, so ensembles, regime detectors, and uncertainty-aware outputs become table stakes.
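The gap between direction and magnitude is easy to see once the two are measured separately. The sketch below uses made-up return series purely for illustration; the function names and the numbers are assumptions, not figures from the research cited above.

```python
from statistics import median


def sign_agreement(a, b):
    """Share of periods in which both series moved in the same direction."""
    pairs = [(x, y) for x, y in zip(a, b) if x != 0 and y != 0]
    return sum((x > 0) == (y > 0) for x, y in pairs) / len(pairs)


def magnitude_ratios(a, b):
    """Per-period size of b's move relative to a's move."""
    return [abs(y) / abs(x) for x, y in zip(a, b) if x != 0]


# Made-up per-period returns, for illustration only.
major = [0.010, -0.020, 0.015, -0.005]
alt = [0.030, -0.010, 0.060, -0.020]

agreement = sign_agreement(major, alt)  # the two always move together...
ratios = magnitude_ratios(major, alt)   # ...but by very different amounts
typical_ratio = median(ratios)
ratio_spread = max(ratios) / min(ratios)
```

In this toy data the two series agree on direction in every period, yet the size of the second asset's move ranges from half to four times that of the first. A model that assumes a fixed sensitivity between the two would be right about the sign and wrong about the sizing.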

Market concentration skews modeling choices. With Bitcoin dominance around 59% and small caps representing roughly 7.1% of market share, signals concentrate in thick markets while thin markets suffer sparsity and higher noise. Without correction, models overweight frequently observed patterns and under-serve the long tail. Hierarchical architectures, with separate heads for deep-liquidity and thin-liquidity assets, reduce dominance bias when paired with reweighting and stratified sampling, and they clarify when to issue or suppress signals.
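Reweighting and stratified sampling can both be sketched with the standard library. The tier labels, function names, and sample sizes below are assumptions chosen for illustration; the weighting shown is a common inverse-frequency heuristic, not necessarily the scheme any given team uses.

```python
import random
from collections import Counter, defaultdict


def inverse_frequency_weights(tiers):
    """Weight each row so that every tier contributes equally in aggregate."""
    counts = Counter(tiers)
    n, k = len(tiers), len(counts)
    return [n / (k * counts[t]) for t in tiers]


def stratified_sample(rows, key, per_stratum, seed=0):
    """Draw up to `per_stratum` rows from each stratum, reproducibly."""
    rng = random.Random(seed)
    buckets = defaultdict(list)
    for row in rows:
        buckets[key(row)].append(row)
    sample = []
    for name in sorted(buckets):
        bucket = buckets[name]
        sample.extend(rng.sample(bucket, min(per_stratum, len(bucket))))
    return sample


# Hypothetical training rows: 8 from deep-liquidity assets, 2 from thin ones.
rows = [{"tier": "deep", "i": i} for i in range(8)]
rows += [{"tier": "thin", "i": i} for i in range(2)]

weights = inverse_frequency_weights([r["tier"] for r in rows])
batch = stratified_sample(rows, key=lambda r: r["tier"], per_stratum=2)
```

Here each deep-liquidity row receives a weight of 0.625 and each thin-liquidity row 2.5, so both tiers carry the same total weight in the loss. The stratified batch contains two rows per tier regardless of how lopsided the raw data is.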

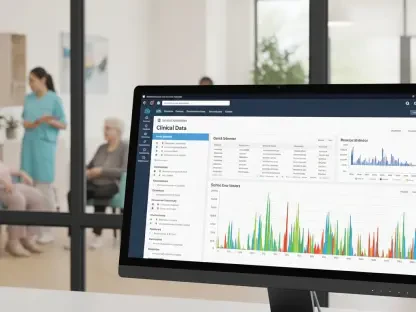

AI’s role shifts from decision-maker to interpreter. In blended environments such as payments, cards, and treasury, systems increasingly need structured signals, anomaly scores, and narrative context that operators can audit. Crypto card usage offered a visible example: volumes expanded roughly five-fold in 2025, reaching about $115 million in January 2026. The intersection with real-world spend demanded models that could read shifting conditions without whipsawing the user experience; throttle controls and abstain policies protected trust.

Institutional participation raised the bar. “Institutions expect high standards of compliance, governance, and risk management,” noted Richard Teng, Binance Co-CEO, in February 2026. That expectation extended across lineage, versioning, and reproducibility, and it reframed model changes as controlled events with auditable approvals. Model cards, rationale snippets, and performance slices by asset tier evolved from niceties to requirements, while continuous monitoring tracked drift, alert fatigue, and regime shifts.

Field-tested pipelines echoed these lessons. Event-time processing, bounded state for features, and incremental computation kept latency predictable. Low-latency inference stacks introduced A/B shadowing and rollback levers to derisk upgrades. Post-incident playbooks emphasized blameless reviews, clear escalation paths, and policy diffusion so the same failure mode did not reappear under new market stress.
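Bounded state and incremental computation can be illustrated with a rolling volume-weighted average price. The sketch below is a simplified assumption-laden example: the `RollingVWAP` class is hypothetical, it expects trades to arrive in event-time order with positive quantities, and it leaves late-data handling to an upstream stage.

```python
from collections import deque


class RollingVWAP:
    """Volume-weighted average price over a trailing event-time window.

    Hypothetical sketch. State is bounded by the window: each trade is
    added once and removed once, so an update costs amortized O(1)
    instead of rescanning the whole window.
    """

    def __init__(self, window_s: float):
        self.window_s = window_s
        self.trades = deque()  # (ts, price, qty), oldest first
        self.notional = 0.0
        self.volume = 0.0

    def add(self, ts: float, price: float, qty: float) -> float:
        self.trades.append((ts, price, qty))
        self.notional += price * qty
        self.volume += qty
        # Evict trades that have aged out of the window.
        cutoff = ts - self.window_s
        while self.trades and self.trades[0][0] <= cutoff:
            _, old_price, old_qty = self.trades.popleft()
            self.notional -= old_price * old_qty
            self.volume -= old_qty
        # The newest trade is always inside the window, so volume > 0.
        return self.notional / self.volume


vwap = RollingVWAP(window_s=10.0)
vwap.add(ts=0.0, price=100.0, qty=1.0)
vwap.add(ts=5.0, price=110.0, qty=1.0)
current = vwap.add(ts=12.0, price=120.0, qty=2.0)  # the ts=0 trade drops out
```

Running sums maintained by repeated addition and subtraction can accumulate floating-point drift over long sessions; periodically recomputing them from the retained trades is one way to keep that in check.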

Conclusion

The path forward demanded concrete moves rather than slogans. Teams prioritized streaming-first architectures with strict SLOs across the ingest, feature, inference, and notification stages; introduced multi-signal ensembles that blended order books, funding, flow metrics, and on-chain heuristics; and deployed regime switches that toggled between momentum, mean-reversion, and liquidity-shock modes. Prediction intervals and abstain policies curbed overreaction when regimes fractured.
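A regime switch combined with an abstain policy might look like the following sketch. Every threshold, input name, and rule here is a placeholder assumption for illustration; a real system would fit or calibrate these rather than hard-code them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Forecast:
    mean: float   # expected return over the horizon
    lower: float  # lower bound of the prediction interval
    upper: float  # upper bound of the prediction interval


def classify_regime(trend_z: float, spread_z: float) -> str:
    """Toy rule-based regime detector using hypothetical z-score inputs."""
    if spread_z > 3.0:       # spreads blown out: liquidity is impaired
        return "liquidity_shock"
    if abs(trend_z) > 1.0:   # persistent drift in one direction
        return "momentum"
    return "mean_reversion"


def decide(forecast: Forecast, regime: str, max_width: float) -> Optional[str]:
    """Return 'up', 'down', or None to abstain."""
    if regime == "liquidity_shock":
        return None  # stay silent while the market structure is in doubt
    if forecast.upper - forecast.lower > max_width:
        return None  # interval too wide to act on
    if forecast.lower > 0:
        return "up"
    if forecast.upper < 0:
        return "down"
    return None      # interval straddles zero: no directional call


regime = classify_regime(trend_z=1.8, spread_z=0.4)
signal = decide(Forecast(mean=0.004, lower=0.001, upper=0.007), regime, max_width=0.01)
```

The policy only speaks when the whole interval sits on one side of zero and is narrow enough to be useful. In every other case it returns nothing, which is the code-level form of letting silence beat a shaky alert.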

Bias management proved equally actionable. Hierarchical models handled thick and thin markets differently; reweighting and stratified sampling countered dominance; and explicit coverage thresholds clarified when silence beat a shaky alert. Governance closed the loop with versioned data, reproducible runs, and operator-facing explanations that supported audits.

In the end, the most durable value came when AI acted less like an oracle and more like a translator—turning torrents of real-time noise into timely, intelligible signals that operators and automated workflows could trust. Built this way, AI reduced latency penalties, contained model risk, and made continuous markets legible enough to support decisions that depended on both speed and restraint.